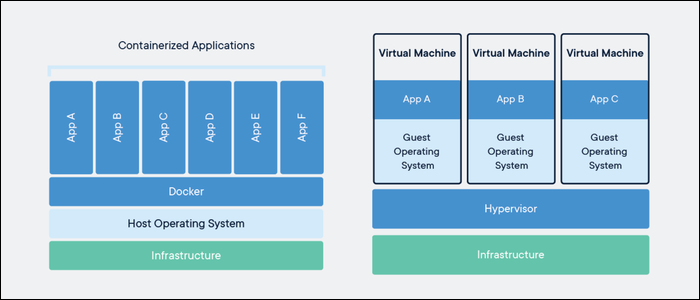

Docker containers serve a similar purpose to virtual machines, providing an isolated environment for applications to run in, but the two are fundamentally different technologies. We'll discuss the differences, and what makes Docker so useful.

What Makes Docker So Useful?

The main purpose of a virtual machine is to partition a large server into smaller chunks, and the important part is that it isolates the processes running on each VM. For example, your hosting provider could take a 32-core machine and split it into eight 4-core VMs that it sells to different customers. This reduces costs for everyone, and VMs are a great fit if you're running a lot of processes or need full SSH (root) access to a complete operating system.

However, if you're just running one app, you might be using more resources than necessary. To run that single app, the hypervisor has to boot an entire guest operating system, which means that 32-core machine is running eight copies of Ubuntu. On top of that, each instance carries its own virtualization overhead.

Docker offers a lighter-weight solution: containers provide isolation without the overhead of virtual machines. Each container runs in its own environment, sectioned off with Linux namespaces (with cgroups limiting how much CPU and memory it can use), but the important part is that the code in a container runs directly on the host, sharing the host's kernel. There's no hardware emulation or guest operating system involved.
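You can see these namespaces from Python on any Linux machine. The minimal sketch below (the function name namespace_ids is hypothetical) reads the symlinks under /proc/<pid>/ns, which the kernel exposes for each process; two processes share a namespace exactly when the identifiers match. Run on the host and then inside a container, the pid, net, and mnt identifiers should differ, even though both processes run on the same kernel.

```python
import os

# Namespace types the Linux kernel exposes under /proc/<pid>/ns.
NAMESPACE_TYPES = ("cgroup", "ipc", "mnt", "net", "pid", "user", "uts")


def namespace_ids(pid="self"):
    """Return {namespace type: identifier}, e.g. {"pid": "pid:[4026531836]"}.

    Returns an empty dict on systems without /proc (e.g. macOS, Windows).
    """
    base = f"/proc/{pid}/ns"
    ids = {}
    if not os.path.isdir(base):
        return ids
    for ns in NAMESPACE_TYPES:
        try:
            ids[ns] = os.readlink(os.path.join(base, ns))
        except OSError:
            # Unsupported by this kernel, or no permission to inspect pid.
            pass
    return ids


if __name__ == "__main__":
    for ns, ident in namespace_ids().items():
        print(f"{ns:7} {ident}")
```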

There's still a bit of overhead from networking and interfacing with the host system, but applications in Docker generally run close to bare-metal speeds, which is typically faster than the same app on a comparably sized VPS. You don't have to run eight copies of Ubuntu, only one, which makes it cheap to run many Docker containers on one host. Services like AWS's Elastic Container Service (with its Fargate launch type) and GCP's Cloud Run go a step further and let you run individual containers without provisioning an underlying server.

Containers package up all of the dependencies your app needs to run, including the libraries and binaries of the operating system's userland. That's why you can run a CentOS container on an Ubuntu server: both use the Linux kernel, which the container shares with the host, so the only difference is the binaries and libraries bundled into the image.
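A quick way to see the split between image and kernel: a Linux distribution describes itself in /etc/os-release (shell-style KEY=value lines), while the kernel version comes from the running kernel. This Python sketch (helper names are hypothetical) reads both. Inside a CentOS container on an Ubuntu host, it would report CentOS as the distribution but the Ubuntu host's kernel release.

```python
import platform
import shlex


def parse_os_release(text):
    """Parse the KEY=value format of /etc/os-release into a dict."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Values may use shell-style quoting; shlex strips the quotes.
        parts = shlex.split(value)
        info[key] = parts[0] if parts else ""
    return info


def describe_environment(path="/etc/os-release"):
    """Return (distribution name from the image, kernel release from the host)."""
    try:
        with open(path) as f:
            distro = parse_os_release(f.read()).get("PRETTY_NAME", "unknown")
    except OSError:
        distro = "unknown"
    # uname() reports the running kernel, which a container shares with its host.
    return distro, platform.release()


if __name__ == "__main__":
    print(describe_environment())
```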

The main difference from a VM is that you generally won't SSH into a container. You don't really need to: the configuration is all defined in the image's Dockerfile, and if you want to make updates, you build and deploy a new version of the image. (For debugging, docker exec can still open a shell inside a running container.)

Because this configuration lives in code, you can keep your server setup under version control with Git. And since each build produces a single image, it's easy to track different builds of your container. With Docker, the same image runs in development and in production, and on every other developer's machine, which goes a long way toward eliminating the classic "it works on my machine" problem.
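One common way to tie each image to the code that produced it is to tag the image with the Git commit it was built from. Here's a minimal Python sketch, assuming git and the docker CLI are installed; the image name myapp and both helper names are hypothetical, and the script only prints the build command rather than running it.

```python
import subprocess


def git_short_sha(repo_dir="."):
    """Return the abbreviated commit hash of HEAD in repo_dir."""
    result = subprocess.run(
        ["git", "rev-parse", "--short", "HEAD"],
        cwd=repo_dir, capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def docker_build_command(image_name, tag, context="."):
    """Return the argv for `docker build` that tags the image as name:tag."""
    return ["docker", "build", "-t", f"{image_name}:{tag}", context]


if __name__ == "__main__":
    try:
        tag = git_short_sha()
    except (OSError, subprocess.CalledProcessError):
        tag = "dev"  # not inside a Git repository, or git isn't installed
    cmd = docker_build_command("myapp", tag)
    print(" ".join(cmd))
    # subprocess.run(cmd, check=True)  # uncomment to actually build
```

Because the tag is derived from the commit, any running container can be traced back to the exact source it was built from.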

If you want to add another server to your cluster, you don't have to reconfigure it and reinstall all the dependencies you need. Once you build an image, you can spin up a hundred containers from it with little extra configuration. This also makes auto scaling straightforward: you add containers when load rises and remove them when it falls, so you pay only for the capacity you actually use, which can save you a lot of money.
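Because every container from the same image is interchangeable, scaling reduces to choosing a container count. The sketch below uses the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current × currentMetric / targetMetric), clamped to a minimum and maximum. It's an illustration, not how any particular cloud service implements auto scaling; real autoscalers also add tolerances and stabilization windows, which are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=100):
    """Proportional scaling: ceil(current * current_metric / target_metric),
    clamped to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    desired = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 containers averaging 90% CPU against a 60% target: scale out to 6.
    print(desired_replicas(4, 90, 60))
    # 10 containers averaging 15% CPU against a 60% target: scale in to 3.
    print(desired_replicas(10, 15, 60))
```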

Downsides of Docker

Of course, Docker isn't replacing virtual machines anytime soon. They're two different technologies, and virtual machines still have plenty of upsides.

Networking is generally more involved. A virtual machine usually gets its own network interface that behaves like a dedicated server's: you can easily configure firewalls, set applications to listen on certain ports, and run complicated workloads like load balancing with HAProxy. With Docker, many containers share one host, so by default each sits on a private virtual network and you have to publish (map) its ports to ports on the host to reach it, which makes this a bit more complicated. Usually, though, container-specific services like AWS's Elastic Container Service and GCP's Cloud Run handle this networking as part of their service.
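By default each container has its own network namespace, so several containers can all listen on port 80 internally; what has to be unique is the host port each one is published on. The hypothetical helper below (publish_ports is not part of any Docker library) sketches that bookkeeping by generating the "-p HOST:CONTAINER" arguments that docker run accepts, for a hypothetical image named myapp.

```python
def publish_ports(containers, container_port=80, first_host_port=8080):
    """Give each container a distinct host port forwarding to container_port.

    Returns {name: ["-p", "HOST:CONTAINER"]}. All containers can use the same
    internal port; only the host-side ports must differ.
    """
    mapping = {}
    for offset, name in enumerate(containers):
        host_port = first_host_port + offset
        mapping[name] = ["-p", f"{host_port}:{container_port}"]
    return mapping


if __name__ == "__main__":
    # Print commands for three replicas of the hypothetical image "myapp".
    for name, port_args in publish_ports(["web1", "web2", "web3"]).items():
        print(" ".join(["docker", "run", "-d", "--name", name, *port_args, "myapp"]))
```

A load balancer such as HAProxy on the host could then forward traffic to ports 8080 through 8082.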

On non-Linux hosts, performance is only on par with virtual machines. You can't run a Linux container natively on a Windows host, so Docker Desktop for Windows actually runs containers inside a lightweight Linux VM, using the Windows Subsystem for Linux (WSL 2) or Hyper-V. In this case, Docker essentially provides a layer of abstraction on top of a virtual machine.

Persistent data is also a bit complicated. Docker containers are designed to be stateless: anything written to a container's own filesystem is lost when the container is removed. Volume mounts address this by mounting a directory from the host into the container, and services like ECS let you mount shared volumes. However, this still isn't as simple as storing data on a regular server, and you probably wouldn't want to run a production database in Docker.
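A bind mount is passed to docker run with -v HOST_PATH:CONTAINER_PATH (optionally with :ro for read-only). This Python sketch builds such a command; the image name myapp:1.0, the paths, and the helper name are hypothetical. The --rm flag deletes the container when it exits, yet anything written under the container path survives on the host.

```python
import os


def docker_run_with_volume(image, host_dir, container_dir, read_only=False):
    """Return a `docker run` argv that bind-mounts host_dir at container_dir."""
    # Resolve to an absolute path: a bare name like "data" would be
    # treated by Docker as a named volume rather than a host directory.
    host_dir = os.path.abspath(host_dir)
    spec = f"{host_dir}:{container_dir}"
    if read_only:
        spec += ":ro"
    # --rm removes the container on exit; data in host_dir is kept.
    return ["docker", "run", "--rm", "-v", spec, image]


if __name__ == "__main__":
    print(" ".join(docker_run_with_volume("myapp:1.0", "/srv/myapp-data", "/data")))
```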