
Everyone's moving their applications to the cloud nowadays. Starting fresh is easy, but what if you have existing infrastructure that you need to migrate? Recreating your network in the cloud offers you a chance to modernize your architecture and make use of the many benefits of cloud providers like AWS.

What Makes The Cloud So Useful?

In its most basic form, the "cloud" is just any Infrastructure-as-a-Service (IaaS) provider that rents you hardware to run your applications on. Many companies run their networks from their own on-premises servers; renting servers from a third party instead gives you more flexibility and the option to scale as needed.

However, modern cloud providers like Amazon Web Services (AWS), Google Cloud Platform (GCP), Microsoft Azure, and DigitalOcean offer much more than servers for a fee. They've made it their business to run their operations efficiently and to give developers easy-to-use tools that make building applications much simpler.

For example, running your servers in the cloud can actually save you money. Rented servers will, of course, be more expensive core-for-core than hardware you own (every provider marks up its products somewhat), but providers like AWS have advanced auto-scaling systems. These let you fully automate your server lifecycle, creating and destroying servers as demand fluctuates, often multiple times a day. Instead of paying for peak capacity around the clock, you can scale down during off-hours and save money overall.
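
To make the savings argument concrete, here is a back-of-the-envelope sketch in Python that compares provisioning for peak capacity all day with letting capacity follow demand. The hourly demand profile and the $0.10 per server-hour rate are made-up illustrative numbers, not real AWS figures.

```python
# Hypothetical demand: servers needed for each hour of one day (illustrative only).
HOURLY_DEMAND = [2] * 8 + [6] * 4 + [10] * 4 + [6] * 4 + [3] * 4


def fixed_capacity_hours(demand: list[int]) -> int:
    """Server-hours used if you provision for the daily peak around the clock."""
    return max(demand) * len(demand)


def autoscaled_hours(demand: list[int]) -> int:
    """Server-hours used if capacity tracks demand hour by hour."""
    return sum(demand)


def daily_cost(server_hours: int, price_per_hour: float) -> float:
    return server_hours * price_per_hour


if __name__ == "__main__":
    price = 0.10  # hypothetical $/server-hour, not a real AWS price
    peak = fixed_capacity_hours(HOURLY_DEMAND)
    scaled = autoscaled_hours(HOURLY_DEMAND)
    print(f"peak-provisioned: {peak} server-hours, ${daily_cost(peak, price):.2f}")
    print(f"auto-scaled:      {scaled} server-hours, ${daily_cost(scaled, price):.2f}")
```

With this made-up profile, the auto-scaled fleet uses 116 server-hours instead of 240, so it would stay cheaper even at nearly twice the hourly rate.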

Setting up auto-scaling also creates additional servers automatically when you experience unexpectedly high load. This makes your network highly scalable and makes downtime from traffic spikes far less likely. The same scalable mentality applies across services. For example, AWS Lambda functions scale automatically out of the box: AWS runs the code for you and adds capacity as the request rate climbs, up to your account's concurrency limit, so you don't have to provision servers for peak traffic yourself.

The cloud also saves money through task automation. For example, AWS's Relational Database Service (RDS) is a managed SQL database service that automates much of the routine work of running databases, such as backups, software patching, and (with Multi-AZ deployments) failover to a standby instance. If you already pay someone to handle these tasks on your own servers, with RDS that person could manage more databases and spend the rest of their time on more valuable work.

And finally, cloud infrastructure is often much more durable than on-premises solutions. This is partly due to services like S3, which stores data redundantly across multiple devices and facilities, but it also applies to high-availability network design. It's easy to design failover cases where a backup server takes over in the event of a hardware failure. And in the worst case, backing up everything in your network is straightforward: the EBS volumes that provide storage for your servers can be snapshotted automatically on a schedule, with the snapshots stored in S3.

For example, AWS's DNS service, Route 53, supports health checks that monitor your endpoints and automatically shift traffic at the DNS level if a server becomes unresponsive. Auto-scaling groups also support health checks and can terminate and replace a server that's having problems.
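
Failover routing boils down to a simple rule: answer DNS queries with the primary endpoint while its health check passes, and with the secondary endpoint otherwise. The Python sketch below models that rule; the `Endpoint` class, the example addresses, and the threshold of three consecutive failures are assumptions for illustration, not Route 53's API.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    address: str
    consecutive_failures: int = 0

    def record_check(self, ok: bool) -> None:
        # A passing check resets the counter; a failing one increments it.
        self.consecutive_failures = 0 if ok else self.consecutive_failures + 1

    def healthy(self, failure_threshold: int = 3) -> bool:
        # Hypothetical threshold: unhealthy after 3 failures in a row.
        return self.consecutive_failures < failure_threshold


def resolve(primary: Endpoint, secondary: Endpoint) -> str:
    """Return the address a failover DNS record would answer with."""
    return primary.address if primary.healthy() else secondary.address


if __name__ == "__main__":
    primary = Endpoint("203.0.113.10")
    secondary = Endpoint("203.0.113.20")
    for ok in [True, False, False, False]:
        primary.record_check(ok)
    print(resolve(primary, secondary))  # 203.0.113.20
```

To keep it short, the sketch treats a single successful check as recovery; real health checkers typically require several consecutive successes before marking an endpoint healthy again.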

Modernizing Your Architecture With Cloud Solutions

Moving to the cloud is a big step, but the extra tools it gives you are a good reason to examine your architecture and see whether any parts of it would benefit from a redesign.

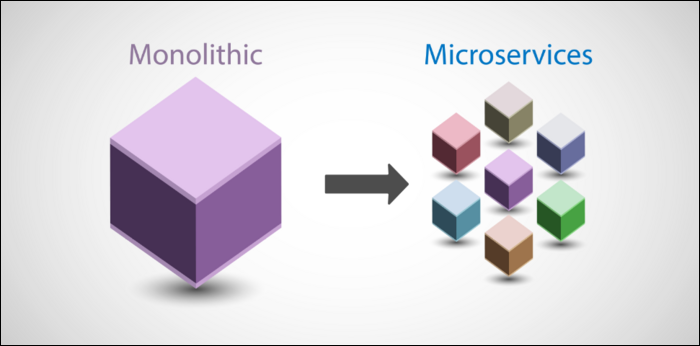

For example, many older applications are designed to be "monolithic," i.e., packaged into one big program that runs on your server. This program might talk to a local or remote database, handle incoming web requests, perform queries, look up information, process queues, and do whatever else your use case requires.

This can be good for getting something up and running quickly, but eventually it becomes a problem: it's inefficient. Because a large monolithic application handles many complex tasks at once, one part of it will always become the bottleneck for the rest. When that happens, you're usually forced to scale out the whole application, provisioning more servers and running more instances. This can be incredibly wasteful if the other components in the program aren't under nearly as much stress.

So, the solution many engineers are moving toward is "microservices": individual services, each with a clear, fixed goal. Perhaps one part of your web application handles video processing, and it comes under far more stress than the rest when users upload long videos. You could split this part into its own microservice, run it separately, and simply call it when needed. Now that component can scale entirely on its own; you may need three servers running the video processing service, but only two servers running the rest of the application. This makes more efficient use of your resources and is a more scalable design overall.
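
Here's a rough Python sketch of why independent scaling uses fewer resources, using the three-versus-two server split from the example above. The load figures, per-server capacity, and vCPU sizes are hypothetical, and it assumes a monolith instance needs the combined resources of every component it contains.

```python
import math

# Hypothetical components: (load in requests/sec, requests/sec one server handles, vCPUs per server)
COMPONENTS = {
    "video_processing": (300, 100, 4),
    "web_app": (200, 100, 4),
}


def servers_needed(load: float, capacity: float) -> int:
    return math.ceil(load / capacity)


def monolith_vcpus(components: dict) -> int:
    # Every monolith instance carries every component, so the busiest
    # component sets the instance count for the whole application.
    count = max(servers_needed(load, cap) for load, cap, _ in components.values())
    per_instance = sum(vcpus for _, _, vcpus in components.values())
    return count * per_instance


def microservice_vcpus(components: dict) -> int:
    # Each service is sized independently from its own load.
    return sum(servers_needed(load, cap) * vcpus for load, cap, vcpus in components.values())


if __name__ == "__main__":
    print("monolith:", monolith_vcpus(COMPONENTS), "vCPUs")
    print("microservices:", microservice_vcpus(COMPONENTS), "vCPUs")
```

With these numbers, the monolith needs 24 vCPUs because the video component forces three full copies of the whole application, while the split design needs 20.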

What Services Should I Look Into?

Regardless of whether you opt for a microservices design, other cloud solutions can be very helpful.

We'll discuss some of AWS's services, as AWS is the industry leader, especially in the number of services it offers. However, most of the major services have equivalents at other cloud providers like Azure, GCP, and DigitalOcean.

Cloud Object Storage (S3)

Most on-premises solutions use block or file storage, where data is stored as files on disks and shared over the network. AWS's Simple Storage Service (S3) instead uses object storage, and AWS's scale allows it to hold enormous numbers of files.

S3 doesn't have traditional folders, though object keys containing slashes (like uploads/2021/cat.jpg) mostly work like them. Instead of offering direct access to an underlying drive, S3 simply lets you store a file in the cloud, inside a bucket, under a key. That's it, but this simple design allows for great flexibility.
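
Because S3 has no directory tree, "folders" are really just shared key prefixes. When you list a bucket with a prefix and a `/` delimiter (in boto3, the `list_objects_v2` call with its `Prefix` and `Delimiter` parameters), S3 rolls keys up into common prefixes. The pure-Python function below only mimics that grouping over an in-memory list of example keys, so you can see the idea without an AWS account; it is not the S3 API itself.

```python
def list_folder(keys: list[str], prefix: str = "", delimiter: str = "/") -> tuple[list[str], list[str]]:
    """Mimic how S3 groups keys into "folders" when listing with a prefix and delimiter.

    Returns (files directly under the prefix, "subfolder" prefixes).
    """
    files, folders = [], set()
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        if delimiter in rest:
            # Everything up to and including the next delimiter is a "common prefix".
            folders.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
        else:
            files.append(key)
    return files, sorted(folders)


if __name__ == "__main__":
    keys = [
        "uploads/2021/cat.jpg",
        "uploads/2021/dog.jpg",
        "uploads/2022/bird.png",
        "uploads/readme.txt",
        "logs/app.log",
    ]
    print(list_folder(keys, "uploads/"))
    # (['uploads/readme.txt'], ['uploads/2021/', 'uploads/2022/'])
```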

For example, say your application allows user-uploaded content. Storing images in S3 would be a great option, and you can even make them available over the internet using AWS's CloudFront content delivery network.

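Switching to S3-based storage can take some work, but there are hybrid solutions like AWS Storage Gateway, which lets on-premises applications keep using standard file protocols such as NFS and SMB while the data is stored in S3.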

Cloud Functions

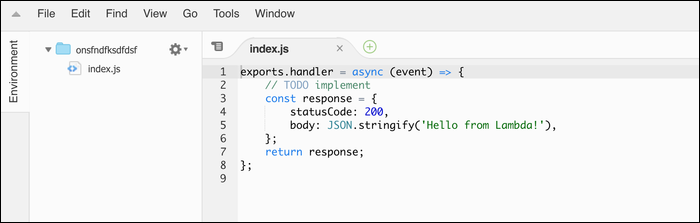

Cloud functions like AWS Lambda are incredibly useful: they let you run code in the cloud without thinking about servers. You simply request that a function be executed, either directly or through a service like AWS's API Gateway, and Lambda runs it on AWS's servers.
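
For a sense of what a Lambda function looks like, here's a minimal Python handler of the kind API Gateway can invoke through a proxy integration. Lambda passes the request in as `event` and API Gateway turns the returned dictionary into an HTTP response. The function name `lambda_handler` and the `name` query parameter are illustrative choices; you tell Lambda which handler to call when configuring the function.

```python
import json


def lambda_handler(event, context):
    """Minimal handler for an API Gateway proxy integration (sketch)."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local test with a hand-built event; no AWS account needed.
    fake_event = {"queryStringParameters": {"name": "Lambda"}}
    print(lambda_handler(fake_event, None))
```

Because the handler is just a function, you can test it locally with a hand-built event like this before deploying it.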

You pay per request, plus a charge based on how long your function runs and how much memory you allocate to it (measured in GB-seconds). However often you call the function, Lambda scales with the number of calls, within your account's concurrency quota.
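
The duration charge is easier to reason about with the arithmetic written out. This Python sketch estimates GB-seconds and a rough bill; the prices are parameters you'd fill in from the current AWS Lambda pricing page (the values in the test are placeholders), and it ignores the free tier and how AWS rounds each invocation's duration.

```python
def lambda_gb_seconds(invocations: int, avg_duration_ms: float, memory_mb: int) -> float:
    """Duration usage in GB-seconds: memory allocated (GB) x run time (s), over all calls."""
    # Multiply first, then divide once, to keep integer inputs exact.
    return invocations * avg_duration_ms * memory_mb / (1000 * 1024)


def lambda_cost(invocations: int, avg_duration_ms: float, memory_mb: int,
                price_per_gb_second: float, price_per_million_requests: float) -> float:
    """Rough bill: duration charge plus per-request charge (ignores the free tier)."""
    duration = lambda_gb_seconds(invocations, avg_duration_ms, memory_mb) * price_per_gb_second
    requests = invocations / 1_000_000 * price_per_million_requests
    return duration + requests


if __name__ == "__main__":
    # 1 million calls, 200 ms each, 512 MB of memory allocated:
    print(lambda_gb_seconds(1_000_000, 200, 512))  # 100000.0
```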

Cloud functions can easily automate simple tasks in your network. If you have a cron job script running on one of your servers, consider moving it to Lambda and triggering it on a schedule with Amazon EventBridge. Of course, Lambda isn't limited to simple scripts. It's very powerful and can be used to build robust application backends.
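
One low-risk way to move a cron job is to separate the job's logic from how it's triggered, so the same code can run from cron today and from a scheduled Lambda invocation later. In this hypothetical example, `purge_expired` is the job's real work and `SESSIONS` is an in-memory stand-in for whatever database your script actually touches; a real function would read from and write to that persistent store, since Lambda doesn't reliably keep in-memory state between invocations.

```python
from datetime import datetime, timedelta, timezone


def purge_expired(sessions: dict[str, datetime], now: datetime, max_age: timedelta) -> list[str]:
    """The job's actual work, kept free of scheduling code so it's easy to test."""
    expired = [sid for sid, created in sessions.items() if now - created > max_age]
    for sid in expired:
        del sessions[sid]
    return expired


# Hypothetical stand-in for your real database.
SESSIONS: dict[str, datetime] = {}


def lambda_handler(event, context):
    # Invoked by a scheduled rule instead of cron.
    removed = purge_expired(SESSIONS, datetime.now(timezone.utc), timedelta(days=30))
    return {"removed": len(removed)}


if __name__ == "__main__":
    # During the migration, cron can still run the same entry point.
    print(lambda_handler({}, None))
```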

Load Balancers & Auto-Scaling

Load balancers are network devices that split traffic between servers. Traditionally, you'd have to set up a server and configure load balancing yourself using a program like HAProxy. On AWS, load balancers are built into the network; you simply turn one on and pay an hourly fee plus usage-based charges for it.
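
Round robin is one of the simplest strategies a load balancer can use to split traffic: each new request goes to the next server in the list. The toy Python class below shows the idea; the server addresses are placeholders, and a real load balancer would also skip servers that fail health checks.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy load balancer: hands each new request to the next server in turn."""

    def __init__(self, servers: list[str]):
        if not servers:
            raise ValueError("need at least one server")
        self._servers = cycle(servers)

    def pick(self) -> str:
        return next(self._servers)


if __name__ == "__main__":
    lb = RoundRobinBalancer(["10.0.1.10", "10.0.1.11", "10.0.1.12"])
    print([lb.pick() for _ in range(4)])
    # ['10.0.1.10', '10.0.1.11', '10.0.1.12', '10.0.1.10']
```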

Auto-scaling is another feature that works hand in hand with load balancers. Rather than keeping a static list of servers, the pool grows and shrinks with traffic demand: servers are added and removed as needed, and the load balancer sends requests to whichever servers are currently in the pool.
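
To illustrate how a scaling policy might decide on the pool size, here's a simplified proportional rule in Python: resize the fleet so average CPU lands near a target, clamped to a minimum and maximum size. It's similar in spirit to AWS's target-tracking policies but is not their exact algorithm; the function name and parameters are hypothetical, and real policies also account for things like instance warm-up time.

```python
import math


def desired_capacity(current: int, avg_cpu: float, target_cpu: float,
                     min_size: int, max_size: int) -> int:
    """Proportional scaling rule (sketch): size the fleet so average CPU nears the target."""
    if current <= 0:
        return min_size
    # If 4 servers run at 90% and we want 50%, we need about 4 * 90 / 50 = 7.2 -> 8 servers.
    wanted = math.ceil(current * avg_cpu / target_cpu)
    return max(min_size, min(max_size, wanted))


if __name__ == "__main__":
    print(desired_capacity(4, avg_cpu=90, target_cpu=50, min_size=2, max_size=10))  # 8
    print(desired_capacity(4, avg_cpu=20, target_cpu=50, min_size=2, max_size=10))  # 2
```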

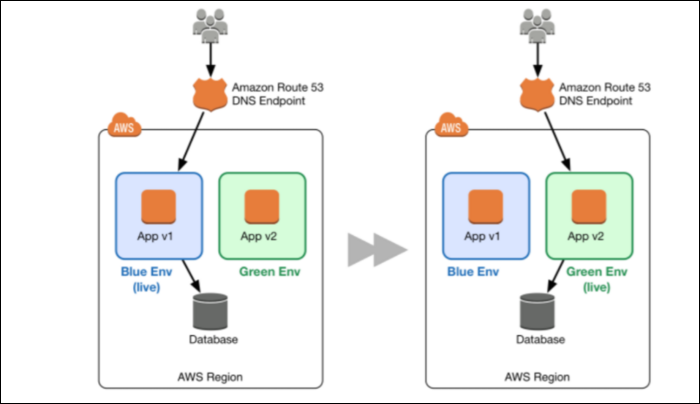

This has a lot of benefits, as we've already covered, but it also has far-reaching effects on how you use and update your network. Because your server setup process is automated, you can do blue/green deployments, where servers are updated by launching an entirely new set of servers, waiting for them to come online, and then switching traffic over to them, often gradually, so you can catch any issues before all users are affected.
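
The gradual switchover can be sketched as a loop that moves a growing share of traffic to the new ("green") servers and rolls back to the old ("blue") ones at the first sign of trouble. This is a hypothetical Python outline: the step percentages and the `green_is_healthy` callback stand in for whatever weights and metrics your setup uses (on AWS, weighted target groups on an Application Load Balancer are one way to split traffic).

```python
from typing import Callable


def shift_traffic(steps: list[int], green_is_healthy: Callable[[int], bool]) -> int:
    """Move traffic to green in steps; roll back on the first failed check.

    Returns the final percentage of traffic on green (0 after a rollback).
    """
    green_percent = 0
    for percent in steps:
        # In a real deployment, you'd update load balancer weights here
        # and watch error rates for a while before checking health.
        green_percent = percent
        if not green_is_healthy(percent):
            return 0  # roll back: send everything to the old (blue) servers
    return green_percent


if __name__ == "__main__":
    print(shift_traffic([10, 50, 100], lambda p: True))    # 100
    print(shift_traffic([10, 50, 100], lambda p: p < 50))  # 0
```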

If there's one thing we recommend everyone set up for sure, it's auto-scaling of your major EC2 services.

Automated CI/CD Pipelines

Continuous Integration/Continuous Deployment (CI/CD) is the practice of automatically building and testing your application, then deploying it to your servers, whenever you push changes to source control.

Basically, you push a commit to GitHub (or whatever other repository host you use), and a service like AWS CodePipeline kicks off a build, typically on a build server provided by AWS CodeBuild. That server builds and tests your application, and if everything passes, the finished build is deployed to your servers. If you have auto-scaling set up, this can be done as a blue/green deployment, with the option for quick and easy rollback if necessary.
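
The core idea of a pipeline is that each stage gates the next: nothing is deployed unless the build and tests succeed. The short Python sketch below models that flow with hypothetical stage functions; it isn't CodePipeline's API, just the basic stop-on-failure control flow.

```python
from typing import Callable

# A stage is a name plus a function that returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, action in stages:
        if not action():
            print(f"stage '{name}' failed; later stages skipped")
            break
        completed.append(name)
    return completed


if __name__ == "__main__":
    stages = [
        ("build", lambda: True),
        ("test", lambda: False),   # a failing test suite...
        ("deploy", lambda: True),  # ...means this never runs
    ]
    print(run_pipeline(stages))  # ['build']
```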

Built-In Content Delivery Network (CDN)

Having a CDN can seriously speed up your delivery times, because copies of your content are cached on edge servers close to your users. Since AWS is basically a worldwide computing superpower, its CDN, CloudFront, has edge locations all over the globe. Many other cloud providers have similar solutions; Google's Cloud CDN, for example, is well regarded for speed, partly because it runs on Google's own global network.
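
Edge caching is what makes a CDN fast: the first request for a file goes back to your origin server, and later requests reaching the same edge location are served from a cached copy until it expires. The toy Python class below models one edge node with a time-to-live (TTL); the class and its parameters are hypothetical, and real CDNs take TTLs from their cache settings and from headers such as Cache-Control.

```python
import time
from typing import Callable


class EdgeCache:
    """Toy CDN edge node: serve cached copies until their TTL expires."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, bytes]] = {}
        self.origin_hits = 0

    def get(self, path: str) -> bytes:
        now = self._clock()
        cached = self._store.get(path)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # cache hit: no trip back to the origin server
        # Cache miss or expired copy: fetch from origin and cache it.
        self.origin_hits += 1
        body = self._fetch(path)
        self._store[path] = (now, body)
        return body
```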

Can You Migrate Without Downtime?

Migrating will be a long and complicated process, but it doesn't have to mean extended downtime. You're likely to have a bit of downtime, but the process can be made fairly seamless.

You can adopt one of two strategies: either move all your servers at once and switch the entire network over, or move your applications to the cloud piece by piece, updating them to use the new services as you go.

The second option results in a hybrid setup, and it's what many large companies choose, since it's more cost-effective to move only the things that benefit most. AWS has many services designed to integrate on-premises hardware with the cloud.

The first option is easier for small deployments, and services like AWS Application Migration Service simplify it by replicating a fleet of servers onto EC2. You'll likely still need some code and configuration changes, but the service can move your whole network into a testing environment where you can set everything up, then perform the cutover when you're ready.

Either way, moving to the cloud is a major decision that should be well researched and backed by a clear plan. Every setup is different, so look into the best practices for the kinds of applications you're planning to run.