If you're transitioning from AWS to Google Cloud Platform, you might have a lot of data stored in S3 buckets. Luckily, Google provides a tool for automatically transferring the bucket's contents to their own Cloud Storage platform.

Transferring an S3 Bucket to Cloud Storage

Cloud Storage works very similarly to AWS's S3 service, and in most cases it should serve as a drop-in replacement for S3, with some minor tweaking to client applications. Google provides a great guide to migrating S3-based client applications over to Cloud Storage.

However, you'll also need to transfer each S3 bucket to a Cloud Storage bucket. This process can take a while for large buckets, but it can be automated pretty easily using the data transfer tools built into GCP.

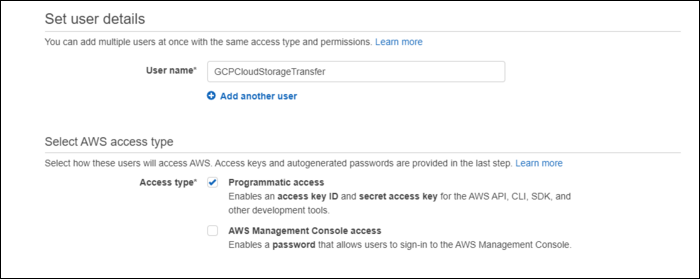

On the AWS side of things, you'll need to create a service user that can access the S3 buckets. You can use an existing one, but making a new one is pretty easy, and you can delete it once the whole process is over. From the IAM Management Console (the AWS one), create a new user and give it programmatic access, which will create an access key ID and secret access key.

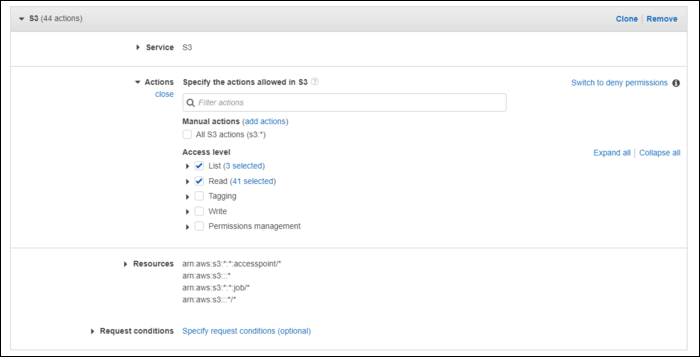

You can give it the AmazonS3FullAccess policy, but it's better to create a new policy with only read and list permissions for the buckets you will be transferring:

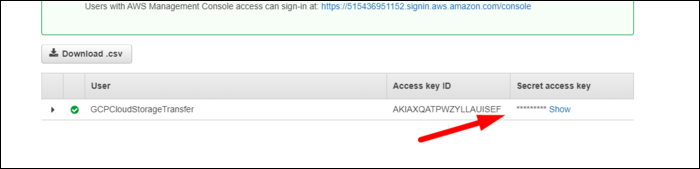
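As a rough sketch of what such a read-and-list policy might look like, the Python snippet below builds an IAM policy document for a list of buckets and prints it as JSON that you could paste into the policy editor. The function name `read_only_policy` and the bucket name `my-source-bucket` are placeholders, and the exact set of actions is an assumption; check Google's current Storage Transfer Service documentation for the permissions it requires.

```python
import json


def read_only_policy(bucket_names):
    """Build an IAM policy document granting list/read access to the given buckets.

    Hypothetical helper for illustration; the action list is an assumption.
    """
    bucket_arns = [f"arn:aws:s3:::{name}" for name in bucket_names]
    object_arns = [f"{arn}/*" for arn in bucket_arns]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Bucket-level permissions: listing keys and locating the bucket.
                "Effect": "Allow",
                "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
                "Resource": bucket_arns,
            },
            {
                # Object-level permission: reading object contents.
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": object_arns,
            },
        ],
    }


if __name__ == "__main__":
    # "my-source-bucket" is a placeholder bucket name.
    print(json.dumps(read_only_policy(["my-source-bucket"]), indent=2))
```

Because the policy grants no write or delete actions, a transfer that tries to remove objects from the source bucket would fail with these credentials.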

Click next to create the user, and keep the tab with the access key and secret open.

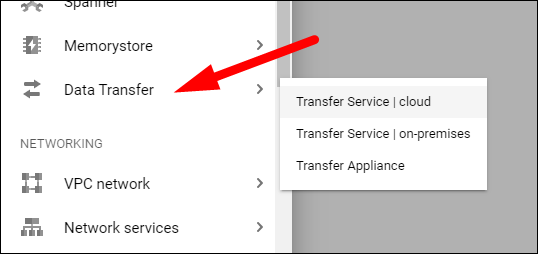

Now, head over to Google Cloud Platform, and select Data Transfer > Transfer Service from the sidebar.

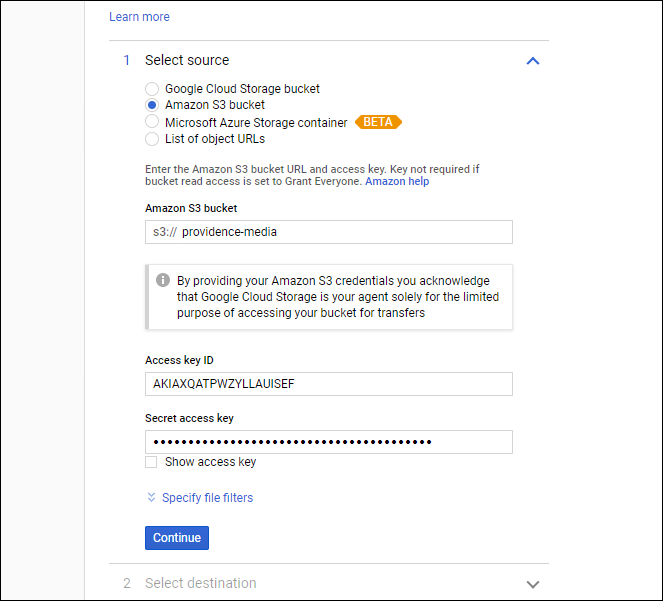

Select "Amazon S3 Bucket," enter the bucket name, and paste in the access key ID and secret access key from the AWS tab you left open.

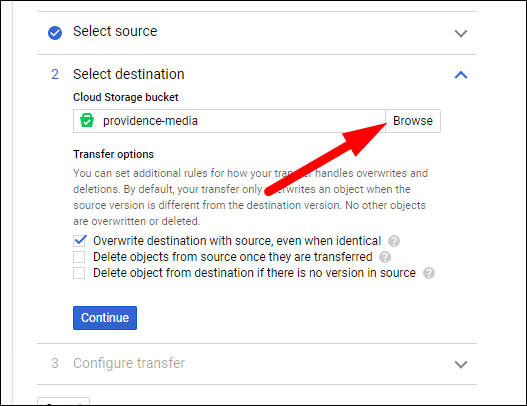

For the destination bucket, you'll likely have to create a new one. Click "Browse," and create a new bucket with the permissions and settings that you would like to use.

You have a few options for the transfer that you can check here. The first will overwrite any existing files in the destination bucket that have the same name. This shouldn't matter much with a new destination bucket. The second will remove items from the source bucket once the transfer is completed. If you're still working on moving client applications over to the new infrastructure, you'll want to make sure this is unchecked (and if you only gave the IAM user read/list access, this won't work anyway). The third will delete anything in the destination bucket that isn't also in the source bucket. Again, this shouldn't matter for new buckets.

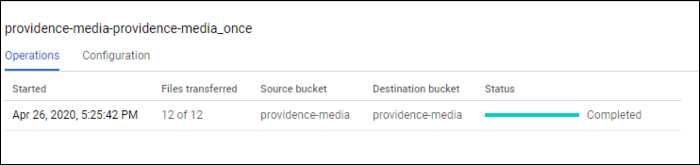
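If you later script transfers instead of using the console, the three checkboxes correspond, as far as I can tell, to the `transferOptions` fields of a Storage Transfer API job. The sketch below is one way to build that object in Python; the helper name `transfer_options` is made up for illustration, and the mapping from checkbox to field is an assumption based on the field names.

```python
def transfer_options(overwrite=False, delete_from_source=False, mirror_destination=False):
    """Map the three console checkboxes to a transferOptions dict.

    Hypothetical helper; field names follow the Storage Transfer REST API.
    """
    # The API treats deleting from the source and deleting destination-only
    # objects as mutually exclusive, so reject that combination early.
    if delete_from_source and mirror_destination:
        raise ValueError("choose either delete_from_source or mirror_destination, not both")
    return {
        # Checkbox 1: overwrite destination objects that share a name.
        "overwriteObjectsAlreadyExistingInSink": overwrite,
        # Checkbox 2: remove objects from the source after they are copied.
        "deleteObjectsFromSourceAfterTransfer": delete_from_source,
        # Checkbox 3: remove destination objects that are not in the source.
        "deleteObjectsUniqueInSink": mirror_destination,
    }


if __name__ == "__main__":
    # Safe defaults for a migration that is still in progress.
    print(transfer_options())
```

Leaving all three set to false is the conservative choice while client applications still read from S3.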

Click "Continue," and then click "Create." The transfer should begin automatically. If there are only a few items, it should only take a few minutes. You can view the transfer status from the Data Transfer console.

You will have to repeat this process for each S3 bucket. If you have way too many S3 buckets for that to be feasible, you'll want to look into automating the entire transfer using the Storage Transfer API.
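To give a feel for what that automation could look like, here is a minimal Python sketch that builds one transfer job request body per bucket, in the shape the Storage Transfer API's `transferJobs.create` method accepts. It only constructs the JSON; actually submitting it (for example with a Google API client library) is left out. The function name, project ID, bucket names, and credentials are all placeholders, and setting the same start and end date is assumed here to produce a one-time run.

```python
import datetime
import json


def transfer_job_body(project_id, s3_bucket, gcs_bucket,
                      access_key_id, secret_access_key, start=None):
    """Build a one-time S3-to-Cloud-Storage transfer job request body.

    Hypothetical helper for illustration only.
    """
    start = start or datetime.date.today()
    day = {"year": start.year, "month": start.month, "day": start.day}
    return {
        "description": f"Copy s3://{s3_bucket} to gs://{gcs_bucket}",
        "status": "ENABLED",
        "projectId": project_id,
        # Same start and end date: the job is scheduled to run once.
        "schedule": {"scheduleStartDate": day, "scheduleEndDate": day},
        "transferSpec": {
            "awsS3DataSource": {
                "bucketName": s3_bucket,
                "awsAccessKey": {
                    "accessKeyId": access_key_id,
                    "secretAccessKey": secret_access_key,
                },
            },
            "gcsDataSink": {"bucketName": gcs_bucket},
        },
    }


if __name__ == "__main__":
    # Placeholder mapping of source S3 buckets to destination buckets.
    buckets = {"my-s3-assets": "my-gcs-assets", "my-s3-logs": "my-gcs-logs"}
    for source, destination in buckets.items():
        body = transfer_job_body("my-gcp-project", source, destination,
                                 "EXAMPLE_KEY_ID", "EXAMPLE_SECRET")
        print(json.dumps(body, indent=2))
```

In a real script you would load the AWS credentials from a secret store or environment variables rather than hard-coding them, and the destination buckets would need to exist before the jobs run.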