
Migrating databases is something every system administrator will have to do at some point. Luckily, MongoDB provides built-in commands for creating backups and restoring from them, which makes moving to a new server easier.

Using mongodump to Create a Backup

mongodump is a simple command that exports a database and its collections to a set of BSON files that you can restore from later. Because the dump only captures the data as it was when the command ran, this approach requires some downtime while the backup is made and the new server is brought up.

If you can't afford downtime, you can instead migrate at the cluster level: add the new server as a member of your replica set, let it sync, make it the primary, and then remove the old node. This is much easier if you're using MongoDB Atlas, MongoDB's managed database service.

mongodump is a lot simpler. You'll need to create a directory for the backups:

sudo mkdir /var/backups/mongobackups

Then run mongodump, passing it the name of the database and an output location:

sudo mongodump --db databasename --out /var/backups/mongobackups/backup
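If you take backups more than once, it can help to wrap these two steps in a small shell function that stamps each dump with the date, so earlier backups aren't overwritten. This is a minimal sketch assuming bash (or another shell that supports `local`); the `backup_db` function, the `BACKUP_ROOT` variable, and the `DRY_RUN` switch (which prints the commands instead of running them) are hypothetical names made up for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: dump one database into a timestamped folder.
# BACKUP_ROOT defaults to the directory created above; override it if needed.
BACKUP_ROOT="${BACKUP_ROOT:-/var/backups/mongobackups}"

# backup_db DBNAME [STAMP]
#   DBNAME: database to dump
#   STAMP:  optional folder suffix (defaults to the current date and time)
# When DRY_RUN is non-empty, ${DRY_RUN:+echo} expands to "echo", so the
# commands are printed rather than executed.
backup_db() {
  local db="$1"
  local stamp="${2:-$(date +%Y%m%d-%H%M%S)}"
  local out="$BACKUP_ROOT/$db-$stamp"

  ${DRY_RUN:+echo} sudo mkdir -p "$out" || return 1
  ${DRY_RUN:+echo} sudo mongodump --db "$db" --out "$out"
}

# Example: backup_db databasename
#   mongodump writes the collections to $BACKUP_ROOT/databasename-<stamp>/databasename/
```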

You can also dump a single collection on its own with the --collection flag.
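The --collection flag takes one collection name per run, so dumping several specific collections means calling mongodump once for each. A minimal sketch, assuming bash; `dump_collections` and the `DRY_RUN` switch are hypothetical names used only for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: dump only the listed collections of one database.
# dump_collections DBNAME OUTDIR COLLECTION [COLLECTION...]
# mongodump runs once per collection; every run writes into the same OUTDIR,
# producing OUTDIR/DBNAME/<collection>.bson plus its metadata file.
dump_collections() {
  local db="$1" out="$2" coll
  shift 2
  for coll in "$@"; do
    # With DRY_RUN set, print the command instead of running it.
    ${DRY_RUN:+echo} sudo mongodump --db "$db" --collection "$coll" --out "$out" || return 1
  done
}

# Example: dump_collections databasename /var/backups/mongobackups/partial users orders
```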

mongodump can be run on a live database, and for a small database it finishes in seconds. However, any writes made after the dump starts may not be in the backup, and they'll be lost once you switch servers. Because of this, you'll want to stop traffic to the database before creating the dump.

Restoring From Backup

You'll need to transfer the backup from the old server to the new server. You could download it over FTP and then upload it to the new server, but for large backups it's best to copy it directly between the two servers using scp.

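You can use the following command, replacing the usernames and hostnames with values for your servers. Because mongodump writes a folder rather than a single file, the -r flag is needed to copy it recursively:

scp -r user@SRC_HOST:/var/backups/mongobackups/backup user@DEST_HOST:~/backup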
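For large backups, it's worth confirming the copy arrived intact before restoring. One way is to record a SHA-256 checksum for every file on the old server and verify that list on the new one. This is a sketch assuming GNU coreutils (`sha256sum`, as found on most Linux distributions); the `make_manifest` and `check_manifest` names are hypothetical.

```shell
#!/usr/bin/env bash
# make_manifest DIR: print "checksum  ./relative/path" for every file under DIR,
# sorted by path so two manifests can also be compared with diff.
make_manifest() {
  (cd "$1" && find . -type f -exec sha256sum {} + | LC_ALL=C sort -k 2)
}

# check_manifest DIR MANIFEST: re-hash the files under DIR and compare them with
# MANIFEST. Returns non-zero if any file is missing or has a different checksum.
check_manifest() {
  # The redirection is opened before the subshell changes directory,
  # so MANIFEST may be a relative path.
  (cd "$1" && sha256sum --quiet -c -) < "$2"
}

# On the old server:
#   make_manifest /var/backups/mongobackups/backup > backup.sha256
# Copy backup.sha256 along with the backup, then on the new server:
#   check_manifest ~/backup backup.sha256 && echo "backup verified"
```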

Once the backup is on the new server, you can restore from it. You'll of course need MongoDB installed on the new server, along with the MongoDB Database Tools, which include mongorestore.

To do so, you can use the mongorestore command:

mongorestore <options> <connection-string> <directory or file to restore>
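As a concrete version of that syntax, here is a sketch that restores one database from the mongodump folder you copied over. It assumes MongoDB is listening on the default localhost:27017 (so no connection string is passed) and uses two mongorestore options: `--nsInclude` to limit the restore to a single database, and `--drop` to drop existing collections with the same names before restoring. The `restore_db` name and `DRY_RUN` switch are hypothetical.

```shell
#!/usr/bin/env bash
# restore_db DBNAME DUMPDIR
#   DBNAME:  database to restore (e.g. databasename)
#   DUMPDIR: the folder that was passed to mongodump --out (it contains DBNAME/)
# --nsInclude="DBNAME.*" restores every collection in that database only;
# --drop removes matching collections first, so re-running gives a clean copy.
restore_db() {
  ${DRY_RUN:+echo} mongorestore --nsInclude="$1.*" --drop "$2"
}

# Example: restore_db databasename ~/backup
```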

The restored collections should show up in the new database right away.

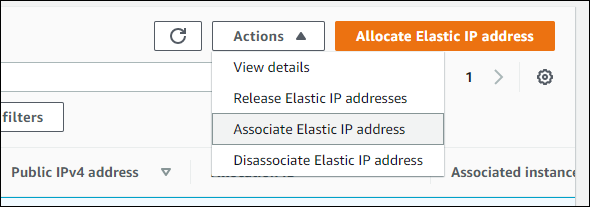
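Beyond checking that the collections exist, you can compare document counts between the old and new servers. This sketch assumes mongosh (the MongoDB Shell) is installed and both servers are reachable; the connection strings and the `collection_counts` and `compare_counts` helpers are placeholders for illustration. Counts will only match if no writes happened after the dump.

```shell
#!/usr/bin/env bash
# collection_counts URI: print "collection count" for each collection in the
# database named in URI, e.g. mongodb://OLD_HOST:27017/databasename (placeholder).
collection_counts() {
  mongosh "$1" --quiet --eval '
    db.getCollectionNames().forEach(function (c) {
      print(c + " " + db.getCollection(c).countDocuments({}));
    });' | LC_ALL=C sort
}

# compare_counts OLD_FILE NEW_FILE: show any differences; non-zero exit if they differ.
compare_counts() {
  if diff -u "$1" "$2"; then
    echo "counts match"
  else
    echo "counts differ" >&2
    return 1
  fi
}

# Usage:
#   collection_counts mongodb://OLD_HOST:27017/databasename > old-counts.txt
#   collection_counts mongodb://NEW_HOST:27017/databasename > new-counts.txt
#   compare_counts old-counts.txt new-counts.txt
```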

After verifying that everything transferred over properly, you'll need to switch traffic to the new server, usually by updating your DNS records. If you're on AWS, or a similar provider with Elastic IP addresses, you can instead reassign the address to the new server, which doesn't require a DNS update.
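On AWS, moving an Elastic IP can be done from the console or with the AWS CLI's `ec2 associate-address` command. A minimal sketch, assuming the AWS CLI is installed and configured with permission to manage addresses; the allocation and instance IDs are placeholders, and `move_elastic_ip` and `DRY_RUN` are hypothetical names.

```shell
#!/usr/bin/env bash
# move_elastic_ip ALLOCATION_ID INSTANCE_ID
#   ALLOCATION_ID: the Elastic IP's allocation ID (eipalloc-...)
#   INSTANCE_ID:   the new server's instance ID (i-...)
# --allow-reassociation explicitly allows moving an address that is
# already associated with another instance (here, the old server).
move_elastic_ip() {
  ${DRY_RUN:+echo} aws ec2 associate-address \
    --allocation-id "$1" \
    --instance-id "$2" \
    --allow-reassociation
}

# Example (placeholder IDs): move_elastic_ip eipalloc-0123456789abcdef0 i-0123456789abcdef0
```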

Transferring The Entire Disk (Optional)

If you're simply moving to a more powerful server, you can instead transfer the entire boot drive, which copies the database along with the rest of the server's configuration.

In this case, use the rsync command to push the data directly to the target server. rsync connects over SSH and synchronizes the two folders; here, we push the local root folder (/) to the remote server:

sudo rsync -azAP / --exclude={"/dev/*","/proc/*","/sys/*","/tmp/*","/run/*","/mnt/*","/media/*","/lost+found"} username@remote_host:/

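That's the whole command. The -P flag shows a progress bar as the transfer runs (and keeps partially transferred files if the connection drops), while -z compresses the data in transit. When it's done, you'll see the files in place on the new server. Because rsync only sends what has changed, later runs are much faster; stop MongoDB before the final run so its data files are copied in a consistent state. You may have to run this multiple times to copy each folder; an online rsync command generator can help you build the command for each run.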
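If you'd rather not rely on bash brace expansion, or want to preview the transfer first, the same command can be wrapped in a function with the excludes written out. A sketch, assuming SSH access to the new server; `sync_root` and `DRY_RUN` (which only prints the command) are hypothetical names, while `--dry-run` is rsync's own flag for listing changes without copying anything. The suggested final pass assumes MongoDB runs as a systemd service named `mongod`, as it does with MongoDB's official packages.

```shell
#!/usr/bin/env bash
# sync_root DEST [EXTRA_RSYNC_OPTIONS...]
#   DEST: user@host of the new server; / is pushed to DEST:/
# Same flags and excludes as the command above; any extra options
# (for example --dry-run) are passed straight through to rsync.
sync_root() {
  local dest="$1"
  shift
  ${DRY_RUN:+echo} sudo rsync -azAP \
    --exclude='/dev/*' --exclude='/proc/*' --exclude='/sys/*' \
    --exclude='/tmp/*' --exclude='/run/*' --exclude='/mnt/*' \
    --exclude='/media/*' --exclude='/lost+found' \
    "$@" / "$dest:/"
}

# Suggested sequence (username@remote_host is a placeholder):
#   sync_root username@remote_host --dry-run   # list what would be copied
#   sync_root username@remote_host             # bulk copy while MongoDB still runs
#   sudo systemctl stop mongod                 # stop writes to the data files
#   sync_root username@remote_host             # final pass copies only the changes
```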