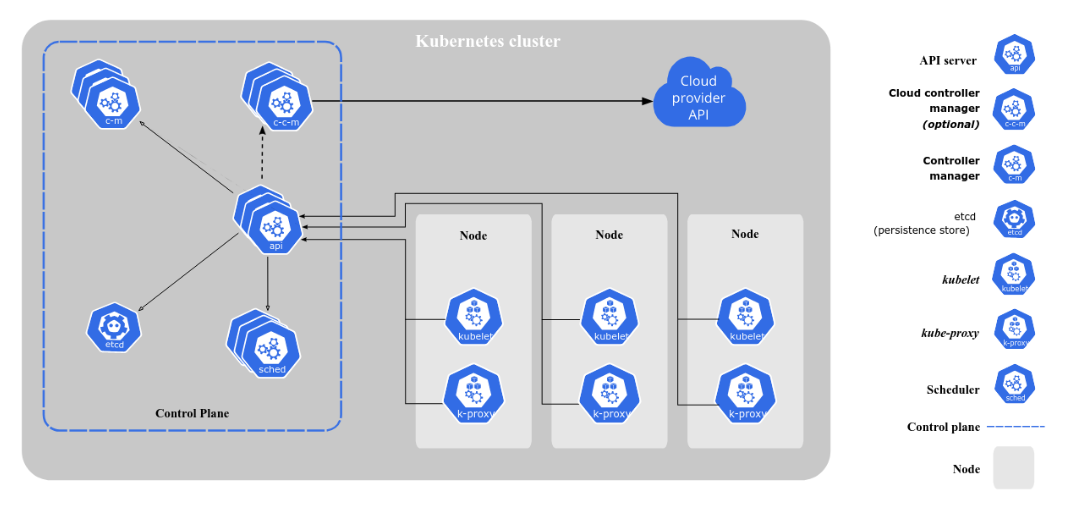

Kubernetes is a container orchestration platform that automates the deployment and scaling of containerized workloads. It has a reputation for being complex and unwieldy, but its architecture is easier to follow once you see how the individual components combine to form a cluster.

Defining the Cluster

A single Kubernetes installation is termed a "cluster." Within the cluster, there are one or more Nodes available to run your containers. A Node represents a machine, either physical or virtual, that has been joined to the cluster.

Kubernetes also has a Control Plane, which operates independently of the worker Nodes. The Control Plane is what you interact with: it exposes the Kubernetes API and is responsible for managing the worker Nodes. You don't usually manipulate the Nodes and their workloads directly.

Instructing Kubernetes to create a workload starts with an API call to the Control Plane. The Control Plane then determines the Node(s) that your containers should be scheduled to. No matter how many Nodes you have, each cluster has only one logical Control Plane.
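To make that request flow concrete, here is a minimal sketch in Python. The Pod manifest uses real Pod fields (`apiVersion`, `kind`, `metadata`, `spec.containers`, `spec.nodeName`), but the Pod name, image tag, and the `ToyApiServer` class are hypothetical stand-ins rather than a real client library. The point it illustrates is that the API call only records desired state; no Node has been chosen at that stage.

```python
import copy

# A minimal Pod manifest as a Python dict. The field names are real Pod
# fields; the Pod name, image and resource figures are illustrative.
pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "nginx:1.25",
                "resources": {"requests": {"cpu": "250m", "memory": "128Mi"}},
            }
        ]
    },
}


class ToyApiServer:
    """Hypothetical in-memory stand-in for the API server's object store."""

    def __init__(self):
        self.pods = {}

    def create_pod(self, manifest):
        # Store a copy of the desired state. spec.nodeName stays empty
        # because no Node has been chosen yet.
        pod = copy.deepcopy(manifest)
        pod["spec"].setdefault("nodeName", None)
        self.pods[pod["metadata"]["name"]] = pod
        return pod

    def unscheduled_pods(self):
        # Pods without a Node: the work waiting for the scheduler.
        return [name for name, pod in self.pods.items() if pod["spec"]["nodeName"] is None]


api = ToyApiServer()
api.create_pod(pod_manifest)
print(api.unscheduled_pods())  # ['web']
```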

Role of the Control Plane

More broadly, the Control Plane is responsible for the global management of your cluster. Any operation that could affect multiple Nodes or the cluster infrastructure will be managed by the Control Plane.

The Control Plane consists of several independent components. Together, they're responsible for managing cluster configuration, running and scaling workloads, and reacting to events within the cluster (such as a Node running out of memory).

The core of the Control Plane is kube-apiserver. This component provides the Kubernetes HTTP API that you consume through tools like kubectl and Helm. The API is how you interact with your cluster. It's also used by other cluster components, such as the worker processes on each Node, to relay information back to the Control Plane.

Resources in your cluster, such as Pods, Services, and Jobs, are managed by individual "controllers." Controllers monitor their resources for health and readiness. They also detect requested changes and then take steps to move the current state toward the newly desired state.
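This "compare, then act" behavior is often called a reconciliation loop. The sketch below is a hypothetical, single-process Python model of a controller that keeps a fixed number of Pod replicas running. Real controllers watch the API server and make changes through it rather than keeping state in a local list.

```python
import itertools
from dataclasses import dataclass, field


@dataclass
class ToyReplicaController:
    """Hypothetical controller that keeps `desired` Pods running."""

    desired: int
    running: list = field(default_factory=list)
    _ids: itertools.count = field(default_factory=itertools.count)

    def reconcile(self):
        """Compare the current state with the desired state and correct it."""
        actions = []
        while len(self.running) < self.desired:
            name = f"pod-{next(self._ids)}"
            self.running.append(name)
            actions.append(("create", name))
        while len(self.running) > self.desired:
            actions.append(("delete", self.running.pop()))
        return actions


ctrl = ToyReplicaController(desired=3)
print(ctrl.reconcile())  # [('create', 'pod-0'), ('create', 'pod-1'), ('create', 'pod-2')]

ctrl.desired = 1  # a change requested through the API
print(ctrl.reconcile())  # [('delete', 'pod-2'), ('delete', 'pod-1')]

ctrl.running.remove("pod-0")  # simulate a Pod disappearing
print(ctrl.reconcile())  # [('create', 'pod-3')]
```

Running `reconcile()` again after convergence returns an empty list, which is what lets a controller loop indefinitely without causing churn.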

Controllers are managed in aggregate by kube-controller-manager. This Control Plane component starts and runs the individual controllers, so it needs to be running at all times. If it stopped, changes made through the API server would be stored but never acted on, and the cluster's actual state would stop converging on the desired state.
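A rough way to picture the controller manager's job is a process that owns many controllers and keeps giving each one a chance to reconcile. This Python sketch is hypothetical: the real kube-controller-manager runs its controllers concurrently and reacts to watch events, whereas this model uses a simple `tick()` method. It also shows what happens when the manager stops: the requested change is accepted but never applied.

```python
from typing import Callable, Dict, List


class ToyControllerManager:
    """Hypothetical stand-in for kube-controller-manager."""

    def __init__(self):
        self.controllers: Dict[str, Callable[[], List[str]]] = {}
        self.running = True

    def register(self, name: str, reconcile: Callable[[], List[str]]) -> None:
        self.controllers[name] = reconcile

    def tick(self) -> Dict[str, List[str]]:
        # Give every registered controller one reconciliation pass.
        if not self.running:
            return {}  # stopped: nothing reconciles, so nothing changes
        return {name: reconcile() for name, reconcile in self.controllers.items()}


# Desired and actual replica counts for two hypothetical workloads.
desired = {"web": 2, "batch": 1}
actual = {"web": 0, "batch": 0}


def make_reconciler(key: str) -> Callable[[], List[str]]:
    def reconcile() -> List[str]:
        diff = desired[key] - actual[key]
        actual[key] = desired[key]
        return [f"{key}: adjusted by {diff}"] if diff else []
    return reconcile


mgr = ToyControllerManager()
for key in desired:
    mgr.register(key, make_reconciler(key))

print(mgr.tick())  # {'web': ['web: adjusted by 2'], 'batch': ['batch: adjusted by 1']}
print(mgr.tick())  # {'web': [], 'batch': []}

mgr.running = False   # the manager stops
desired["web"] = 5    # the API still accepts the change...
print(mgr.tick())     # {}
print(actual["web"])  # 2 (...but nothing acts on it)
```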

Another critical Control Plane component is kube-scheduler. The scheduler is responsible for assigning Pods to Nodes. Scheduling usually involves weighing several parameters, such as the resources already requested on each Node and any constraints you've set in your manifest (for example, resource requests or node selectors).

The scheduler assesses each Node's suitability and then binds the Pod to the most appropriate Node. It doesn't move Pods that are already running, though. If a Node becomes unavailable or more replicas are requested, the relevant controller creates new Pods, and the scheduler then assigns those to suitable Nodes.
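The real kube-scheduler splits this work into a filtering phase (discard Nodes that can't run the Pod) and a scoring phase (rank the Nodes that remain). Here is a heavily simplified Python sketch of that idea. The `Node` and `PodRequest` classes, the free-capacity figures, and the scoring rule (most CPU left, then most memory left) are assumptions for illustration; the real scheduler combines many scoring plugins.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    cpu_free_m: int   # free CPU in millicores
    mem_free_mi: int  # free memory in MiB
    labels: Dict[str, str] = field(default_factory=dict)


@dataclass
class PodRequest:
    name: str
    cpu_m: int
    mem_mi: int
    node_selector: Dict[str, str] = field(default_factory=dict)


def feasible(node: Node, pod: PodRequest) -> bool:
    """Filter: the Node must have room for the Pod and match its nodeSelector."""
    fits = node.cpu_free_m >= pod.cpu_m and node.mem_free_mi >= pod.mem_mi
    labels_ok = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return fits and labels_ok


def score(node: Node, pod: PodRequest):
    """Score: prefer the most CPU left after placement, then the most memory."""
    return (node.cpu_free_m - pod.cpu_m, node.mem_free_mi - pod.mem_mi)


def schedule(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    candidates = [n for n in nodes if feasible(n, pod)]
    if not candidates:
        return None  # no suitable Node: the Pod stays Pending
    return max(candidates, key=lambda n: score(n, pod)).name


nodes = [
    Node("node-a", cpu_free_m=500, mem_free_mi=512, labels={"disk": "ssd"}),
    Node("node-b", cpu_free_m=2000, mem_free_mi=4096, labels={"disk": "hdd"}),
    Node("node-c", cpu_free_m=1500, mem_free_mi=2048, labels={"disk": "ssd"}),
]
print(schedule(PodRequest("web", 250, 128), nodes))                  # node-b
print(schedule(PodRequest("db", 250, 128, {"disk": "ssd"}), nodes))  # node-c
print(schedule(PodRequest("huge", 4000, 128), nodes))                # None
```

Scoring with a tuple avoids adding millicores to mebibytes, which would mix units.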

In small clusters, the entire Control Plane often runs on a single Node. It can also be replicated across multiple Nodes, which is the usual way to make it highly available.

If the Control Plane goes offline, you can no longer manage your cluster, because the API and scheduling functions are unavailable. Pods that are already running on worker Nodes keep running, though, and the agent on each Node periodically attempts to reconnect to the Control Plane.
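The Node side of that reconnection behavior can be sketched as a retry loop. This Python version is hypothetical: `connect` stands in for whatever call reaches the API server, and the exponential backoff (1 second, doubling up to a 30-second cap) is an arbitrary illustrative policy, not kubelet's actual retry timing. Passing `sleep` in as a parameter keeps the example testable without real waits.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def reconnect_with_backoff(
    connect: Callable[[], T],
    base: float = 1.0,
    cap: float = 30.0,
    max_attempts: int = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[T, int]:
    """Retry `connect` until it succeeds, doubling the wait between
    attempts up to `cap` seconds. Returns (result, attempt_number)."""
    delay = base
    for attempt in range(1, max_attempts + 1):
        try:
            return connect(), attempt
        except ConnectionError:
            if attempt == max_attempts:
                raise  # give up after the final attempt
            sleep(delay)
            delay = min(delay * 2, cap)
    raise ValueError("max_attempts must be at least 1")


# Usage: a fake connection that fails three times, then succeeds.
failures = iter([True, True, True, False])


def fake_connect():
    if next(failures):
        raise ConnectionError("control plane unreachable")
    return "connected"


waits = []
result = reconnect_with_backoff(fake_connect, sleep=waits.append)
print(result, waits)  # ('connected', 4) [1.0, 2.0, 4.0]
```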

Communication Between Nodes and the Control Plane

Kubernetes maintains a two-way communication channel between Nodes and the Control Plane.

Communication is necessary so that the Control Plane can instruct Nodes to create new containers. In the opposite direction, Nodes need to feed data about their availability (such as resource usage statistics) back to the Control Plane. This ensures that Kubernetes can make informed decisions when scheduling containers.

All worker Nodes run an instance of kubelet. This is an agent utility responsible for maintaining communication with the Kubernetes Control Plane. Kubelet also continually monitors the containers that the Node is running. It'll notify the Control Plane if a container drops into an unhealthy state.
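One way kubelet checks container health is through probes defined in the Pod spec, such as liveness probes. A container is only treated as failed after several consecutive probe failures; the real `failureThreshold` probe field defaults to 3. The Python class below is a hypothetical model of just that counting logic. Real kubelet also restarts containers whose liveness probe fails and reports their status through the API server.

```python
from dataclasses import dataclass


@dataclass
class ProbeTracker:
    """Hypothetical model of consecutive-failure counting for one container."""

    failure_threshold: int = 3  # same default as the real failureThreshold field
    consecutive_failures: int = 0
    healthy: bool = True

    def record(self, probe_ok: bool) -> bool:
        """Record one probe result; return True only when the container
        first crosses into the unhealthy state."""
        if probe_ok:
            self.consecutive_failures = 0
            self.healthy = True
            return False
        self.consecutive_failures += 1
        if self.healthy and self.consecutive_failures >= self.failure_threshold:
            self.healthy = False
            return True  # the point where kubelet would act and report
        return False


tracker = ProbeTracker()
results = [True, False, False, True, False, False, False, False]
print([i for i, ok in enumerate(results) if tracker.record(ok)])  # [6]
```

The success at index 3 resets the counter, so the two earlier failures never trip the threshold.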

When a Node needs to send data to the Control Plane, kubelet connects to the Control Plane's API server. This uses the same HTTPS interface that you connect to through tools like kubectl. Kubelet is preconfigured with credentials that allow it to authenticate to the API server.
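Part of what kubelet sends is a regular heartbeat. In current Kubernetes versions, kubelet does this by renewing a small Lease object (API group `coordination.k8s.io`) for its Node, alongside its Node status updates. The Python sketch below models only the idea. The class, the function names, and the 40-second grace period are hypothetical, and real Leases carry more fields than the two shown here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeLease:
    """Simplified heartbeat record, loosely modeled on a Lease's
    holderIdentity and renewTime fields."""

    holder: str
    renew_time: float  # seconds on an arbitrary clock


def renew(lease: NodeLease, now: float) -> None:
    # Kubelet side: refresh the heartbeat through the API server.
    lease.renew_time = now


def stale_nodes(leases: List[NodeLease], now: float, grace_period: float) -> List[str]:
    # Control Plane side: Nodes whose heartbeat is older than the grace period.
    return sorted(l.holder for l in leases if now - l.renew_time > grace_period)


leases = [NodeLease("node-a", 0.0), NodeLease("node-b", 0.0)]
renew(leases[0], now=35.0)  # node-a checks in; node-b stays silent
print(stale_nodes(leases, now=45.0, grace_period=40.0))  # ['node-b']
```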

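Traffic in the other direction also involves kubelet, but new work isn't pushed to it. Instead, kubelet watches the API server for Pods that have been assigned to its Node, then starts, stops, or adjusts containers to match their specs. Kubelet does expose its own HTTPS endpoint, which the API server uses for operations such as fetching container logs, running commands inside containers, and port forwarding.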
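Kubelet identifies its own work by the Pod's `spec.nodeName` field, which is set once the scheduler has chosen a Node. The Python sketch below models two steps: select the Pods that name this Node, then work out which Pods to start or stop so the Node matches that list. The function names and the plain-dict Pod representation are hypothetical simplifications; real kubelet compares full container specs, not just Pod names.

```python
from typing import Dict, List, Set, Tuple


def pods_for_node(pods: List[dict], node_name: str) -> Dict[str, dict]:
    """Keep only the Pods whose spec.nodeName matches this Node."""
    return {
        p["metadata"]["name"]: p
        for p in pods
        if p.get("spec", {}).get("nodeName") == node_name
    }


def sync(assigned: Dict[str, dict], running: Set[str]) -> Tuple[List[str], List[str]]:
    """Return (Pods to start, Pods to stop) so `running` matches `assigned`."""
    wanted = set(assigned)
    return sorted(wanted - running), sorted(running - wanted)


pods = [
    {"metadata": {"name": "web-1"}, "spec": {"nodeName": "node-a"}},
    {"metadata": {"name": "web-2"}, "spec": {"nodeName": "node-b"}},
    {"metadata": {"name": "cache"}, "spec": {"nodeName": "node-a"}},
    {"metadata": {"name": "pending"}, "spec": {}},  # not scheduled yet
]
mine = pods_for_node(pods, "node-a")
print(sync(mine, running={"old-job", "web-1"}))  # (['cache'], ['old-job'])
```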

What Else Do Nodes Run?

Kubelet isn't the only binary that a Kubernetes Node must run. You'll also find an instance of kube-proxy on each Node. This is responsible for configuring the Node's networking system to meet the requirements of your container workloads.

Kubernetes has the concept of "Services," which expose multiple Pods under a single network identity. kube-proxy converts Service definitions into the networking rules that provide the access you requested.

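kube-proxy configures the operating system's networking infrastructure to expose the Services you've defined through the API. Depending on its mode, traffic forwarding is handled either by rules that kube-proxy programs into the kernel (for example, iptables or IPVS) or, in the older userspace mode, by kube-proxy itself.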
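To illustrate the translation kube-proxy performs, here is a hypothetical in-process Python model. It turns Service definitions (using the real `clusterIP`, `port`, and `targetPort` field names) plus a list of backend Pod IPs into a lookup table, then spreads connections round-robin. Real kube-proxy normally writes equivalent rules into the kernel instead of forwarding traffic itself, and how it picks a backend depends on the mode (in iptables mode the choice is random rather than round-robin). The IP addresses below are made up.

```python
import itertools
from typing import Dict, List, Optional, Tuple


def build_rules(services: List[dict], endpoints: Dict[str, List[str]]) -> Dict[Tuple[str, int], List[str]]:
    """Map (cluster IP, port) to the list of backend "ip:targetPort" strings."""
    rules = {}
    for svc in services:
        backends = [f"{ip}:{svc['targetPort']}" for ip in endpoints.get(svc["name"], [])]
        rules[(svc["clusterIP"], svc["port"])] = backends
    return rules


class ToyProxy:
    """Hypothetical forwarder that spreads connections round-robin."""

    def __init__(self, rules: Dict[Tuple[str, int], List[str]]):
        # Only Services with at least one backend get a rotation.
        self._cycles = {key: itertools.cycle(b) for key, b in rules.items() if b}

    def pick_backend(self, ip: str, port: int) -> Optional[str]:
        cycle = self._cycles.get((ip, port))
        return next(cycle) if cycle else None


services = [{"name": "web", "clusterIP": "10.96.0.10", "port": 80, "targetPort": 8080}]
endpoints = {"web": ["10.244.1.5", "10.244.2.7"]}
proxy = ToyProxy(build_rules(services, endpoints))
print([proxy.pick_backend("10.96.0.10", 80) for _ in range(3)])
# ['10.244.1.5:8080', '10.244.2.7:8080', '10.244.1.5:8080']
```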

Besides kubelet and kube-proxy, Nodes also need to have a container runtime available. The container runtime is responsible for pulling images and actually running your containers. Kubernetes supports any runtime implementing its Container Runtime Interface specification. Examples include containerd and CRI-O.
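Because kubelet talks to the runtime through an interface, any conforming runtime can be swapped in. The real Container Runtime Interface (CRI) is a gRPC API made up of an image service (with calls such as `PullImage`) and a runtime service (with calls such as `RunPodSandbox`, `CreateContainer`, and `StartContainer`). The Python sketch below is a hypothetical, much-reduced analogue: `ContainerRuntime`, `FakeRuntime`, and `run_pod_container` are illustrative names, not part of any real library.

```python
from abc import ABC, abstractmethod


class ContainerRuntime(ABC):
    """Hypothetical, simplified analogue of the CRI contract."""

    @abstractmethod
    def pull_image(self, image: str) -> None: ...

    @abstractmethod
    def start_container(self, name: str, image: str) -> str: ...


class FakeRuntime(ContainerRuntime):
    """In-memory runtime used only to exercise the interface."""

    def __init__(self):
        self.images = set()
        self.containers = {}

    def pull_image(self, image: str) -> None:
        self.images.add(image)

    def start_container(self, name: str, image: str) -> str:
        if image not in self.images:
            raise RuntimeError(f"image {image} not pulled")
        container_id = f"ctr-{len(self.containers)}"
        self.containers[container_id] = (name, image)
        return container_id


def run_pod_container(runtime: ContainerRuntime, name: str, image: str) -> str:
    # Kubelet-side logic depends only on the interface, not on the runtime behind it.
    runtime.pull_image(image)
    return runtime.start_container(name, image)


rt = FakeRuntime()
print(run_pod_container(rt, "web", "nginx:1.25"))  # ctr-0
```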

Conclusion

Kubernetes involves a lot of terminology. Breaking clusters down into their constituent parts can help you appreciate how the individual components interlink.

The Control Plane sits above all the Nodes and is responsible for managing the cluster's operations. The Nodes are best viewed as equals directly below the Control Plane. There is continuous back-and-forth communication between the Nodes and the Control Plane. You, as a user, only interact with the Control Plane via the API server.

The Control Plane is therefore the hub of your cluster. You don't usually engage with Nodes directly. Instead, you send instructions to the Control Plane, which works out where to run the workloads that fulfill your request. A workload only starts running on a Node once the scheduler has assigned its Pods to that Node and the Node's kubelet has picked them up from the API server.

When kubelet picks up a new Pod, it uses the Node's container runtime to pull the appropriate image and start the containers. kube-proxy keeps each Node's networking configuration in sync with your Services, making your workload accessible. Kubelet also relays data about the Node's health back to the Control Plane, enabling Kubernetes to replace Pods elsewhere if a Node runs short of resources or becomes unavailable.