Elastic Block Store (EBS) is a block storage service offered by AWS. If you're running an EC2 instance, you're almost certainly using it, as it serves as the storage disk for your server. However, it isn't immune to failure, and you should still take regular backups.

"Fault-Tolerant" Does Not Mean Safe

Of course, EBS is fairly fault tolerant on the backend. AWS aren't a bunch of savages running a JBOD array; they've planned for single drive failures, so a single bad drive isn't going to take down your server.

However, EBS failures can and do happen, as EBS volumes have an annual failure rate (AFR) of between 0.1% and 0.2%. That's very low compared to a single hard drive's ~4%, but it's not nothing. Any one EBS volume is unlikely to just fail on you, but if you're running tons of them, the odds that at least one fails in a given year add up quickly.
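To put the AFR range in perspective, here is a rough back-of-the-envelope sketch. It assumes volume failures are independent of one another, which is a simplification (a datacenter-level event would hit many volumes at once), and the function name is just for illustration.

```python
def prob_at_least_one_failure(volumes: int, afr: float) -> float:
    """Chance that at least one of `volumes` volumes fails within a year.

    Assumes failures are independent, which is a simplification.
    """
    return 1.0 - (1.0 - afr) ** volumes


# Compare fleet sizes at both ends of the 0.1%-0.2% AFR range.
for n in (1, 100, 1000):
    low = prob_at_least_one_failure(n, 0.001)
    high = prob_at_least_one_failure(n, 0.002)
    print(f"{n:>5} volumes: {low:.1%} to {high:.1%}")
```

Under that assumption, a single volume is very unlikely to fail in a given year, while a fleet of 1,000 volumes has better than even odds of seeing at least one failure.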

The easy fix, of course, is doing backups. EBS provides a great tool for this: the snapshot feature. A snapshot is a point-in-time backup of a volume that's stored in S3, which is much more durable. In the event of an EBS failure, you can restore the volume from the snapshot. You don't have to automate this yourself, as Amazon Data Lifecycle Manager can handle it for you, but it isn't enabled by default. You will need to pay the additional storage costs for the snapshot data, but snapshot storage is cheaper per gigabyte than EBS volume storage.
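For a one-off backup outside of any schedule, a snapshot can also be requested from code. The sketch below uses boto3, the AWS SDK for Python (a third-party package, with credentials and a region assumed to be configured already). The helper names, the example tag, and the volume ID are placeholders rather than anything AWS prescribes.

```python
def snapshot_request(volume_id: str, description: str) -> dict:
    """Build the arguments for an EC2 CreateSnapshot call."""
    return {
        "VolumeId": volume_id,
        "Description": description,
        # Tagging the snapshot makes it easier to find and clean up later.
        # The tag key and value here are illustrative placeholders.
        "TagSpecifications": [
            {
                "ResourceType": "snapshot",
                "Tags": [{"Key": "CreatedBy", "Value": "manual-backup"}],
            }
        ],
    }


def create_snapshot(volume_id: str, description: str) -> str:
    """Start a snapshot of the given volume and return the new snapshot ID."""
    import boto3  # imported lazily so snapshot_request() works without the SDK

    ec2 = boto3.client("ec2")
    response = ec2.create_snapshot(**snapshot_request(volume_id, description))
    return response["SnapshotId"]
```

Calling `create_snapshot("vol-0123456789abcdef0", "Pre-upgrade backup")` with a real volume ID would start the snapshot; it completes in the background.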

AWS doesn't try to hide this fact, and recommends doing regular snapshot backups. Most people will also recommend doing backups in general, but it's easy to get caught up in the magic of the cloud and forget this fact. At the end of the day, it's just someone else's computer, and can fail like any other. An extreme example came at the end of August 2019, when an AWS US-EAST-1 datacenter suffered a power outage and its backup generators failed, taking EBS servers offline and leaving some volumes unrecoverable.

The primary driver behind high-availability architecture, and cloud computing in general, is making sure that when isolated failures inevitably happen, they don't take down the whole application. You should still take steps to prevent failures in the first place, but sometimes, like with hard drives, it's a hardware problem, not something you can fix with code.

S3, on the other hand, is extremely durable, with 99.999999999% durability (that's eleven nines). If you store 10,000,000 objects in S3, you can on average expect to lose a single object once every 10,000 years. This is because, unlike an EBS volume, which is replicated only within a single availability zone, S3 data is replicated across a minimum of three availability zones and constantly monitored for drive failures within each zone. Even if an entire datacenter goes up in flames, your S3 buckets, and the snapshots stored in S3, should still be safe.
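The 10,000-year figure follows directly from the durability number. As a quick check, treating eleven nines as an annual per-object loss probability of 1 in 10^11 (the function name is just for illustration):

```python
from fractions import Fraction

# Eleven nines of durability leaves a 1-in-10^11 chance of losing
# any given object in a year. Fractions keep the arithmetic exact.
ANNUAL_LOSS_PROBABILITY = Fraction(1, 10**11)


def years_per_expected_loss(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    expected_losses_per_year = object_count * ANNUAL_LOSS_PROBABILITY
    return 1 / expected_losses_per_year


print(years_per_expected_loss(10_000_000))  # prints 10000
```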

How Do EBS Snapshots Work?

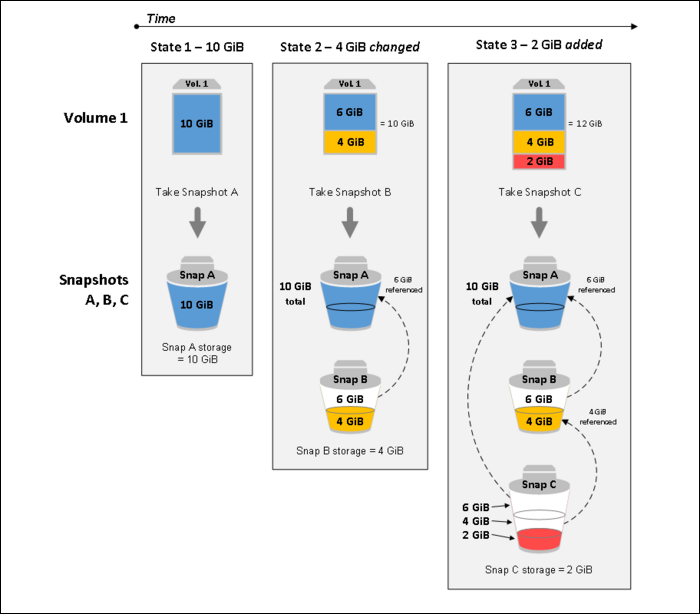

EBS snapshots are incremental backups. Each subsequent backup will only store the data that has changed, so you won't rack up crazy storage costs doing regular snapshots.
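The idea can be illustrated with a toy model. This is only a sketch of the concept, not how EBS is implemented internally: the volume is represented as a dictionary of numbered blocks, and both function names are made up for the example.

```python
def take_snapshot(volume: dict, previous_state: dict) -> dict:
    """Store only the blocks that differ from what earlier snapshots captured."""
    return {
        index: data
        for index, data in volume.items()
        if previous_state.get(index) != data
    }


def restore(snapshots: list) -> dict:
    """Rebuild the volume by layering snapshots from oldest to newest."""
    state = {}
    for snapshot in snapshots:
        state.update(snapshot)
    return state


volume = {0: b"boot", 1: b"logs-v1", 2: b"data"}

# The first snapshot has nothing to build on, so it stores every block.
snapshots = [take_snapshot(volume, {})]

# Only one block changes before the second snapshot...
volume[1] = b"logs-v2"
snapshots.append(take_snapshot(volume, restore(snapshots)))

# ...so the second snapshot stores just that block.
print([len(s) for s in snapshots])  # prints [3, 1]
assert restore(snapshots) == volume
```

The first snapshot is a full copy, and every later one costs only as much as the data that changed since the one before it.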

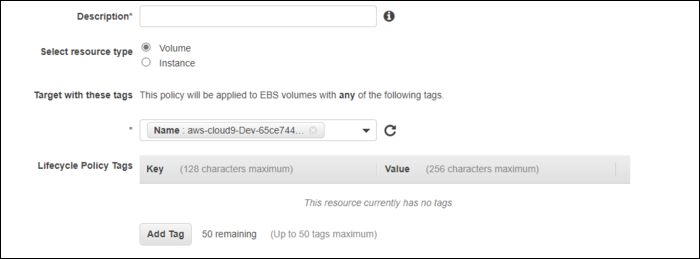

Turning on automated snapshots is fairly simple. From the EC2 Console, head over to Elastic Block Store > Lifecycle Manager in the sidebar, and create a new policy.

You'll need to specify a tag for this policy to apply to; every volume carrying that tag will be snapshotted. This can be the Name tag of a single EBS volume, or a blanket tag that you apply to everything you want backed up.

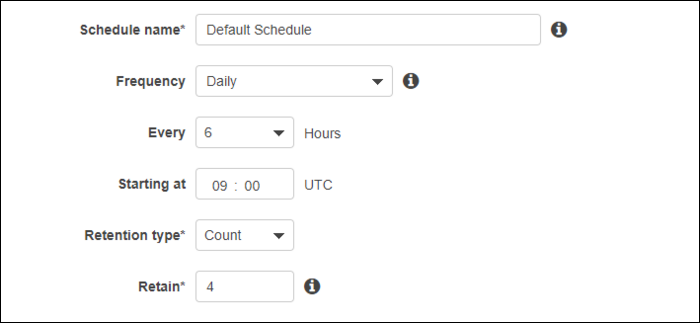

You can set the schedule for this policy as well as the rule for snapshot retention. You don't usually need to keep backups for an extended period, so retaining a handful of them, depending on the snapshot frequency, should be fine.
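The same policy can be created from code instead of the console. Here is a minimal sketch using boto3's Data Lifecycle Manager client (boto3 is a third-party package, with credentials assumed to be configured). The helper names, the schedule name, the default start time, and the retention count of 7 are illustrative choices, and the IAM role ARN has to be supplied by you.

```python
def build_policy_details(
    tag_key: str,
    tag_value: str,
    interval_hours: int = 24,
    start_time: str = "03:00",
    retain_count: int = 7,
) -> dict:
    """Describe which volumes to snapshot, how often, and how many to keep."""
    return {
        "ResourceTypes": ["VOLUME"],
        # Every volume carrying this tag is covered by the policy.
        "TargetTags": [{"Key": tag_key, "Value": tag_value}],
        "Schedules": [
            {
                "Name": "daily-snapshots",  # illustrative schedule name
                "CreateRule": {
                    "Interval": interval_hours,
                    "IntervalUnit": "HOURS",
                    "Times": [start_time],  # UTC, in hh:mm format
                },
                # Once this many snapshots exist, the oldest is deleted.
                "RetainRule": {"Count": retain_count},
                "CopyTags": True,
            }
        ],
    }


def create_policy(execution_role_arn: str, policy_details: dict) -> str:
    """Create the lifecycle policy and return its ID."""
    import boto3  # imported lazily so build_policy_details() works without the SDK

    dlm = boto3.client("dlm")
    response = dlm.create_lifecycle_policy(
        ExecutionRoleArn=execution_role_arn,
        Description="Automated EBS snapshots",
        State="ENABLED",
        PolicyDetails=policy_details,
    )
    return response["PolicyId"]
```

For example, `build_policy_details("Backup", "true")` targets every volume tagged `Backup=true`, snapshots it daily, and keeps the seven most recent snapshots.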

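If you're serious about high availability, you can also enable Fast Snapshot Restore. Normally, a volume restored from a snapshot is usable right away, but its blocks are pulled down from S3 lazily, so the first read of each block is slow. Fast Snapshot Restore removes that warm-up period, so restored volumes deliver their full performance immediately. However, it's billed by the hour for each snapshot in each availability zone where it's enabled, so it's pretty expensive, and isn't something everyone should enable.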
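To get a feel for the bill before enabling it, the per-snapshot, per-zone, per-hour charges can be multiplied out. The sketch below is only an estimate: the function name is made up, the 730-hour month is an approximation, and the hourly price used in the example is an assumed figure, so check current AWS pricing for your region.

```python
HOURS_PER_MONTH = 730  # approximate average month


def fsr_monthly_cost(
    snapshots: int,
    availability_zones: int,
    price_per_hour: float,
) -> float:
    """Estimate a month of Fast Snapshot Restore charges.

    Each enabled snapshot is billed separately in each availability zone.
    """
    return snapshots * availability_zones * HOURS_PER_MONTH * price_per_hour


# One snapshot enabled in two zones, at an ASSUMED price of $0.75 per hour.
cost = fsr_monthly_cost(snapshots=1, availability_zones=2, price_per_hour=0.75)
print(f"${cost:,.2f} per month")
```

At that assumed price, keeping a single snapshot enabled in two zones comes to roughly $1,095 a month, which is why it's worth enabling only for the snapshots you'd actually restore from in a hurry.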