CPUs have been getting faster over the years thanks to ever-smaller components. But as we're heading towards the limit of how small circuits can get, where do we go? One answer is to make your chips "wafer-scale" in size.

What's "Wafer-Scale"?

Integrated circuit devices such as CPUs are created from silicon crystals. To create a device, a huge cylindrical silicon crystal is sliced into circular wafers. Multiple chips are then etched into the surface of the wafer. Once the chips are done, they're tested to find defective units, and those are marked.

Working chips are cut out of the wafer and packaged as final products to be sold. The "yield" is the proportion of chips on a wafer that work. The cost of any part of the wafer that's wasted, whether because a chip failed or because it's an off-cut, has to be recouped by the money made from working chips.

A wafer-scale chip uses the whole wafer for a single processor. It sounds like a great idea, but there have been a few serious problems.

Wafer-Scale Chips Seemed Impossible

There have been a few attempts at "integrating" an entire silicon wafer over the years. The problem is that the process used to make microchips is imperfect. On any completed wafer, there are bound to be flaws.

If you've printed multiple copies of the same chip on a wafer, then a few broken ones aren't the end of the world. However, a single CPU has to be flawless in order to work. So if you tried to integrate the entire wafer, those unavoidable flaws would make the entire giant chip useless.
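To put rough numbers on this, one common simplification is the Poisson yield model, in which the chance that a chip has no defects at all falls off exponentially with its area. Here's a minimal Python sketch of that idea. The defect density and chip areas are made-up illustrative values, not figures from any real manufacturing process.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Chance that a chip of the given area has zero defects (Poisson model)."""
    return math.exp(-area_cm2 * defects_per_cm2)


# Illustrative assumptions only, not data from a real fab.
DEFECT_DENSITY = 0.1      # defects per square centimeter (assumed)
SMALL_CHIP_AREA = 1.0     # cm^2, an ordinary-sized chip (assumed)
WAFER_SCALE_AREA = 460.0  # cm^2, a chip covering most of a wafer (assumed)

small_yield = poisson_yield(SMALL_CHIP_AREA, DEFECT_DENSITY)
wafer_yield = poisson_yield(WAFER_SCALE_AREA, DEFECT_DENSITY)

print(f"Chance a small chip is flawless:       {small_yield:.2%}")
print(f"Chance a wafer-scale chip is flawless: {wafer_yield:.2e}")
```

Under these assumptions, about 90% of the small chips come out flawless, while the chance of a flawless wafer-scale chip is effectively zero. That's why a wafer-scale design has to tolerate defects rather than hope to avoid them.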

To get around this issue, engineers had to rethink how to design a massive processor that's meant to work as an integrated unit. So far, only one company has managed to make a working wafer-scale processor, and it had to solve serious technical issues to make it happen.

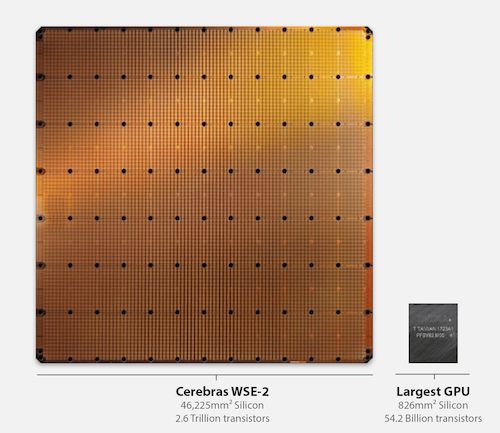

The Cerebras WSE-2

Cerebras Systems' Wafer-Scale Engine 2 (WSE-2) is an absolutely massive chip. It's built on a 7nm process, putting it in the same class as the 7nm and 5nm chips found in devices such as smartphones, laptops, and desktop computers.

The WSE-2 is designed as a mesh of cores that are all connected to one another by a massive grid of high-speed interconnections. The cores in this network can all communicate, even if some of them are defective. The WSE-2 is designed with more cores than advertised, in line with the expected yield from each wafer. This means that, while every chip has defects on it, they don't affect the designed performance at all.
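The spare-core idea can be illustrated with a toy Python sketch. This is not how Cerebras actually routes around defects, which the article doesn't describe. The core counts, the `build_core_map` helper, and the randomly chosen defects are all hypothetical, picked only to show the principle of building more cores than you advertise.

```python
import random


def build_core_map(physical_cores: int, defective: set[int], advertised: int) -> dict[int, int]:
    """Map each advertised (logical) core to a working physical core."""
    working = [core for core in range(physical_cores) if core not in defective]
    if len(working) < advertised:
        raise ValueError("not enough working cores to meet the advertised count")
    # Defective cores are simply skipped; leftover working cores stay as spares.
    return dict(enumerate(working[:advertised]))


# Hypothetical numbers: build 110 cores, advertise 100.
PHYSICAL_CORES = 110
ADVERTISED_CORES = 100

rng = random.Random(0)  # fixed seed so the example is repeatable
defective = set(rng.sample(range(PHYSICAL_CORES), 6))  # pretend 6 cores failed testing

core_map = build_core_map(PHYSICAL_CORES, defective, ADVERTISED_CORES)
print(f"{len(defective)} defective cores, {len(core_map)} logical cores available")
```

As long as the number of defects stays below the number of spares, software still sees the full advertised core count. If too many cores fail, the helper raises an error, which corresponds to a wafer that can't be sold at the advertised spec.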

The WSE-2 is designed specifically to accelerate AI applications that use a machine learning technique known as "deep learning". Compared to current supercomputers used for deep learning tasks, the WSE-2 is orders of magnitude faster, while using less power.

The Advantages of Wafer-Scale CPUs

Wafer-scale CPUs solve many of the problems with current supercomputer designs. Supercomputers are built from many smaller, simpler computers that are networked together. By carefully tailoring tasks to this arrangement, it's possible to add all of that computing power together.

However, each computer in that supercomputer array needs its own supporting components. The distance between the many individual CPU packages in that network also introduces performance issues and limits the types of workloads that can be done in real time.

A wafer-scale CPU effectively combines the processing power of dozens or hundreds of computers into a single integrated circuit, driven by one power supply, all housed in a single chassis. Even better, you can still network multiple wafer-scale computers together to create a supercomputer along traditional lines, only far faster.

Wafer-Scale CPUs For the Rest of Us?

We're unlikely to get any sort of wafer-scale product for regular users who aren't trying to build a supercomputer, but there are elements of the "bigger is better" philosophy evident in consumer electronics too.

A great example is Apple's M1 Ultra system-on-a-chip (SoC). It consists of two M1 Max SoCs joined by a high-speed interconnect, and it presents itself as a single system with twice the resources.

AMD's CPU designs have also taken advantage of "chiplets", which are CPU core units that can be made independently and then "glued" together using another type of high-speed interconnect. Now that circuits may stop getting smaller on CPUs, the time has come to build them out and perhaps even upwards, with complex 3D circuit designs, rather than the more common 2D circuits we use today.