The Interplanetary File System (IPFS) is a distributed, peer-to-peer file-sharing network that is well-positioned to become the underpinning of a new, decentralized web. Here's how it works, and how you can start using it.

A Decentralized Internet

Even though it is global, the World Wide Web is still a centralized network. The data storage behind the internet is predominantly servers---physical or virtual---in massive server farms or cloud platforms. Each of these facilities is typically owned by a single company. The servers are owned or rented by other companies, then configured and exposed so that they are accessible to the outside world.

Anyone wanting to access the information on those servers must make an HTTPS connection from their browser to the appropriate server. The server is at the center, serving all the requests for access to the data that it holds.

This is a simplification of course, but it does describe the general model. To allow for scaling and to provide robustness, organizations can bring mirror servers and content delivery networks into play. But even then, there's still a relatively small and finite number of locations that people can go to in order to access those files.

IPFS is an implementation of a decentralized network. One of the most popular decentralized systems is Git, the version control software. Git is a distributed system because every developer who has cloned a repository has a copy of the entire repository, including the history, on their computer. If the central repository is wiped out, any copy of the repository can be used to restore it. IPFS takes that distributed concept and applies it to file storage and data retrieval.

IPFS was created by Juan Benet and is maintained by Protocol Labs, the company he founded. They took the decentralized nature of Git and the distributed, bandwidth-saving techniques of torrents and created a filing system that works across all of the nodes in the IPFS network. And it's here now, and working.

How IPFS Works

The IPFS decentralized web is made up of all the computers connected to it, known as nodes. Nodes can store data and make it accessible to anyone who requests it.

If someone requests a file or a webpage, a copy of the file is cached on their node. As more and more people request that data, more and more cached copies will exist. Subsequent requests for that file can be fulfilled by any node---or combination of nodes---that has the file on it. The burden of delivering the data and fulfilling the request is gradually shared out amongst many nodes.

This calls for a new type of web address. Instead of address-based routing where you have to know the location of the data and provide a specific URL to that data, the decentralized web uses content-based routing.

You don't say where the data is; you request what you want, and it is found and retrieved for you. Because the data is stored on many different computers, all of those computers can feed parts of the data to your computer at once, like a torrent download. This is intended to lower latency, reduce bandwidth use, and avoid bottlenecks caused by a single, central server.
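To make the idea of content-based routing a little more concrete, here is a minimal sketch in shell. It is not how IPFS is implemented: it assumes a plain SHA-256 hex digest as the address (real CIDs are encoded differently) and uses a local directory as a stand-in for the network. The function names and the `STORE` variable are made up for this illustration.

```shell
# Toy content-addressed store: an item's address is the SHA-256 of its bytes.
# Assumption: sha256sum (GNU coreutils) is installed.

STORE="${STORE:-$(mktemp -d)}"   # hypothetical stand-in for "the network"

# store_put FILE -> copies FILE into the store and prints its content address
store_put() {
    addr=$(sha256sum < "$1" | cut -d' ' -f1)
    cp -- "$1" "$STORE/$addr"
    printf '%s\n' "$addr"
}

# store_get ADDRESS -> prints the content; the caller never names a location
store_get() {
    cat -- "$STORE/$1"
}
```

Calling `store_put notes.txt` prints an address, and `store_get` with that address returns the same bytes no matter where the file originally lived. Two identical files always end up at the same address.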

Moving away from the centralized model means there is no focal point for hackers to attack. But the immediate concern for most people will be the idea that their files, images, and other media will be stored on other people's computers.

It's not quite like that. IPFS isn't something you connect to and upload to. It's not a distributed, communal Dropbox. It's something that you participate in, by hosting a node or paying to use a professionally provisioned node hosted by a cloud service. And unless you choose to share or publish something, it isn't going to be accessible to anyone else. In fact, the term "uploading" is misleading. What you're really doing is importing files into your own node.

If you want a file to be accessible to others but need to keep the content restricted to a select few, you should encrypt it before you import it. The transmission of data is encrypted in both directions, but imported files are purposefully not encrypted by default. This leaves the choice of encryption technology up to you. IPFS doesn't push a form of file storage encryption as the "official" encryption.
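Since the choice of encryption is yours, one possible approach is sketched below using the `openssl enc` command. This is only an example under stated assumptions: it needs OpenSSL 1.1.1 or later (for `-pbkdf2`), it reads the passphrase from an environment variable called `DEMO_PASSPHRASE` (a name invented for this example), and AES-256-CBC is just one of many reasonable ciphers.

```shell
# Encrypt a file before importing it, and decrypt it after retrieval.
# Assumption: OpenSSL 1.1.1+; passphrase supplied in $DEMO_PASSPHRASE (exported).

# encrypt_for_import PLAINTEXT_FILE CIPHERTEXT_FILE
encrypt_for_import() {
    openssl enc -aes-256-cbc -pbkdf2 -salt \
        -in "$1" -out "$2" -pass env:DEMO_PASSPHRASE
}

# decrypt_after_fetch CIPHERTEXT_FILE PLAINTEXT_FILE
decrypt_after_fetch() {
    openssl enc -d -aes-256-cbc -pbkdf2 \
        -in "$1" -out "$2" -pass env:DEMO_PASSPHRASE
}
```

You would import the encrypted output rather than the original, and share the passphrase only with the people who should be able to read it.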

How Data Is Stored

Data is stored in chunks of 256 KB, called IPFS objects. Files larger than that are split into as many IPFS objects as it takes to accommodate the file. One IPFS object per file contains links to all of the other IPFS objects that make up that file.
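The chunk-and-link idea can be sketched with standard tools. The following is an illustration only, assuming fixed-size 256 KiB chunks and a plain text file as the "root" object that lists the chunks in order. The real IPFS object format is different, and the function names and file layout here are hypothetical.

```shell
# Split a file into 256 KiB chunks and write a root list that links them.
# Assumption: split, sha256sum and cat from coreutils; input file is not empty.

CHUNK_SIZE=262144   # 256 KiB

# chunk_file FILE OUTDIR -> creates OUTDIR/chunk.aaaa, chunk.aaab, ...
# plus OUTDIR/root with one "<sha256>  <chunk name>" line per chunk.
chunk_file() {
    mkdir -p "$2"
    split -b "$CHUNK_SIZE" -a 4 "$1" "$2/chunk."
    ( cd "$2" && sha256sum chunk.* > root )
}

# reassemble OUTDIR -> follows the root list and writes the original bytes to stdout
reassemble() {
    ( cd "$1" && while read -r _hash name; do cat "$name"; done < root )
}
```

A 600,000-byte file, for instance, yields three chunks: two full 262,144-byte chunks and one of 75,712 bytes, with three matching lines in the root list.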

When a file is added to the IPFS network it is given a unique hash ID, called the content ID, or CID. In the original CIDv0 format, this is a 46-character string that begins with "Qm". That's how the file is identified and referenced within the IPFS network. Recalculating the hash when the file is retrieved verifies the integrity of the file. If the check fails, the file has been modified. When files are legitimately updated, IPFS handles the versioning of files. Because the CID is derived from the content, the updated file gets a new CID, and the new version is stored along with the previous version. IPFS operates like a distributed file system, and this concept of versioning provides a degree of immutability to that file system.
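The integrity check boils down to "hash it again and compare." Here is a minimal sketch of that step, assuming a bare SHA-256 hex digest in place of a real CID. The function name `verify_content` is made up for the example.

```shell
# Recompute a file's hash and compare it with the hash it was requested by.
# Assumption: a plain SHA-256 hex digest stands in for the CID.

# verify_content EXPECTED_HASH FILE -> exit status 0 only if FILE still matches
verify_content() {
    actual=$(sha256sum < "$2" | cut -d' ' -f1)
    [ "$actual" = "$1" ]
}
```

If even one byte of the file changes, `verify_content` returns a non-zero status. The same property explains the versioning behavior: an edited file hashes to a different value, so it can't silently replace the original.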

Let's say you store a file in IPFS on your node, and someone called Dave requests it and downloads it to their node. The next person that asks for that file might get it from you, or from Dave, or in a torrent-like way with parts of the file coming from your node and from Dave's node. The more people who download the file, the more nodes there are to chip in and help with subsequent file requests.

Garbage collection will periodically remove cached IPFS objects. If you want to permanently store a file you can pin it to your node. That means it won't be cleaned out during garbage collection. You can pay for storage on cloud storage providers that expose your data to the IPFS network and keep it permanently pinned, and there are services specifically tailored to hosting websites that are IPFS accessible.
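The relationship between pinning and garbage collection can be modeled in a few lines. This is a toy model, not the real mechanism: it assumes a directory of cached objects named by hash and a text file listing pinned hashes. `CACHE`, `PINS`, `pin`, and `collect_garbage` are all hypothetical names.

```shell
# Toy model of pinning: garbage collection removes everything that isn't pinned.

CACHE="${CACHE:-$(mktemp -d)}"   # cached objects, one file per hash
PINS="${PINS:-$(mktemp)}"        # pinned hashes, one per line

# pin HASH -> records HASH as pinned (pinning twice has no extra effect)
pin() {
    grep -qxF -- "$1" "$PINS" 2>/dev/null || printf '%s\n' "$1" >> "$PINS"
}

# collect_garbage -> deletes every cached object that is not in the pin list
collect_garbage() {
    for obj in "$CACHE"/*; do
        [ -e "$obj" ] || continue        # empty cache: the glob matched nothing
        name=${obj##*/}
        grep -qxF -- "$name" "$PINS" 2>/dev/null || rm -- "$obj"
    done
}
```

On a real node, the corresponding commands are `ipfs pin add <CID>` to pin an object and `ipfs repo gc` to run garbage collection by hand.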

If something on your website goes viral and drives massive waves of traffic to your website, the pages will be cached in all the nodes that retrieve those pages. Those cached pages will be used to help service further page requests, helping you ride the wave and satisfy demand.

Of course, all of this depends on a sufficient number of nodes being online and available, with enough pinned and cached data between them. And that requires participants.

How to Install IPFS

Windows users can download and run the EXE file found on the IPFS release page. If you're on a Mac, download the DMG file and drag it into Applications as you normally would. If you run into trouble, check out the official documentation.

For demonstration purposes, we'll walk through the installation on Ubuntu. Snap packages for IPFS and for the IPFS desktop client are available on any Linux distribution that supports Snap. If you just install IPFS you'll have a fully working IPFS node that you can control and administer using a browser. If you install the desktop client you don't need to use the browser; the client provides all of the same functionality.

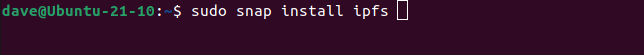

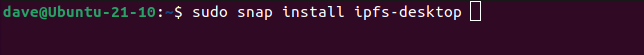

To install the Snaps use:

sudo snap install ipfs

sudo snap install ipfs-desktop

Now you need to run the command to initialize your node.

ipfs init

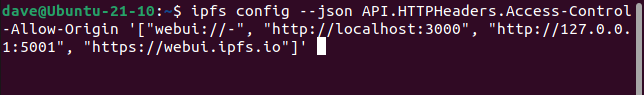

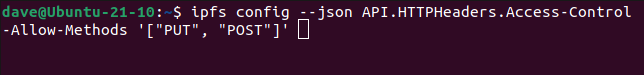

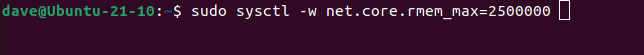

The following commands are suggested by IPFS if you run into difficulties and the daemon doesn't run, or you can't connect to it. On all the test computers we tried, these were required, so you might as well go ahead and issue them now:

ipfs config --json API.HTTPHeaders.Access-Control-Allow-Origin '["webui://-", "http://localhost:3000", "http://127.0.0.1:5001", "https://webui.ipfs.io"]'

ipfs config --json API.HTTPHeaders.Access-Control-Allow-Methods '["PUT", "POST"]'

sudo sysctl -w net.core.rmem_max=2500000

With those out of the way, you can start the IPFS daemon.

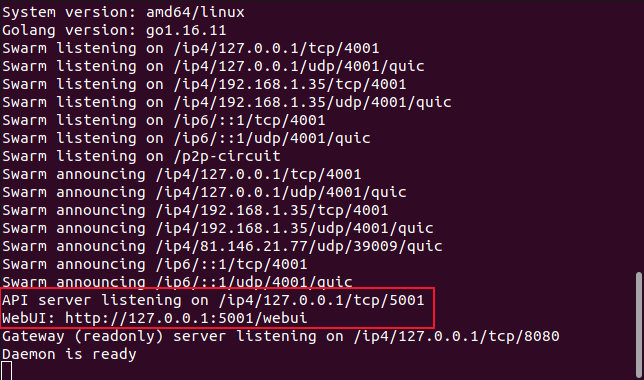

ipfs daemon

When the daemon launches it reports the two addresses you can use to connect to it. One is for the IPFS desktop and the other is for the IPFS "webui" or web user interface.
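If you'd like a quick sanity check from the command line before opening a graphical interface, something like the following sketch should work in a second terminal window. It assumes the `ipfs` command is on your PATH and that your node has been initialized. The function name `ipfs_roundtrip` is our own invention.

```shell
# Import a file, read it back by its CID, and confirm the bytes are identical.

# ipfs_roundtrip FILE -> status 0 if the content read back matches FILE,
# status 2 if the ipfs command is not installed.
ipfs_roundtrip() {
    command -v ipfs >/dev/null 2>&1 || {
        echo "ipfs not found on PATH" >&2
        return 2
    }
    cid=$(ipfs add -Q "$1") || return 1   # -Q prints only the final CID
    echo "CID: $cid"
    ipfs cat "$cid" | cmp -s - "$1"       # status 0 when the bytes match
}
```

Run it as `ipfs_roundtrip somefile.txt`. The CID it prints is what anyone else would use to request that file from the network.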

The Web Interface

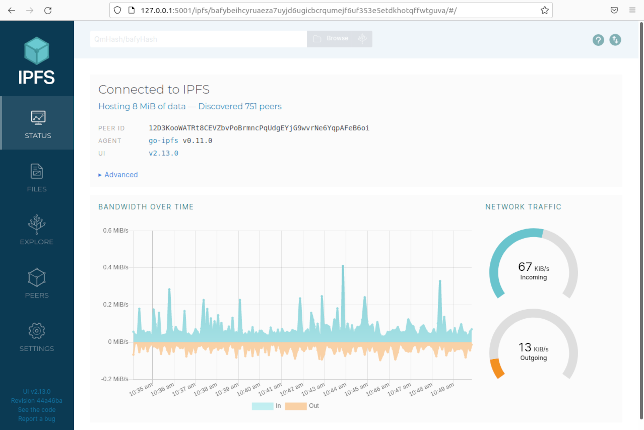

Paste the webui address http://127.0.0.1:5001/webui into your browser to connect to the IPFS web front end.

The default page is the "Status" screen. This is a dashboard showing the status and activity of your node. It shows the size of the files you're hosting, plus the total size of the cached IPFS objects your node is hosting. This is data from elsewhere in the IPFS network. The dashboard also displays two real-time gauges showing inbound and outbound IPFS traffic, and a real-time graph showing the history of that traffic.

To change to a different screen, click one of the icons in the left-hand sidebar. The "Files" screen lets you see the files you've imported into IPFS. You can use the blue "Import" button to search for files or folders on your computer that you want to import into IPFS.

IPFS makes use of Merkle trees, or more precisely Merkle DAGs (directed acyclic graphs), which generalize them. A Merkle tree is a very efficient hash tree, invented in 1979 by Ralph Merkle, in which every node is identified by a hash of its contents. If you have a lot of trees, you have a forest. The "Explore" icon opens a screen that lets you browse through different types of information stored within IPFS and its Merkle forest.
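To show what a hash tree actually computes, here is a small sketch that derives a single root hash from a list of files. It is a simplified binary Merkle tree, not the Merkle DAG format IPFS uses. The choice of SHA-256, hex concatenation of child hashes, and repeating the last hash when a level has an odd count are all assumptions made for this example.

```shell
# Compute a binary Merkle root over files using SHA-256.
# Assumption: odd levels pair the last hash with itself.

# merkle_root FILE... -> prints the root hash for the given leaf files
merkle_root() {
    level=$(mktemp)
    next=$(mktemp)
    # Leaves: one hash per input file, in argument order.
    for f in "$@"; do
        sha256sum < "$f" | cut -d' ' -f1
    done > "$level"
    # Combine hashes pairwise until a single hash remains.
    while [ "$(( $(wc -l < "$level") ))" -gt 1 ]; do
        : > "$next"
        while IFS= read -r left; do
            IFS= read -r right || right=$left
            printf '%s%s' "$left" "$right" | sha256sum | cut -d' ' -f1 >> "$next"
        done < "$level"
        mv "$next" "$level"
    done
    cat "$level"
    rm -f "$level" "$next"
}
```

Changing any one file changes its leaf hash, which changes every hash above it, so the root alone is enough to detect that something in the set is different.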

There's an archive of cartoons from the well-known XKCD website. Clicking that option and selecting a cartoon delivers your chosen cartoon to you over IPFS.

The "Peers" icon opens a world map that plots where your IPFS connections are located around the globe.

Within a few minutes, we had connections from Australia, Belarus, Belgium, Canada, China, Finland, France, Germany, Japan, Malaysia, Netherlands, Norway, Poland, Portugal, Romania, Russia, Singapore, South Korea, Sweden, Taiwan, Turkey, the United Kingdom, and of course, the USA.

Proof positive, if any were needed, that IPFS has generated a global buzz. You won't connect to every available node, of course. That would be inefficient.

The IPFS Desktop Client

Find IPFS Desktop in your system's application launcher. On GNOME, with the IPFS daemon stopped, press your "Super" key and type "ipfs." You'll see the blue IPFS cube icon.

Click this icon and the desktop client will start. It will start the daemon itself.

The desktop client's look and functionality are exactly the same as the web interface, but this time it is running as a stand-alone application.

One additional feature the application provides is an app-indicator in the notification area.

This gives you quick access to a menu of options and a traffic light indicator of the status of your node. The indicator is green for normal running, red for an error, and yellow for starting up.

What Comes Next?

Nothing is going to suddenly replace the existing, centralized web, but over time things will evolve. Perhaps IPFS is a glimpse of what it might evolve into.