If you've set up a TV set or computer monitor recently, you've probably come across HDMI ports. But what are they, and how do they differ from other video connectors and standards? We'll explain.

A High-Definition Digital Media Standard

HDMI stands for "High-Definition Multimedia Interface." It's a digital video connection standard that helps you get clean HD video on TV sets and computer monitors. At the moment, it's typically the highest-quality video connection available between devices such as computers, set-top boxes, game consoles, DVRs, Blu-ray or DVD players, and monitors or TV sets.

HDMI is a standard governed by the HDMI Forum, a group of over 80 companies that vote to determine how HDMI works today and how new extensions of the standard will work in the future.

HDMI can carry both a video and an audio signal, making it a convenient one-plug solution for high-definition digital video. (Previous digital video standards such as DVI did not support audio and required a separate cable.)

Different Types of HDMI

HDMI can get a little confusing. There are four major sizes of HDMI connectors: Type A (Standard), Type B (Dual-Link, uncommon), Type C (Mini), and Type D (Micro). Separately from connector size, there are eight notable specification revisions that support different features and resolutions:

- HDMI 1.0: Released in 2002, it was the first HDMI specification. It supported a maximum resolution of 1080p at 60 Hz.

- HDMI 1.1: Released in 2004, it added support for the DVD Audio standard.

- HDMI 1.2: Released in 2005, it added support for One Bit Audio, used by Super Audio CDs. Later that same year, HDMI 1.2a fully specified Consumer Electronics Control (CEC) features. (CEC allows devices to control each other when they're connected via HDMI, such as turning a TV off when you turn off a Blu-ray player.)

- HDMI 1.3: Released in 2006, it was a significant update that added support for higher video resolutions and frame rates (1080p at 120 Hz and 1440p at 60 Hz), higher color depths (up to 48-bit, or 16 bits per color channel, a feature called Deep Color), and higher audio sample rates (up to 192 kHz). It also added support for the xvYCC extended color space.

- HDMI 1.4: Released in 2009, it added support for 3D video, Ethernet over HDMI, and 4K video resolutions (up to 4096x2160 at 24 Hz).

- HDMI 2.0: Released in 2013, this release (sometimes called "HDMI UHD") added support for 4K video at higher frame rates (up to 4096x2160 at 60 Hz). It also raised the maximum bandwidth from 10.2 Gbps to 18 Gbps.

- HDMI 2.1: Released in 2017, it added support for 4K video at 120 Hz and 8K video at 60 Hz, as well as resolutions up to 10K. (The highest modes, such as 8K at 120 Hz, rely on Display Stream Compression.) It also supports a new feature called Dynamic HDR, which can adjust the HDR settings on a frame-by-frame basis. The maximum bandwidth is 48 Gbps.

- HDMI 2.1a: Scheduled for release in 2022. It's a relatively minor update that adds Source-Based Tone Mapping (SBTM).
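
To get a feel for why the bandwidth figures in the list above matter, here is a rough Python sketch that estimates how much raw data a video mode needs and compares it with the 18 Gbps and 48 Gbps maximums. The function names are made up for illustration, and the math is deliberately simplified: it counts only visible pixels and ignores blanking intervals and link-encoding overhead, so a real HDMI link needs noticeably more headroom than this suggests.

```python
# Maximum bandwidth per HDMI revision in Gbps. The 18 and 48 Gbps figures come
# from the revision list above; earlier revisions are left out of this sketch.
MAX_BANDWIDTH_GBPS = {"HDMI 2.0": 18.0, "HDMI 2.1": 48.0}


def raw_data_rate_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Return the uncompressed pixel data rate in Gbps.

    Simplification (an assumption of this sketch): only visible pixels are
    counted. Blanking intervals and encoding overhead are ignored.
    """
    return width * height * refresh_hz * bits_per_pixel / 1e9


def fits(version, width, height, refresh_hz, bits_per_pixel=24):
    """Rough check: is the raw data rate within the revision's maximum?"""
    rate = raw_data_rate_gbps(width, height, refresh_hz, bits_per_pixel)
    return rate <= MAX_BANDWIDTH_GBPS[version]


if __name__ == "__main__":
    modes = {
        "4096x2160 at 60 Hz": (4096, 2160, 60),
        "4096x2160 at 120 Hz": (4096, 2160, 120),
    }
    for label, (width, height, hz) in modes.items():
        rate = raw_data_rate_gbps(width, height, hz)
        for version in MAX_BANDWIDTH_GBPS:
            verdict = "fits" if fits(version, width, height, hz) else "too much"
            print(f"{label}: about {rate:.1f} Gbps raw, {version}: {verdict}")
```

With the default 24 bits per pixel, 4K at 60 Hz lands under HDMI 2.0's 18 Gbps, while 4K at 120 Hz needs the extra room HDMI 2.1 provides. Passing `bits_per_pixel=48` shows how quickly the deepest color mode doubles the requirement.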

If that weren't confusing enough, HDMI cables are also available in different grades, indicated by speed ratings such as "Standard," "High-Speed," or "Ultra High-Speed." The speed rating indicates the maximum bandwidth the cable can carry.

For example, a "Standard" HDMI cable is rated for 720p or 1080i video, while a "High-Speed" HDMI cable can carry 1080p video at up to 120 Hz or 4K video at 30 Hz. You'll need a "High-Speed" or "Ultra High-Speed" HDMI cable for 1080p and higher resolutions or for frame rates above 60 Hz.

Should I Use HDMI or Another Connector?

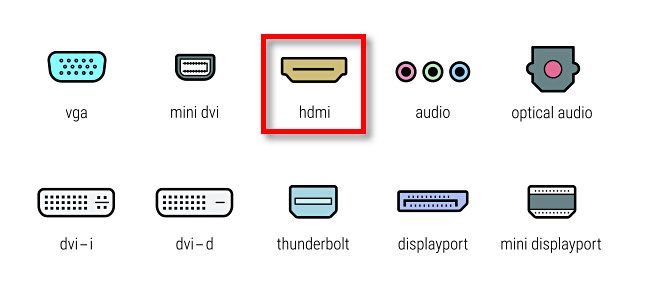

Whether you use HDMI or not depends on the application. Prior to HDMI, most people connected video devices to their TV sets or monitors using analog video connections such as component video, composite video, VGA, or even an RF antenna jack.

Generally speaking, for modern video applications, digital video connections provide sharper, clearer video quality than analog video connections. So if you have a choice between HDMI and an older analog standard, you'll probably want to pick HDMI.

With TV sets, HDMI is usually your best option. If you're using a computer, you might have a choice between HDMI and newer standards such as USB-C, Thunderbolt, or DisplayPort. In that case, the decision becomes more complicated: your best choice might come down to personal preference, whichever cables you have available, or the maximum resolution each connection type supports.

But if you ever have to choose between HDMI and an older analog standard such as VGA, HDMI is almost always the best way to go. Good luck!