If you're shopping for a new 4K ultra-high-definition TV, it almost certainly supports high-dynamic-range (HDR) video. But what's the difference between the competing HDR formats? Should you factor this into your purchase?

What Is HDR Video?

HDR stands for high dynamic range. It refers to the visual presentation of movies, TV shows, video games, or images. In essence, HDR provides a better, brighter image with more detail than a standard dynamic range (SDR) video or image.

Dynamic range is the term used to describe the amount of visible detail between the brightest whites and the darkest blacks. The higher the dynamic range, the more detail is preserved in shadows and highlights. HDR video requires an HDR-capable display that can reach a much higher peak brightness than a standard SDR television.

Dynamic range is measured in stops, a photographic term commonly associated with light value. While SDR displays are capable of displaying between 6 and 10 stops, HDR displays can display at least 13 stops with many exceeding 20. This means more detail on-screen, and more detail preserved in highlights and shadows, not just mid-tones.
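To put numbers on that, here's a minimal sketch of how stops relate to brightness. Each stop is a doubling of light, so the range in stops is the base-2 logarithm of the ratio between a display's peak brightness and its black level. The function name and the two example panels are hypothetical and chosen only for illustration; they don't describe any real TV.

```python
import math


def dynamic_range_stops(peak_nits: float, black_nits: float) -> float:
    """Return dynamic range in stops (each stop is a doubling of light)."""
    if peak_nits <= 0 or black_nits <= 0:
        raise ValueError("luminance values must be positive")
    return math.log2(peak_nits / black_nits)


# Hypothetical panels; the nit values are illustrative assumptions.
sdr_stops = dynamic_range_stops(peak_nits=100.0, black_nits=0.1)
hdr_stops = dynamic_range_stops(peak_nits=1000.0, black_nits=0.05)

print(f"SDR-like panel: {sdr_stops:.1f} stops")  # prints 10.0 stops
print(f"HDR-like panel: {hdr_stops:.1f} stops")  # prints 14.3 stops
```

Notice that a brighter peak and a darker black level both widen the range, which is why black level matters as much as peak brightness.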

HDR video also uses 10-bit color as a baseline (with some standards supporting up to 12-bit color). It's paired with the expanded Rec. 2020 color gamut, which covers around 75% of the visible color spectrum. By comparison, the Rec. 709 standard used in SDR content covers around 36% of the visible spectrum.
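If you're wondering what those extra bits actually buy you, the arithmetic is simple. The sketch below assumes three color channels (red, green, and blue) and compares against 8-bit color, which is typical for SDR video but isn't stated above. The function names are made up for this example.

```python
def shades_per_channel(bits: int) -> int:
    """Number of distinct levels one color channel can take at this bit depth."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Distinct colors from three channels (red, green, blue)."""
    return shades_per_channel(bits) ** 3


# 8-bit is included as the assumed SDR baseline for comparison.
for bits in (8, 10, 12):
    shades = shades_per_channel(bits)
    colors = total_colors(bits)
    print(f"{bits}-bit: {shades:,} shades per channel, {colors:,} colors")
```

At 10 bits, each channel has 1,024 shades instead of 256, for roughly 1.07 billion colors versus about 16.7 million. Those finer steps are what help smooth out banding in gradients like skies and sunsets.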

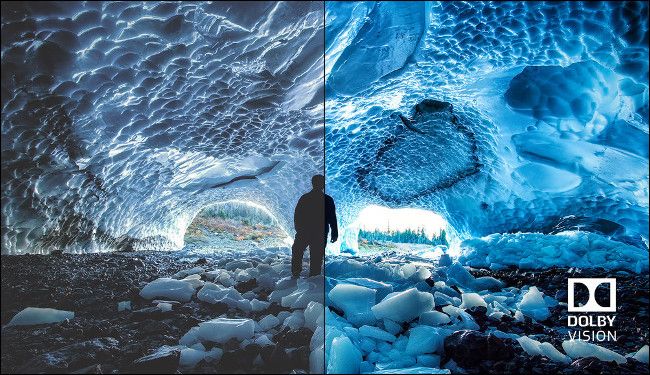

More colors on screen and a much higher peak brightness make for a more realistic and immersive viewing experience. This doesn't necessarily mean every scene will be much brighter or more saturated than SDR video. Individual elements, like the sun or the flash of an explosion, will benefit from added peak brightness, while more variations in color make for a more lifelike image.

To really understand how much better HDR video is than SDR, you'll need to see it for yourself.

HDR10: The "Standard" Implementation

HDR10 is the baseline standard on most HDR-compliant TVs. If you buy a 4K Ultra-HD Blu-ray with an "HDR" sticker on it, there's a good chance it will be presented in HDR10. This has led to HDR10 becoming somewhat of a "compatibility mode" that most modern TVs can fall back on.

Content produced for HDR10 is mastered at up to 1,000 nits of peak brightness. It uses static metadata to define the average frame light level and maximum brightness once for the entire title, which means those values don't vary from scene to scene. Although HDR10 is one of the more basic HDR formats, it can still look significantly better than SDR content.

Since HDR10 is an open format, it also has a wide range of support from both TV and monitor manufacturers and content producers. As a result, you'll find HDR10 content everywhere, including lots of free videos on YouTube. Although standards for HDR gaming are still emerging, consoles and Windows use HDR10 to deliver games in high dynamic range, as well.

HDR10+: Improved HDR with Dynamic Metadata

HDR10+ is another open standard, but it's one produced by Samsung and Amazon Video. It improves on HDR10 by using dynamic metadata that can adjust luminance on a per-scene or frame-by-frame basis. Content produced in HDR10+ is currently mastered at up to 4,000 nits of peak brightness. Dynamic metadata helps preserve detail in highlights and shadows.

Unfortunately, HDR10+ doesn't take into account the capabilities of the device on which it is being displayed (just like regular HDR10). This limitation has been addressed in other standards, notably Dolby Vision. When certain scenes exceed the capabilities of the display, it's up to the display itself to decide how to tone-map the image. This can vary depending on the display.
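To see why per-scene metadata matters when a display has to tone-map, consider this toy sketch. It assumes a deliberately crude tone mapper that scales brightness linearly whenever the reported content peak exceeds what the display can show. Real TVs use far more sophisticated curves, and the scene names, nit values, and function are all hypothetical.

```python
def tone_map(pixel_nits: float, content_peak_nits: float,
             display_peak_nits: float) -> float:
    """Toy tone mapper: scale down only if the reported peak exceeds the display's."""
    if content_peak_nits <= display_peak_nits:
        return pixel_nits  # content fits, so show it unchanged
    return pixel_nits * display_peak_nits / content_peak_nits


DISPLAY_PEAK = 500.0  # hypothetical mid-range TV

# scene name -> (brightest value in the scene, a sample pixel), both in nits
scenes = {
    "dim interior": (300.0, 150.0),
    "explosion": (4000.0, 2000.0),
}

# Static metadata: one peak value describes the whole title.
title_peak = max(peak for peak, _ in scenes.values())

for name, (scene_peak, pixel) in scenes.items():
    static_result = tone_map(pixel, title_peak, DISPLAY_PEAK)
    dynamic_result = tone_map(pixel, scene_peak, DISPLAY_PEAK)
    print(f"{name}: static {static_result:.2f} nits, dynamic {dynamic_result:.2f} nits")
```

With a single title-wide peak, the dim interior gets squashed to 18.75 nits even though it would have fit on the display untouched at 150 nits. With per-scene values, only the explosion is compressed.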

One of the biggest problems with HDR10+ is a lack of availability. Presently, Samsung is the only big-name manufacturer to go all-in on it, although there's been limited support from Panasonic, Vizio, and Oppo. Content is also sparse; at this writing, only Amazon Video offers streaming content in HDR10+.

Dolby Vision: A Proprietary Format with Dynamic Metadata

Dolby Vision is a direct competitor of HDR10+, and it shares many similarities from a technical standpoint. Current Dolby Vision content is mastered at a brightness of up to 4,000 nits, with support up to 10,000 nits, 8K resolution, and 12-bit color in the future. It also uses dynamic metadata for scene-by-scene adjustments to improve overall picture quality.

One significant benefit over HDR10+ is that Dolby Vision takes into account the capabilities of the display when presenting content. This can result in a viewing experience that is closer to the creator's intent, regardless of how bright or dark the display can get.

Because Dolby Vision is a proprietary format, TV manufacturers have to pay to implement it. It's mostly found on high-end TVs, but it's been widely adopted by LG, Sony, TCL, Hisense, Panasonic, and Philips. Samsung is the only notable manufacturer to have shunned Dolby Vision entirely in favor of HDR10+.

If you really search, there are TVs out there that support all formats. However, HDR10+ is noticeably harder to find than Dolby Vision. There's also a lot more content available in Dolby Vision. Many Netflix and Disney+ shows are produced in Dolby Vision, with support for some shows on services like Amazon Prime Video and VUDU.

There's also support for Dolby Vision in the Xbox Series X and Series S, which promise to deliver the first Dolby Vision gaming experiences in 2021. We'll have to wait to see how that pans out, but it's something to keep in mind if you'll be buying a next-gen Xbox any time soon.

Hybrid Log-Gamma: The Broadcast Standard

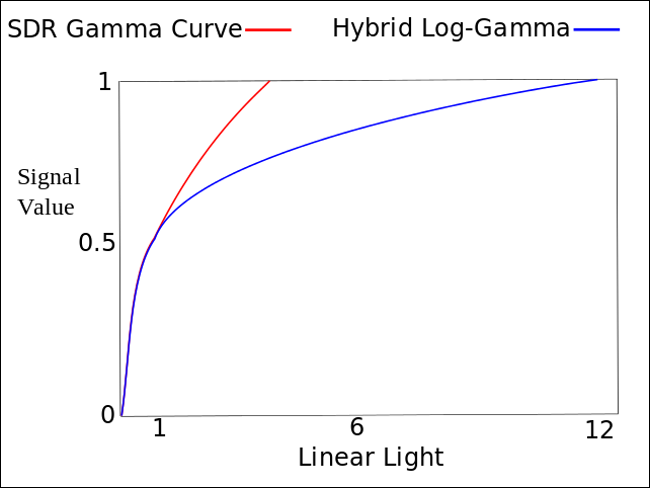

Broadcast standards evolve differently than production standards, but that doesn't mean sticking with SDR forever. Hybrid Log-Gamma (HLG) is an open broadcast format developed by the BBC in the U.K. and NHK, the public broadcaster in Japan. It's a backward-compatible format that implements HDR video over broadcast. HLG specifically targets a peak brightness of 1,000 nits, like HDR10.

Because broadcasts have to account for such a wide array of devices with differing abilities, ensuring that modern HDR broadcasts display correctly on older SDR displays is essential. HLG accomplishes this by delivering a signal that allows modern HDR displays to achieve greater dynamic range without closing the door on older technology.
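The name hints at how this works. The sketch below implements the HLG transfer curve as published in ITU-R BT.2100 (the formula and constants come from that specification, not from anything stated above). Darker tones follow a square-root curve similar to the gamma curve SDR sets expect, while highlights switch to a logarithmic curve that squeezes in the extra range. The function name is our own, and scene light is assumed to be normalized to the range 0 to 1.

```python
import math

# Constants from the ITU-R BT.2100 HLG definition.
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)


def hlg_oetf(scene_light: float) -> float:
    """Map normalized scene light (0..1) to an HLG signal value (0..1)."""
    if not 0.0 <= scene_light <= 1.0:
        raise ValueError("scene_light must be between 0 and 1")
    if scene_light <= 1 / 12:
        # Lower part: gamma-like square-root curve
        return math.sqrt(3 * scene_light)
    # Upper part: logarithmic curve for highlights
    return A * math.log(12 * scene_light - B) + C


for light in (0.0, 1 / 12, 0.5, 1.0):
    print(f"scene light {light:.4f} -> signal {hlg_oetf(light):.4f}")
```

The two pieces meet at a signal value of 0.5, so the entire lower half of the signal behaves much like a conventional SDR picture, and no metadata is needed to interpret it.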

Although this format was created for broadcasts, it's also supported by streaming services, including YouTube and BBC iPlayer. Broadcasters already using HLG include Eutelsat, DirecTV, and Sky U.K.

Advanced HDR by Technicolor: Dead on Arrival

One HDR format that has so far failed to capture an audience is Advanced HDR by Technicolor. Pioneered by LG and Technicolor, the format first appeared around 2016. LG televisions supported it through 2019, but the company abruptly dropped the format from its 2020 lineup. This has effectively killed off the technology (for now).

The primary issue with Technicolor's effort was a lack of content. As of September 2020, we couldn't find a single film for sale mastered in Advanced HDR or any streaming service that supports it.

Which Format Should You Invest In?

If you're buying an HDR TV in 2020 (or beyond), it will support HDR10, which is a huge leap in dynamic range and brightness over standard dynamic range (SDR) content. If you've yet to experience HDR10 content, you're in for a treat! To benefit from it, you'll need a TV that gets somewhere close to 1,000 nits of brightness and content mastered to take advantage of it.

Beyond HDR10, Dolby Vision has the widest support among both content producers and television manufacturers. More Blu-rays and streaming services are available in Dolby Vision. The format is also fairly future-proof because we won't see the best it has to offer until display technology matures further. In addition, both Roku and Google will release streaming boxes that support Dolby Vision this year.

You also have plenty of TVs to choose from that support Dolby Vision, while HDR10+ support is limited mostly to Samsung. Vizio and Hisense produce TVs that support both, but not on every model. Also, very few films are mastered in HDR10+, and only Amazon produces streaming content for it.

Because HLG is a broadcast standard, most modern TVs will support it moving forward. Your display doesn't have to support HLG for you to receive broadcasts, though. If you don't watch much network TV or cable, you can put HLG low on your list of priorities.

In most cases, the TV you choose will dictate the standards you can enjoy. Given that, you'll also want to understand the difference between display technologies so you can make an informed choice.