
You can extract text from images on the Linux command line using the Tesseract OCR engine. It's fast, accurate, and works in about 100 languages. Here’s how to use it.

Optical Character Recognition

Optical character recognition (OCR) is the ability to look at and find words in an image, and then extract them as editable text. This simple task for humans is very difficult for computers to do. Early efforts were clunky, to say the least. Computers were often confused if the typeface or size was not to the OCR software’s liking.

Nevertheless, the pioneers in this field were still held in high esteem. If you lost the electronic copy of a document, but still had a printed version, OCR could re-create an electronic, editable version. Even if the results weren’t 100 percent accurate, this was still a great time-saver.

With some manual tidying up, you'd have your document back. People were forgiving about the mistakes it made because they understood the complexity of the task facing an OCR package. Plus, it was better than retyping the entire document.

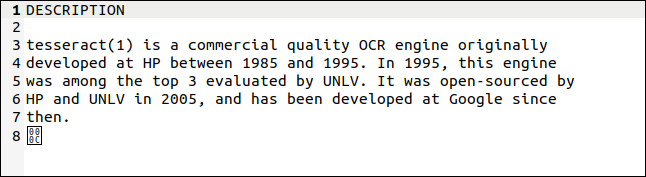

Things have improved significantly since then. The Tesseract OCR application, written by Hewlett Packard, started in the 1980s as a commercial application. It was open-sourced in 2005, and it's now supported by Google. It has multi-language capabilities, is regarded as one of the most accurate OCR systems available, and you can use it for free.

Installing Tesseract OCR

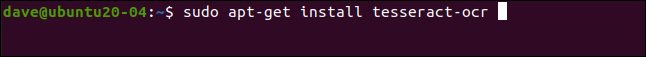

To install Tesseract OCR on Ubuntu, use this command:

sudo apt-get install tesseract-ocr

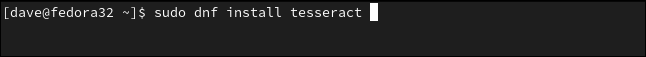

On Fedora, the command is:

sudo dnf install tesseract

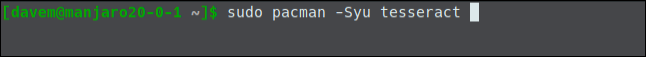

On Manjaro, you need to type:

sudo pacman -Syu tesseract
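Whichever distribution you use, it's worth confirming that the install worked before moving on. The sketch below is one way to do that. The `check_tesseract` function name is our own invention for illustration; the `--version` and `--list-langs` options are the ones tesseract itself provides.

```shell
# Report whether tesseract is on the PATH. If it is, show the first line of
# its version banner and the language files it can find.
# check_tesseract is a hypothetical helper name, not part of Tesseract.
check_tesseract() {
    if command -v tesseract >/dev/null 2>&1; then
        tesseract --version 2>&1 | head -n 1
        tesseract --list-langs 2>&1
        return 0
    else
        echo "tesseract not found on PATH"
        return 1
    fi
}

check_tesseract || true
```

A fresh install will typically list only a small number of languages. Additional language files are installed separately.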

Using Tesseract OCR

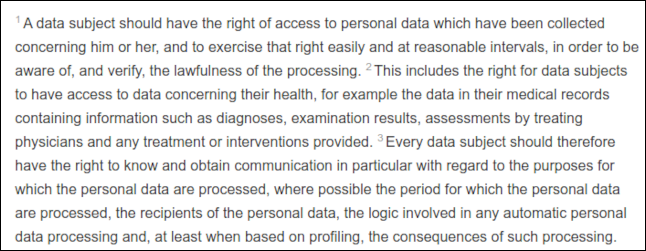

We’re going to pose a set of challenges to Tesseract OCR. Our first image that contains text is an extract from Recital 63 of the General Data Protection Regulation. Let’s see if OCR can read this (and stay awake).

It’s a tricky image because each sentence starts with a faint superscript number, which is typical in legislative documents.

We need to give the tesseract command some information, including:

- The name of the image file we want it to process.

- The name of the text file it will create to hold the extracted text. We don’t have to provide the file extension (it will always be .txt). If a file already exists with the same name, it will be overwritten.

- We can use the --dpi option to tell tesseract what the dots per inch (dpi) resolution of the image is. If we don’t provide a dpi value, tesseract will try to figure it out.

Our image file is named "recital-63.png," and its resolution is 150 dpi. We’re going to create a text file from it called "recital.txt."

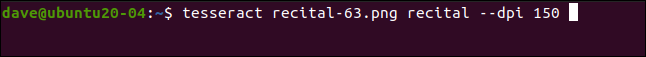

Our command looks like this:

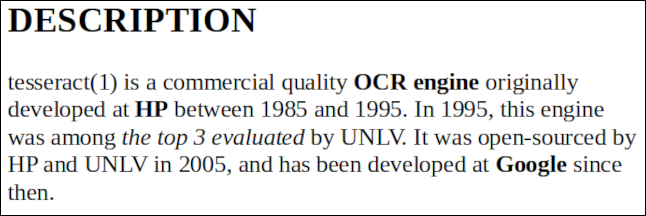

tesseract recital-63.png recital --dpi 150

The results are very good. The only issue is the superscripts: they were too faint to be read correctly. A good quality image is vital to get good results.

tesseract has interpreted the superscript numbers as quotation marks (") and degree symbols (°), but the actual text has been extracted perfectly (the right side of the image had to be trimmed to fit here).

The final character is a byte with the hexadecimal value of 0x0C, which is a form feed (the carriage return is 0x0D).
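If that trailing byte gets in the way, it's easy to inspect and remove with standard tools. The sketch below uses a stand-in file rather than real tesseract output, so the file names "sample.txt" and "sample-clean.txt" are assumptions made for illustration. Substitute your own text file.

```shell
# Stand-in for tesseract output: one line of text followed by a form feed (0x0C).
# sample.txt is a hypothetical file name used only for this demonstration.
printf 'Extracted text\n\f' > sample.txt

# Show the final byte of the file in hexadecimal.
tail -c 1 sample.txt | od -An -tx1

# Write a copy of the file with every form feed character removed.
tr -d '\f' < sample.txt > sample-clean.txt
```

The od command reports the last byte as 0c, and the cleaned copy contains only the text and its newline.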

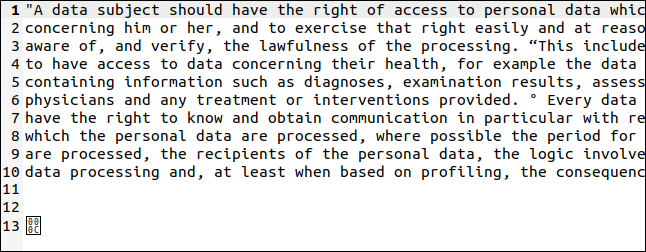

Below is another image with text in different sizes, and both bold and italics.

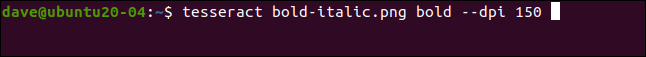

The name of this file is "bold-italic.png." We want to create a text file called "bold.txt," so our command is:

tesseract bold-italic.png bold --dpi 150

This one didn't pose any problems, and the text was extracted perfectly.

Using Different Languages

Tesseract OCR supports around 100 languages. To use a language, you must first install it. When you find the language you want to use in the list, note its abbreviation. We're going to install support for Welsh. Its abbreviation is "cym," which is short for "Cymraeg," the Welsh word for the Welsh language.

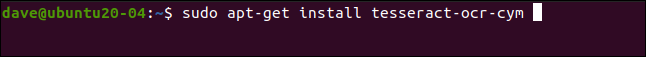

The installation package is called "tesseract-ocr-" with the language abbreviation tagged onto the end. To install the Welsh language file in Ubuntu, we'll use:

sudo apt-get install tesseract-ocr-cym

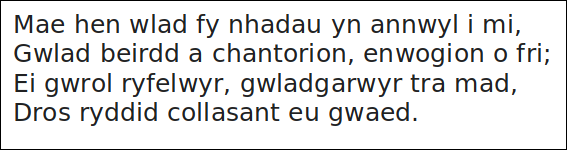

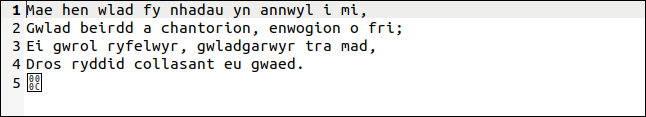

The image with the text is below. It's the first verse of the Welsh national anthem.

Let's see if Tesseract OCR is up to the challenge. We'll use the -l (language) option to let tesseract know the language in which we want to work:

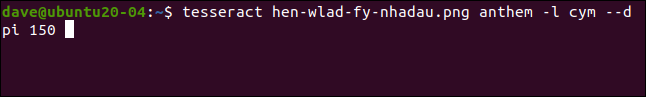

tesseract hen-wlad-fy-nhadau.png anthem -l cym --dpi 150

tesseract copes perfectly, as shown in the extracted text below. Da iawn, Tesseract OCR.

If your document contains two or more languages (like a Welsh-to-English dictionary, for example), you can use a plus sign (+) to tell tesseract to add another language, like so:

tesseract image.png textfile -l eng+cym+fra
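If you build the language list in a script, you can join the abbreviations with plus signs rather than typing the string by hand. This is only a sketch: `join_langs` is a helper name we made up, and "image.png" and "textfile" are the same placeholder names used above.

```shell
# Join language abbreviations with "+" to form the value for the -l option.
# join_langs is a hypothetical helper. The body runs in a subshell so the
# IFS change does not leak into the rest of the script.
join_langs() (
    IFS=+
    printf '%s\n' "$*"
)

langs=$(join_langs eng cym fra)
echo "$langs"

# Placeholder file names; uncomment to run against a real image.
# tesseract image.png textfile -l "$langs"
```

Each language in the list must already be installed, or tesseract will report that it can't load it.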

Using Tesseract OCR with PDFs

The tesseract command is designed to work with image files, but it's unable to read PDFs. However, if you need to extract text from a PDF, you can use another utility first to generate a set of images. A single image will represent a single page of the PDF.

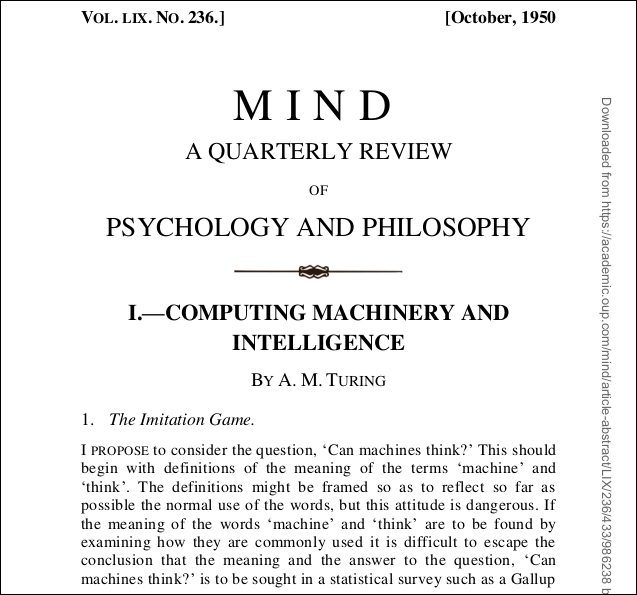

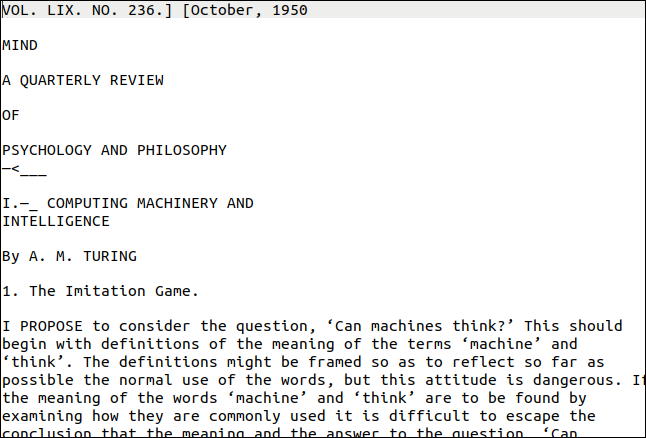

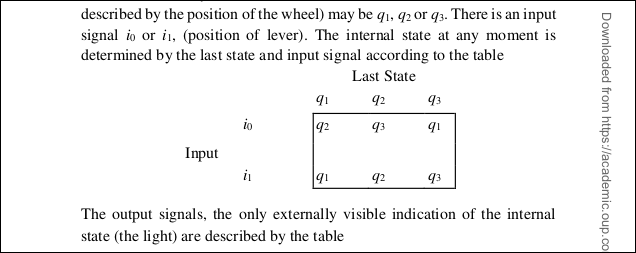

The pdftoppm utility you need should already be installed on your Linux computer. The PDF we'll use for our example is a copy of Alan Turing's seminal paper on artificial intelligence, "Computing Machinery and Intelligence."

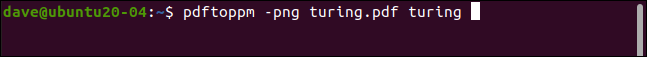

We use the -png option to specify that we want to create PNG files. The file name of our PDF is "turing.pdf." We'll call our image files "turing-01.png," "turing-02.png," and so on:

pdftoppm -png turing.pdf turing

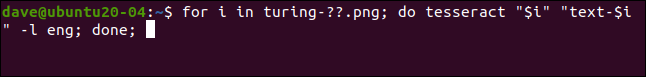

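To run tesseract on each image file using a single command, we need to use a for loop. For each of our "turing-nn.png" files, we run tesseract and create a text file whose name is "text-" followed by the image file name. Because tesseract appends ".txt" to whatever output name it's given, the text from the first page lands in "text-turing-01.png.txt":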

for i in turing-??.png; do tesseract "$i" "text-$i" -l eng; done;
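If you'd rather not have ".png" in the middle of the text file names, shell parameter expansion can strip it before the name is passed to tesseract. The following is a sketch of that variation, not a replacement for the command above. The `output_base` helper is a name we've introduced for illustration.

```shell
# Derive the output base name for one page image,
# for example "turing-01.png" becomes "text-turing-01".
# output_base is a hypothetical helper, not part of tesseract.
output_base() {
    printf 'text-%s\n' "${1%.png}"
}

for i in turing-??.png; do
    # Skip the unexpanded pattern if no page images are present.
    [ -e "$i" ] || continue
    tesseract "$i" "$(output_base "$i")" -l eng
done
```

With this version, the first page is written to "text-turing-01.txt". The names still begin with "text-turing", so a wildcard such as text-turing* continues to match them.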

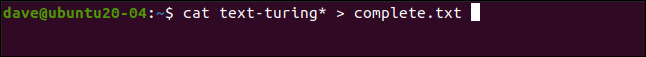

To combine all the text files into one, we can use cat:

cat text-turing* > complete.txt

So, how did it do? Very well, as you can see below. The first page looks quite challenging, though. It has different text styles and sizes, and decoration. There's also a vertical "watermark" on the right edge of the page.

However, the output is close to the original. Obviously, the formatting was lost, but the text is correct.

The vertical watermark was transcribed as a line of gibberish at the bottom of the page. The text was too small to be read by tesseract accurately, but it would be easy enough to find and delete it. The worst result would have been stray characters at the end of each line.

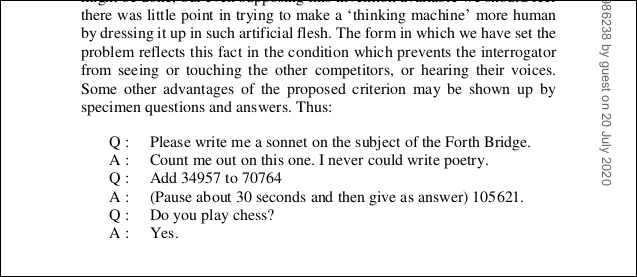

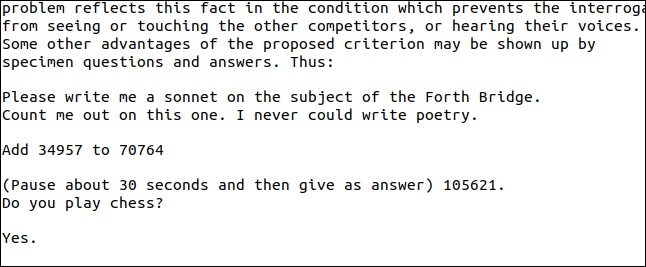

Curiously, the single letters at the start of the list of questions and answers on page two have been ignored. The section from the PDF is shown below.

As you can see below, the questions remain, but the "Q" and "A" at the start of each line were lost.

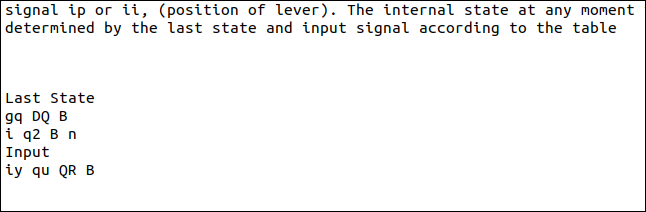

Diagrams also won't be transcribed correctly. Let's look at what happens when we try to extract the one shown below from the Turing PDF.

As you can see in our result below, the characters were read, but the format of the diagram was lost.

Again, tesseract struggled with the small size of the subscripts, and they were rendered incorrectly.

In fairness, though, it was still a good result. We weren't able to extract straightforward text, but then, this example was deliberately chosen because it presented a challenge.

A Good Solution When You Need It

OCR isn't something you'll need to use daily. However, when the need does arise, it's good to know you have one of the best OCR engines at your disposal.