There are lots of tips out there for tweaking your SSD in Linux and lots of anecdotal reports on what works and what doesn't. We ran our own benchmarks with a few specific tweaks to show you the real difference.

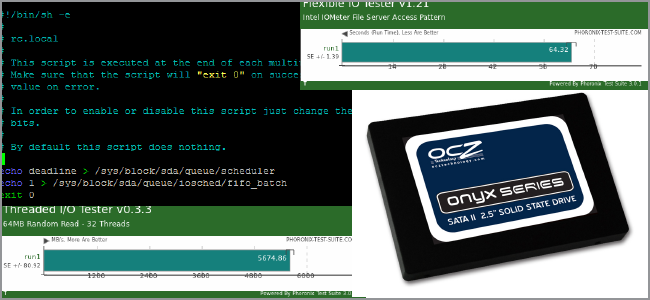

Benchmarks

To benchmark our disk, we used the Phoronix Test Suite. It's free and has a repository for Ubuntu so you don't have to compile from scratch to run quick tests. We tested our system right after a fresh installation of Ubuntu Natty 64-bit using the default parameters for the ext4 file system.

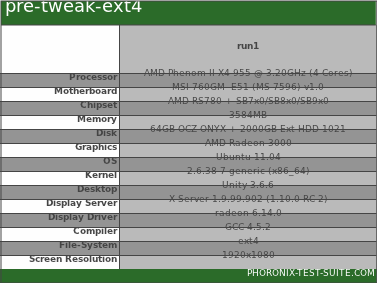

Our system specs were as follows:

- AMD Phenom II quad-core @ 3.2 GHz

- MSI 760GM E51 motherboard

- 3.5 GB RAM

- AMD Radeon 3000 integrated w/ 512MB RAM

- Ubuntu Natty

And, of course, the SSD we used to test on was a 64GB OCZ Onyx drive ($117 on Amazon.com at time of writing).

Prominent Tweaks

There are quite a few changes that people recommend when upgrading to an SSD. After filtering out some of the older stuff, we made a short list of tweaks that Linux distros have not included as defaults for SSDs. Three of them involve editing your fstab file, so before you continue, back it up with the following command:

sudo cp /etc/fstab /etc/fstab.bak

If something goes wrong, you can always replace the new fstab file with a copy of your backup. If you don't know what fstab is or you want to brush up on how it works, take a look at HTG Explains: What is the Linux fstab and How Does It Work?
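As a minimal sketch of that recovery step, the helper below copies a ".bak" file back over the original. The function name restore_from_backup is our own invention for illustration, and it assumes the backup sits next to the original with a .bak suffix, as in the command above.

```shell
#!/bin/sh
# restore_from_backup: hypothetical helper (not a standard tool) that
# copies "<file>.bak" back over "<file>".
restore_from_backup() {
    target="$1"             # e.g. /etc/fstab
    backup="${target}.bak"  # e.g. /etc/fstab.bak, created by the backup command
    if [ ! -f "$backup" ]; then
        echo "no backup found at $backup" >&2
        return 1
    fi
    cp "$backup" "$target"
}
```

On a real system the file belongs to root, so the equivalent one-liner is sudo cp /etc/fstab.bak /etc/fstab.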

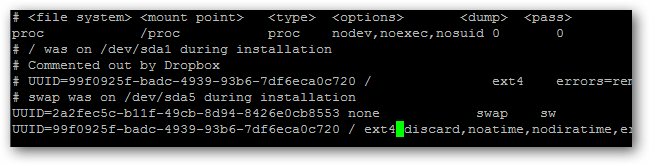

Eschewing Access Times

You can help increase the life of your SSD by reducing how much the OS writes to disk. If you don't need to know when each file or directory was last accessed, you can add these two options to your /etc/fstab file:

noatime,nodiratime

Add them alongside the existing options for the partition in question, and make sure all the options are separated by commas with no spaces.
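To make the placement concrete, here is a small sketch that appends options to the fourth (options) field of an fstab entry. The helper name add_mount_opts and the sample entry are made up for illustration, and the UUID is a placeholder; your own fstab line will look different.

```shell
#!/bin/sh
# add_mount_opts: hypothetical helper that reads an fstab on stdin and
# prints it with extra options appended to the entry for one mount point.
# Note: awk re-joins the fields of the edited line with single spaces.
add_mount_opts() {
    mount_point="$1"   # e.g. /
    extra_opts="$2"    # e.g. noatime,nodiratime
    awk -v mp="$mount_point" -v extra="$extra_opts" '
        # fstab fields: device, mount point, type, options, dump, pass.
        # Comment lines are left alone.
        $0 !~ /^[ \t]*#/ && $2 == mp { $4 = $4 "," extra }
        { print }
    '
}

# Made-up sample entry; the UUID is a placeholder, not a real device.
echo 'UUID=0000-PLACEHOLDER / ext4 errors=remount-ro 0 1' |
    add_mount_opts / noatime,nodiratime
# prints: UUID=0000-PLACEHOLDER / ext4 errors=remount-ro,noatime,nodiratime 0 1
```

The sketch only prints the result, so you can inspect the line before copying it into /etc/fstab by hand.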

Enabling TRIM

You can enable TRIM to help maintain disk performance over the long term. Add the following option to your fstab file:

discard

This works well for ext4 file systems, even on standard hard drives. You need kernel version 2.6.33 or later; you're covered if you're using Maverick or Natty, or have backports enabled on Lucid. While this doesn't specifically improve initial benchmark results, it should make the system perform better in the long run, so it made our list.
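If you're not sure which kernel you're running, a quick check along these lines may help. This is a sketch only: kernel_at_least is a hypothetical helper name, and it assumes GNU sort with the -V (version sort) option is available.

```shell
#!/bin/sh
# kernel_at_least: hypothetical helper. Succeeds if the kernel version
# (second argument, default: output of uname -r) is at least the
# required version (first argument).
kernel_at_least() {
    required="$1"
    current="${2:-$(uname -r)}"
    # sort -V orders version strings; if the required version sorts
    # first (or ties), the current kernel is new enough.
    lowest=$(printf '%s\n%s\n' "$required" "$current" | sort -V | head -n 1)
    [ "$lowest" = "$required" ]
}

if kernel_at_least 2.6.33; then
    echo "kernel is new enough for the discard option"
else
    echo "kernel is older than 2.6.33"
fi
```

The drive itself also has to support TRIM. Running sudo hdparm -I /dev/sdX and looking for a line mentioning TRIM is one common way to check.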

Tmpfs

Temporary files are stored in /tmp. We can use fstab to mount this directory in RAM as a temporary file system, so your system will touch the disk less. Add the following as a new line at the bottom of your /etc/fstab file:

tmpfs /tmp tmpfs defaults,noatime,mode=1777 0 0

Save your fstab file to commit these changes.
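Once the system has been rebooted, you may want to confirm that /tmp really is a tmpfs mount. The sketch below reads the kernel's mount table to find out; fs_type_of is a hypothetical helper name, and the second argument exists only so the function can be pointed at a sample file instead of /proc/mounts.

```shell
#!/bin/sh
# fs_type_of: hypothetical helper that prints the file system type
# mounted at a given mount point, according to a mounts table.
fs_type_of() {
    mount_point="$1"
    mounts_file="${2:-/proc/mounts}"
    # Fields in /proc/mounts: device, mount point, type, options, ...
    # The last matching line wins, since later mounts shadow earlier ones.
    awk -v mp="$mount_point" '
        $2 == mp { t = $3 }
        END { if (t != "") print t }
    ' "$mounts_file"
}
```

Calling fs_type_of /tmp should print tmpfs if the new fstab entry took effect.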

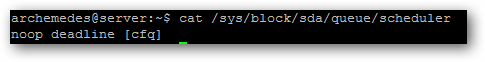

Switching IO Schedulers

Your system doesn't write all changes to disk immediately, and multiple requests get queued. The default input-output scheduler -- cfq -- handles this okay, but we can change this to one that works better for our hardware.

First, list which options you have available with the following command, replacing "X" with the letter of your root drive:

cat /sys/block/sdX/queue/scheduler

Our installation is on sda. You should see a few different options.

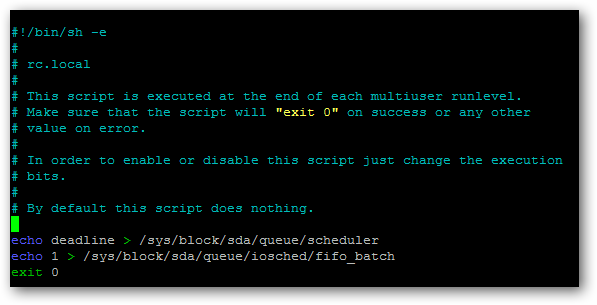
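In that output, the kernel marks the scheduler currently in use with square brackets. As a small sketch, the hypothetical helper below (active_scheduler is our own name) pulls out just the bracketed entry:

```shell
#!/bin/sh
# active_scheduler: hypothetical helper that reads the contents of a
# /sys/block/sdX/queue/scheduler file on stdin and prints the entry
# shown in square brackets, which is the scheduler currently in use.
active_scheduler() {
    sed -n 's/.*\[\([^]]*\)].*/\1/p'
}

# Sample line in the format the kernel uses; your list may differ.
echo 'noop deadline [cfq]' | active_scheduler   # prints: cfq
```

On a real system you would feed it the file directly, for example active_scheduler < /sys/block/sda/queue/scheduler.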

If you have deadline, you should use that, as it gives you an extra tweak further down the line. If not, you should be able to use noop without problems. We need to tell the OS to use these options after every boot so we'll need to edit the rc.local file.

We'll use nano, since we're comfortable with the command-line, but you can use any other text editor you like (gedit, vim, etc.).

sudo nano /etc/rc.local

Above the "exit 0" line, add these two lines if you're using deadline:

echo deadline > /sys/block/sdX/queue/scheduler

echo 1 > /sys/block/sdX/queue/iosched/fifo_batch

If you're using noop, add this line:

echo noop > /sys/block/sdX/queue/scheduler

Once again, replace "X" with the appropriate drive letter for your installation. Look over everything to make sure it looks good.
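If you'd rather not hard-code the choice, the same logic can be sketched as a small function that prefers deadline and falls back to noop. The name set_ssd_scheduler is hypothetical; it takes the queue directory as an argument (normally /sys/block/sdX/queue) and assumes it runs as root, as rc.local does.

```shell
#!/bin/sh
# set_ssd_scheduler: hypothetical helper. Picks deadline when the kernel
# offers it, otherwise noop. Argument: the queue directory, normally
# /sys/block/sdX/queue with X replaced by your drive letter.
set_ssd_scheduler() {
    queue_dir="$1"
    # Strip the brackets that mark the active scheduler, then pad with
    # spaces so whole names can be matched.
    available=" $(sed 's/[][]//g' "$queue_dir/scheduler") "
    case "$available" in
        *" deadline "*)
            echo deadline > "$queue_dir/scheduler"
            # The extra deadline tweak described above.
            echo 1 > "$queue_dir/iosched/fifo_batch"
            ;;
        *" noop "*)
            echo noop > "$queue_dir/scheduler"
            ;;
        *)
            echo "neither deadline nor noop is offered:$available" >&2
            return 1
            ;;
    esac
}
```

In rc.local, you would paste the function above the "exit 0" line and follow it with a call such as set_ssd_scheduler /sys/block/sdX/queue.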

Then hit CTRL+O to save and CTRL+X to quit.

Restart

In order for all these changes to go into effect, you need to restart. After that, you should be all set. If something goes wrong and you can't boot, you can systematically undo each of the above steps until you can boot again. You can even use a LiveCD or LiveUSB to recover if you want.

Your fstab changes will carry through the life of your installation, even withstanding upgrades, but your rc.local change will have to be re-instituted after every upgrade (between versions).

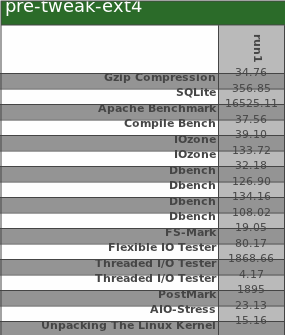

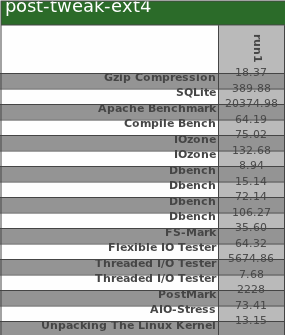

Benchmarking Results

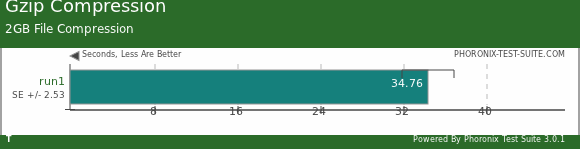

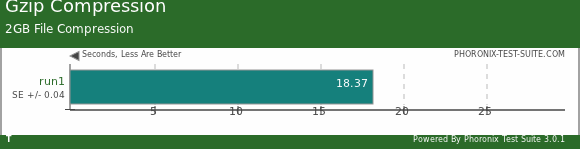

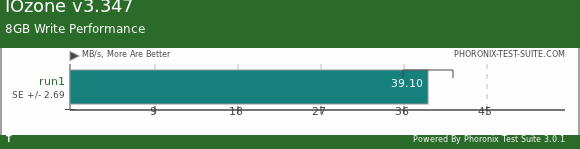

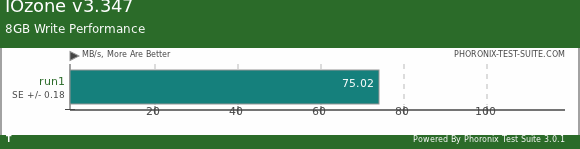

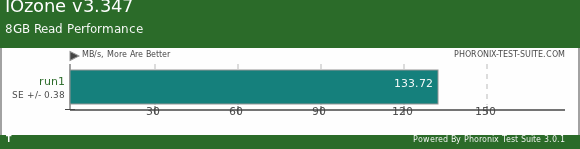

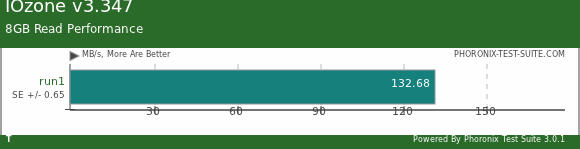

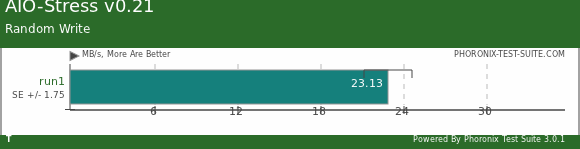

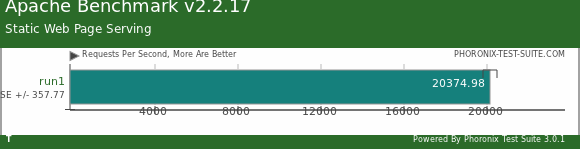

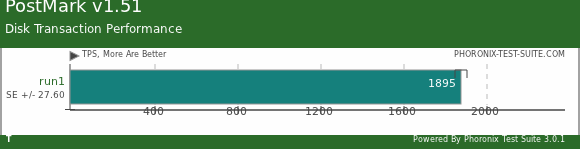

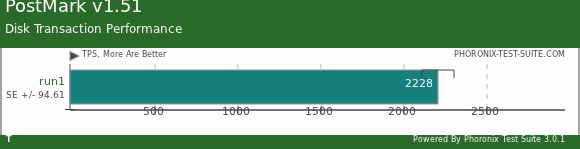

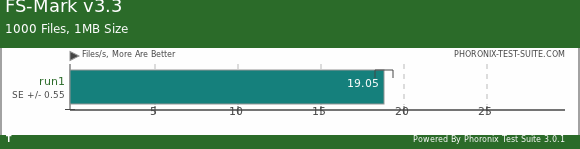

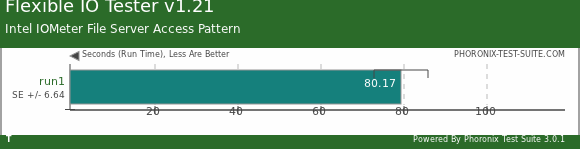

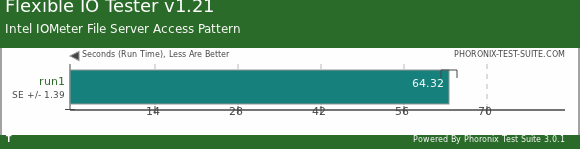

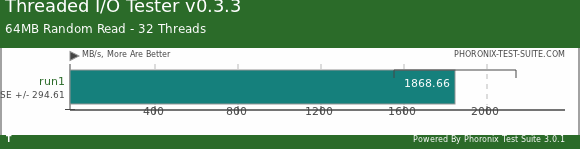

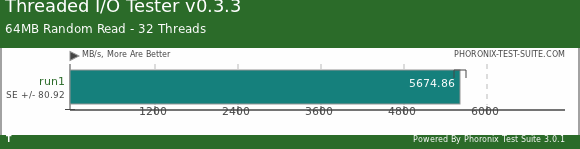

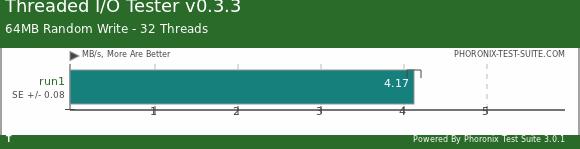

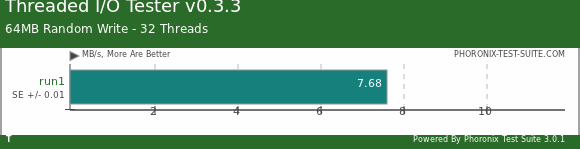

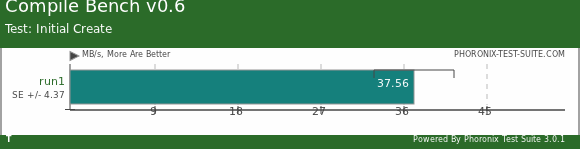

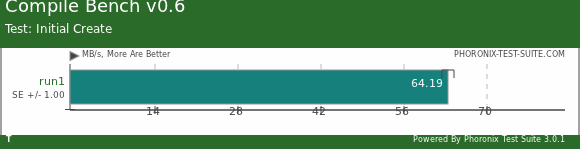

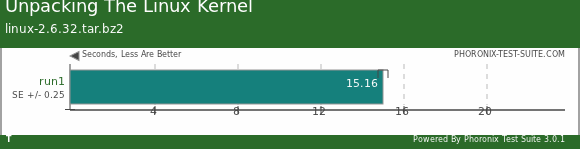

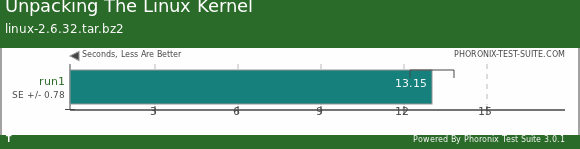

To perform the benchmarks, we ran the disk suite of tests. The top image of each test is before tweaking the ext4 configuration, and the bottom image is after the tweaks and a reboot. You'll see a brief explanation of what the test measures as well as an interpretation of the results.
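The percentages quoted in the interpretations are relative changes between the before and after scores. As a sketch of that arithmetic, the hypothetical helper below computes the change; the numbers in the example are made up purely for illustration and are not taken from our results.

```shell
#!/bin/sh
# percent_change: hypothetical helper that prints the relative change
# from a "before" score to an "after" score, as a percentage.
percent_change() {
    before="$1"
    after="$2"
    awk -v b="$before" -v a="$after" \
        'BEGIN { printf "%.1f\n", (a - b) / b * 100 }'
}

# Made-up scores, not our benchmark data: 100 before, 140 after.
percent_change 100 140   # prints: 40.0
```

For tests that report throughput, a positive number is an improvement. For tests that report elapsed time, lower is better, so a negative number is the improvement.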

Large File Operations

This test compresses a 2GB file with random data and writes it to disk. The SSD tweaks here show a roughly 40% improvement.

IOzone simulates file system performance, in this case by writing an 8GB file. Again, we see an almost 50% increase.

Here, an 8GB file is read. The results are almost the same as without adjusting ext4.

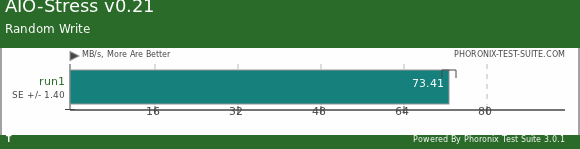

AIO-Stress asynchronously tests input and output, using a 2GB test file and a 64KB record size. Here, there's almost a 200% increase in performance compared to vanilla ext4!

Small File Operations

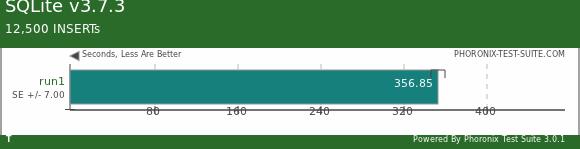

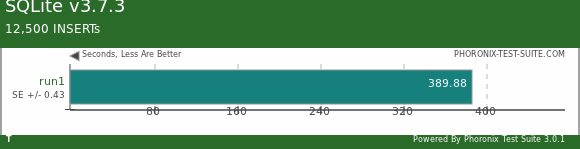

An SQLite database is created and PTS adds 12,500 records to it. The SSD tweaks here actually slowed down performance by about 10%.

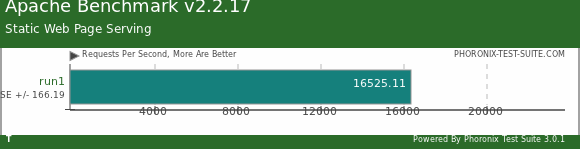

The Apache Benchmark tests random reads of small files. There was about a 25% performance gain after optimizing our SSD.

PostMark simulates 25,000 file transactions, 500 simultaneously at any given time, with file sizes between 5 and 512KB. This simulates web and mail servers pretty well, and we see a 16% performance increase after tweaking.

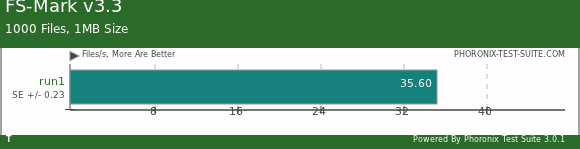

FS-Mark looks at 1000 files with a total size of 1MB, and measures how many can be completely written and read in a pre-ordained amount of time. Our tweaks again show a gain with smaller file sizes: about a 45% increase with the ext4 adjustments.

File System Access

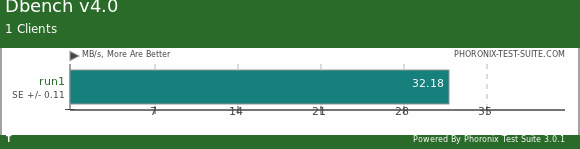

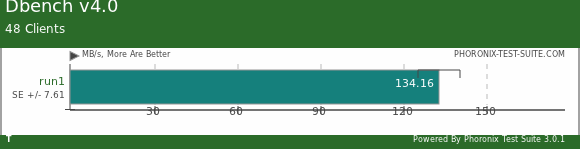

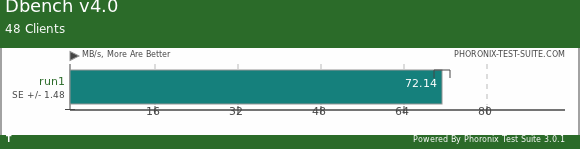

The Dbench benchmarks test file system calls by clients, sort of like how Samba does things. Here, our tweaks cut performance by 75% compared to vanilla ext4, a major setback.

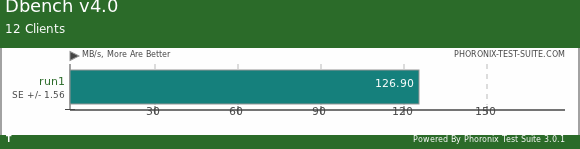

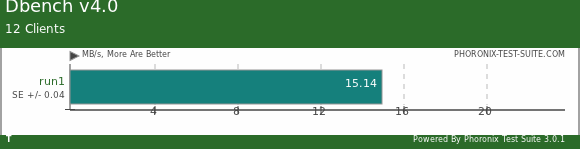

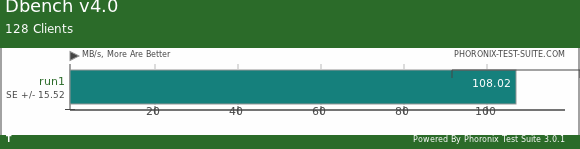

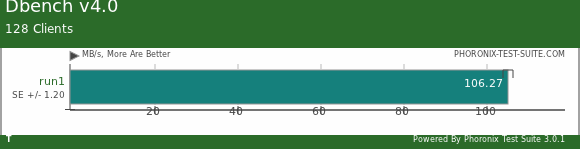

You can see that as the number of clients goes up, the performance discrepancy increases.

With 48 clients, the gap closed somewhat between the two, but there's still a very obvious performance loss from our tweaks.

With 128 clients, the performance is almost the same. You can reason that our tweaks may not be ideal for home use in this kind of operation, but will provide comparable performance when the number of clients is greatly increased.

This test depends on the kernel's AIO access library. We've got a 20% improvement here.

Here, we have a multi-threaded random read of 64MB, and there's a 200% increase in performance. Wow!

While writing 64MB of data with 32 threads, we still have a 75% increase in performance.

Compile Bench simulates the effect of age on a file system as represented by manipulating kernel trees (creating, compiling, patching, etc.). Here, you can see a significant benefit through the initial creation of the simulated kernel, about 40%.

This benchmark simply measures how long it takes to extract the Linux kernel. Not too much of an increase in performance here.

Summary

The adjustments we made to Ubuntu's out-of-the-box ext4 configuration did have quite an impact. The biggest performance gains were in the realms of multi-threaded writes and reads, small file reads, and large contiguous file reads and writes. In fact, the only real place we saw a hit in performance was in simple file system calls, something Samba users should watch out for. Overall, it seems to be a pretty solid increase in performance for things like hosting webpages and watching/streaming large videos.

Keep in mind that this was specifically with Ubuntu Natty 64-bit. If your system or SSD is different, your mileage may vary. Overall though, it seems as though the fstab and IO scheduler adjustments we made go a long way toward better performance, so it's probably worth a try on your own rig.

Have your own benchmarks and want to share your results? Have another tweak we don't know about? Sound off in the comments!