If you have ever done much comparison shopping for a new CPU, you may have noticed that all of a processor's cores seem to run at the same speed rather than at a mix of different speeds. Why is that? Today's SuperUser Q&A post has the answer to a curious reader's question.

Today’s Question & Answer session comes to us courtesy of SuperUser—a subdivision of Stack Exchange, a community-driven grouping of Q&A web sites.

The Question

SuperUser reader Jamie wants to know why CPU cores all have the same speed instead of different ones:

In general, if you are buying a new computer, you would determine which processor to buy based on the expected workload for the computer. Performance in video games tends to be determined by single-core speed, whereas performance in applications like video editing depends more on the number of cores. In terms of what is available on the market, all CPUs seem to have roughly the same speed, with the main differences being more threads or more cores.

For example:

- Intel Core i5-7600K, base frequency 3.80 GHz, 4 cores, 4 threads

- Intel Core i7-7700K, base frequency 4.20 GHz, 4 cores, 8 threads

- AMD Ryzen 5 1600X, base frequency 3.60 GHz, 6 cores, 12 threads

- AMD Ryzen 7 1800X, base frequency 3.60 GHz, 8 cores, 16 threads
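The split between games and video editing is often explained with Amdahl's law: if only a fraction p of a program's work can run in parallel, n identical cores give a best-case speedup of 1 / ((1 - p) + p / n). The minimal Python sketch below assumes identical cores and perfect scaling of the parallel part; the parallel fractions are hypothetical values picked for illustration, not measurements of any real game or editor.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Best-case speedup over one core when only parallel_fraction of the work scales."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical parallel fractions, chosen only to illustrate the contrast.
    workloads = {"game-like (p = 0.30)": 0.30, "video-editing-like (p = 0.95)": 0.95}
    for name, p in workloads.items():
        results = ", ".join(f"{n} cores {amdahl_speedup(p, n):.2f}x" for n in (4, 6, 8))
        print(f"{name}: {results}")
```

With these made-up fractions, the game-like workload only improves from about 1.29x to 1.36x when going from four to eight cores, while the video-editing-like workload goes from about 3.48x to 5.93x.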

Why do we see this pattern of increasing core counts, yet all cores having the same clock speed? Why are there no variants with differing clock speeds, for example two "big" cores and lots of small cores?

Instead of, say, four cores at 4.0 GHz (i.e. 4x4.0 GHz, 16 GHz combined), how about a CPU with two cores running at 4.0 GHz and four cores running at 2.0 GHz (i.e. 2x4.0 GHz + 4x2.0 GHz, 16 GHz combined)? Would the second option be just as good at single-threaded workloads, but potentially better at multi-threaded workloads?
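The arithmetic in the question can be checked with a deliberately crude model in which a core's performance is proportional to its clock speed and nothing else. The Python sketch below is a hypothetical illustration: it runs the serial part of a job on the fastest core and assumes the parallel part is divided perfectly in proportion to each core's clock, ignoring architecture, caches, and memory.

```python
from typing import Sequence, Tuple


def summarize(clocks_ghz: Sequence[float]) -> Tuple[float, float]:
    """Return (combined clock, fastest single-core clock) in GHz."""
    return sum(clocks_ghz), max(clocks_ghz)


def run_time(clocks_ghz: Sequence[float], work: float, parallel_fraction: float) -> float:
    """Crude, hypothetical model: a core's speed is its clock and nothing else.

    The serial part runs on the fastest core. The parallel part is assumed to be
    divided perfectly in proportion to each core's clock, so it sees the combined clock.
    Work is in arbitrary units.
    """
    combined, fastest = summarize(clocks_ghz)
    serial_work = work * (1.0 - parallel_fraction)
    parallel_work = work * parallel_fraction
    return serial_work / fastest + parallel_work / combined


SYMMETRIC = [4.0] * 4           # 4x4.0 GHz
MIXED = [4.0] * 2 + [2.0] * 4   # 2x4.0 GHz + 4x2.0 GHz

if __name__ == "__main__":
    print("symmetric:", summarize(SYMMETRIC), "mixed:", summarize(MIXED))
    for p in (0.0, 0.5, 0.9, 1.0):
        print(f"p={p}: symmetric {run_time(SYMMETRIC, 100.0, p):.2f}, "
              f"mixed {run_time(MIXED, 100.0, p):.2f}")
```

Both configurations have 16 GHz combined and a 4.0 GHz fastest core, so under these assumptions they tie for every parallel fraction. Any real advantage for the mixed design would have to come from factors this model ignores, and the "divide perfectly by clock" assumption is exactly what software written for identical cores does not do.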

I ask this as a general question and not specifically with regard to the CPUs listed above or about any one specific workload. I am just curious as to why the pattern is what it is.

Why do CPU cores all have the same speed instead of different ones?

The Answer

SuperUser contributor bwDraco has the answer for us:

This is known as heterogeneous multi-processing (HMP) and is widely adopted by mobile devices. In ARM-based devices that implement big.LITTLE, the processor contains cores with different performance and power profiles: some cores run fast but draw lots of power (faster architecture and/or higher clocks), while others are energy-efficient but slow (slower architecture and/or lower clocks). This is useful because power usage tends to increase disproportionately as you increase performance once you get past a certain point. The idea here is to get performance when you need it and battery life when you do not.
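The "disproportionate" growth in power can be made concrete with the usual approximation for dynamic CMOS power, P ≈ C × V² × f. Near the top of a chip's range, voltage has to rise roughly in step with frequency, so power grows roughly with f³, and the energy for a fixed task (power times time) grows roughly with f². The Python sketch below applies that rule of thumb; the linear voltage-frequency relationship is a simplifying assumption, not a property of any particular chip.

```python
def relative_power(freq_ratio: float) -> float:
    """Dynamic power relative to a reference core clocked at freq_ratio == 1.0.

    Uses P ~ C * V**2 * f and assumes (simplistically) that V scales linearly with f.
    """
    if freq_ratio <= 0:
        raise ValueError("freq_ratio must be positive")
    voltage_ratio = freq_ratio  # simplifying assumption: V proportional to f
    return voltage_ratio ** 2 * freq_ratio


def relative_energy_per_task(freq_ratio: float) -> float:
    """Energy for a fixed amount of work: power times run time, with time ~ 1 / f."""
    return relative_power(freq_ratio) / freq_ratio


if __name__ == "__main__":
    for ratio in (0.5, 0.75, 1.0):
        print(f"{ratio:.2f}x clock -> {relative_power(ratio):.3f}x power, "
              f"{relative_energy_per_task(ratio):.3f}x energy per task")
```

Under this model, a core clocked at half speed draws about one-eighth the power and uses about one-quarter the energy per task. Real chips cannot lower voltage below a certain floor, so the savings flatten out at low clocks.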

On desktop platforms, power consumption is much less of an issue, so this is not truly necessary. Most applications expect each core to have similar performance characteristics, and scheduling processes for HMP systems is much more complex than scheduling for traditional symmetric multi-processing (SMP) systems (technically, Windows 10 has support for HMP, but it is mainly intended for mobile devices that use ARM big.LITTLE).
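To see why heterogeneous scheduling is harder, compare two toy placement policies. Neither is how Windows or any real operating system schedules threads; both are hypothetical illustrations. Round-robin placement treats every core as identical, while the earliest-finish greedy policy has to know each core's relative speed. The core speeds are relative numbers (2.0 standing in for a 4.0 GHz core and 1.0 for a 2.0 GHz core), and each task is one unit of work.

```python
from typing import Sequence


def round_robin_makespan(task_costs: Sequence[float], core_speeds: Sequence[float]) -> float:
    """Toy policy: hand tasks to cores in turn, as if every core were identical."""
    busy = [0.0] * len(core_speeds)
    for i, cost in enumerate(task_costs):
        core = i % len(core_speeds)
        busy[core] += cost / core_speeds[core]
    return max(busy)  # time at which the last core finishes


def earliest_finish_makespan(task_costs: Sequence[float], core_speeds: Sequence[float]) -> float:
    """Toy speed-aware policy: put each task on the core that would finish it soonest."""
    busy = [0.0] * len(core_speeds)
    # Placing the largest tasks first usually gives the greedy packing a better result.
    for cost in sorted(task_costs, reverse=True):
        core = min(range(len(core_speeds)), key=lambda c: busy[c] + cost / core_speeds[c])
        busy[core] += cost / core_speeds[core]
    return max(busy)


if __name__ == "__main__":
    tasks = [1.0] * 16                      # 16 equal, hypothetical units of work
    mixed = [2.0, 2.0, 1.0, 1.0, 1.0, 1.0]  # 2 fast + 4 slow cores (relative speeds)
    symmetric = [2.0] * 4                   # 4 fast cores
    for name, speeds in (("mixed", mixed), ("symmetric", symmetric)):
        print(f"{name}: round-robin {round_robin_makespan(tasks, speeds):.1f}, "
              f"earliest-finish {earliest_finish_makespan(tasks, speeds):.1f}")
```

On the hypothetical mixed chip, the 16 tasks finish at 3.0 time units with round-robin but at 2.0 with the speed-aware policy; on four identical fast cores, both policies finish at 2.0. The scheduler only needs the extra information when the cores differ.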

Also, most desktop and laptop processors today are not thermally or electrically limited to the point where some cores need to run faster than others, even for short bursts. We have basically hit a wall on how fast we can make individual cores, so replacing some cores with slower ones will not allow the remaining cores to run faster.

While there are a few desktop processors that have one or two cores capable of running faster than the others, this capability (known as Turbo Boost Max Technology 3.0) is currently limited to certain very high-end Intel processors and only provides a slight performance gain on the cores that can run faster.
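On Linux, one way to look for cores that are allowed to run faster than the rest is to compare the per-CPU `cpuinfo_max_freq` values (in kHz) that the cpufreq subsystem exposes under `/sys/devices/system/cpu`. The Python sketch below assumes that sysfs layout; whether the favored cores on a Turbo Boost Max Technology 3.0 processor actually report a higher value depends on the kernel and frequency driver, so on many systems every core will show the same number.

```python
from pathlib import Path
from typing import Dict, List


def max_freq_by_cpu(sysfs_root: str = "/sys/devices/system/cpu") -> Dict[int, int]:
    """Map each logical CPU number to its reported maximum frequency in kHz.

    Assumes the Linux cpufreq sysfs layout: cpuN/cpufreq/cpuinfo_max_freq.
    """
    freqs: Dict[int, int] = {}
    for freq_file in Path(sysfs_root).glob("cpu[0-9]*/cpufreq/cpuinfo_max_freq"):
        cpu_dir = freq_file.parent.parent.name  # e.g. "cpu3"
        freqs[int(cpu_dir[len("cpu"):])] = int(freq_file.read_text().strip())
    return freqs


def fastest_cpus(freqs: Dict[int, int]) -> List[int]:
    """Return the CPUs whose reported maximum equals the highest value found."""
    if not freqs:
        return []
    top = max(freqs.values())
    return sorted(cpu for cpu, khz in freqs.items() if khz == top)


if __name__ == "__main__":
    freqs = max_freq_by_cpu()
    if not freqs:
        print("No cpufreq data found (not Linux, or no cpufreq driver loaded).")
    elif len(set(freqs.values())) == 1:
        print("All cores report the same maximum frequency.")
    else:
        print("Highest-clocked CPUs:", fastest_cpus(freqs))
```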

While it is certainly possible to design a traditional x86 processor with both large, fast cores and smaller, slower cores to optimize for heavily threaded workloads, doing so would add considerable complexity to the processor design, and applications are unlikely to support it properly.

Take a hypothetical processor with two fast Kaby Lake (7th-generation) cores and eight slow Goldmont (Atom) cores. You would have a total of 10 cores, and heavily threaded workloads optimized for this kind of processor may see a gain in performance and efficiency over a normal quad-core Kaby Lake processor. However, the different types of cores have wildly different performance levels, and the slow cores do not even support some of the instructions the fast cores support, like AVX (ARM avoids this issue by requiring both the big and LITTLE cores to support the same instructions).
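On Linux x86 systems, `/proc/cpuinfo` lists a `flags` line for every logical processor (ARM kernels use a `Features` line instead), so an instruction-set mismatch like the one described above would show up as flags that only some processors report. The Python sketch below parses that format; the sample input is hypothetical (one fast core with AVX and one slow core without), since on a conventional symmetric processor every entry lists the same flags.

```python
from typing import Dict, Optional, Set

# Hypothetical sample: processor 0 supports AVX/AVX2, processor 1 does not.
SAMPLE_CPUINFO = """processor\t: 0
flags\t\t: fpu sse2 avx avx2

processor\t: 1
flags\t\t: fpu sse2
"""


def flags_by_processor(cpuinfo_text: str) -> Dict[int, Set[str]]:
    """Parse /proc/cpuinfo-style text into {processor number: set of flags}."""
    result: Dict[int, Set[str]] = {}
    current: Optional[int] = None
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank separator lines between processors
        key = key.strip()
        if key == "processor":
            current = int(value)
        elif key == "flags" and current is not None:
            result[current] = set(value.split())
    return result


def non_common_flags(flags: Dict[int, Set[str]]) -> Set[str]:
    """Flags that some, but not all, processors report."""
    if not flags:
        return set()
    sets = list(flags.values())
    return set.union(*sets) - set.intersection(*sets)


if __name__ == "__main__":
    print(sorted(non_common_flags(flags_by_processor(SAMPLE_CPUINFO))))
```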

Again, most Windows-based multi-threaded applications assume that every core has the same or nearly the same level of performance and can execute the same instructions, so this kind of asymmetry is likely to result in less-than-ideal performance, perhaps even crashes if an application uses instructions not supported by the slower cores. While Intel could modify the slow cores to add advanced instruction support so that all cores can execute all instructions, this would not resolve issues with software support for heterogeneous processors.
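The performance side of this can be sketched with a parallel loop split across the hypothetical 2 fast + 8 slow processor. The Python below assumes, purely for illustration, that a fast core does three times as much work per unit time as a slow core. Code written for identical cores often hands every thread an equal share, while heterogeneity-aware code would size each share by core speed.

```python
from typing import List, Sequence


def equal_split(items: int, cores: int) -> List[int]:
    """Divide items as evenly as possible, as code written for identical cores often does."""
    base, extra = divmod(items, cores)
    return [base + (1 if i < extra else 0) for i in range(cores)]


def proportional_split(items: int, speeds: Sequence[float]) -> List[int]:
    """Divide items in proportion to each core's relative speed (largest-remainder rounding)."""
    total = sum(speeds)
    exact = [items * s / total for s in speeds]
    shares = [int(x) for x in exact]
    # Hand out the items lost to rounding, largest fractional remainder first.
    leftovers = sorted(range(len(speeds)), key=lambda i: exact[i] - shares[i], reverse=True)
    for i in leftovers[: items - sum(shares)]:
        shares[i] += 1
    return shares


def finish_time(shares: Sequence[int], speeds: Sequence[float]) -> float:
    """Time until the last core is done, with one item equal to one unit of work."""
    return max(n / s for n, s in zip(shares, speeds))


if __name__ == "__main__":
    speeds = [3.0, 3.0] + [1.0] * 8  # hypothetical: fast core = 3x a slow core
    items = 140
    for name, shares in (("equal", equal_split(items, len(speeds))),
                         ("proportional", proportional_split(items, speeds))):
        print(f"{name:>12} split {shares}: finishes at t = {finish_time(shares, speeds):.2f}")
```

With 140 equal work items, the equal split finishes at 14.0 time units because the slow cores hold everyone up, while the proportional split finishes at 10.0. For comparison, four of the fast cores alone would take 35 / 3, or about 11.7 units, so under these assumptions the mixed chip only comes out ahead when the software accounts for the difference.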

A different approach to application design, closer to what you are probably thinking about in your question, would use the GPU to accelerate the highly parallel portions of applications. This can be done using APIs like OpenCL and CUDA. As for a single-chip solution, AMD promotes hardware support for GPU acceleration in its APUs (which combine a traditional CPU and a high-performance integrated GPU on the same chip) under the name Heterogeneous System Architecture, though this has not seen much industry uptake outside of a few specialized applications.

Have something to add to the explanation? Sound off in the comments. Want to read more answers from other tech-savvy Stack Exchange users? Check out the full discussion thread here.

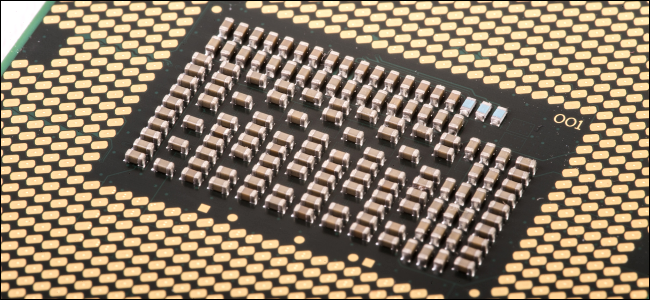

Image Credit: Mirko Waltermann (Flickr)