Compressing our files makes them easier to share and transport, but sometimes the resulting sizes are odd or unexpected. Why is that? Today's SuperUser Q&A post has the answers to a confused reader's questions.

Today’s Question & Answer session comes to us courtesy of SuperUser—a subdivision of Stack Exchange, a community-driven grouping of Q&A web sites.

Photo courtesy of Jean-Etienne Minh-Duy Poirrier (Flickr).

The Question

SuperUser reader sixtyfootersdude wants to know why zip is able to compress single files better than multiple files with the same type of content:

Suppose that I have 10,000 XML files and want to send them to a friend. Before sending them, I would like to compress them.

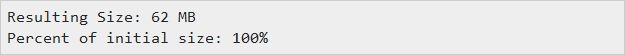

Method 1: Do Not Compress Them

Results:

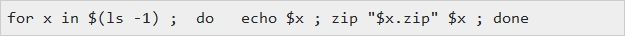

Method 2: Zip Every File Separately and Send Him 10,000 Zipped XML Files

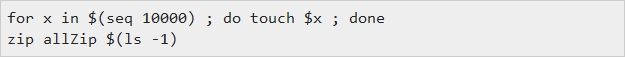

Command:

Results:

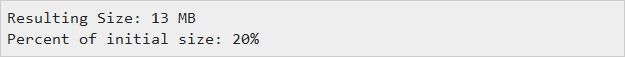

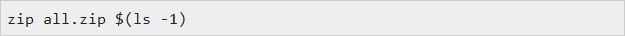

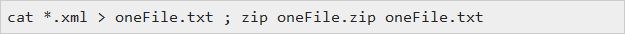

Method 3: Create a Single Zip File Containing All 10,000 XML Files

Command:

Results:

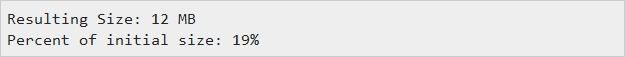

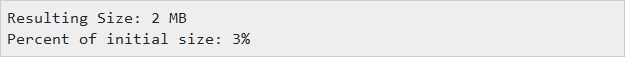

Method 4: Concatenate the Files Into a Single File and Zip It

Command:

Results:

Questions

- Why do I get such dramatically better results when I am just zipping a single file?

- I was expecting to get drastically better results using method 3 rather than method 2, but I do not. Why is this?

- Is this behaviour specific to zip? If I tried using Gzip, would I get different results?

Additional Info

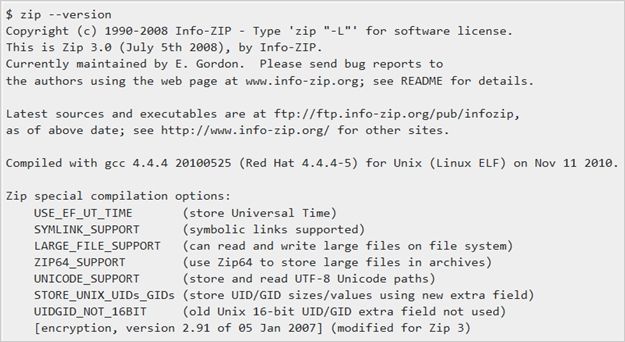

Metadata

One of the answers given suggests that the difference is the system metadata that is stored in the zip file. I do not believe that this can be the case. To test it, I did the following:

The resulting zip file is 1.4 MB. This means that there is still approximately 10 MB of unexplained space.

Why is zip able to compress single files better than multiple files with the same type of content?

The Answer

SuperUser contributors Alan Shutko and Aganju have the answer for us. First up, Alan Shutko:

Zip compression is based on repetitive patterns in the data to be compressed, and the compression gets better the longer the file is, as more and longer patterns can be found and used.

Simplified, if you compress each file separately, the dictionary that maps (short) codes to (longer) patterns must be rebuilt and stored in every resulting zip file; if you zip one long file, the dictionary is 'reused' and grows even more effective across all content.

If your files are even a bit similar (as text usually is), reuse of the 'dictionary' becomes very efficient and the result is a much smaller total zip file.
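The effect described above can be reproduced on a small scale. The following is a minimal sketch, not the reader's actual experiment: it assumes Python's `zlib` module (which implements DEFLATE, the method zip typically uses) as a stand-in for zip, and `make_xml` is a hypothetical helper that generates small, similar XML documents.

```python
import zlib


def make_xml(i: int) -> bytes:
    """Hypothetical small XML document; only the numbers differ per file."""
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        f'<record><id>{i}</id><content>'
        f'<element name="value">{i * 7}</element>'
        '</content></record>\n'
    ).encode("utf-8")


# 1,000 similar files, held in memory for simplicity.
files = [make_xml(i) for i in range(1000)]

# Total size with no compression at all.
raw_total = sum(len(data) for data in files)

# Each file compressed on its own: every stream starts with no history.
separate_total = sum(len(zlib.compress(data)) for data in files)

# All files concatenated and compressed as one stream: patterns seen in
# earlier files can be referenced again by later ones.
combined_total = len(zlib.compress(b"".join(files)))

print(f"uncompressed:          {raw_total} bytes")
print(f"compressed separately: {separate_total} bytes")
print(f"compressed together:   {combined_total} bytes")
```

The exact numbers depend on the generated content, but the single concatenated stream should come out far smaller than the sum of the separately compressed files.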

Followed by the answer from Aganju:

In zip, each file is compressed separately. The opposite is solid compression, in which files are compressed together. 7-Zip uses solid compression by default for its 7z format, and RAR supports it as an option. Gzip and Bzip2 cannot compress multiple files, so Tar is used first to bundle them into a single stream, which has the same effect as solid compression.

As XML files have similar structure (and probably similar content), the compression ratio will be higher if the files are compressed together.
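To compare the two approaches directly, here is a rough sketch using Python's standard `zipfile` and `tarfile` modules. The file names and the `make_xml` helper are made up for illustration, and everything is built in memory rather than on disk.

```python
import io
import tarfile
import zipfile


def make_xml(i: int) -> bytes:
    """Hypothetical small XML document; only the numbers differ per file."""
    return (
        f'<record><id>{i}</id><content>'
        f'<element name="value">{i * 7}</element>'
        '</content></record>\n'
    ).encode("utf-8")


# Illustrative file names mapped to their contents.
files = {f"file_{i:05d}.xml": make_xml(i) for i in range(1000)}

# Zip archive: each member is deflated independently of the others.
zip_buf = io.BytesIO()
with zipfile.ZipFile(zip_buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
    for name, data in files.items():
        zf.writestr(name, data)

# Tar archive wrapped in gzip: the members are bundled first, then the
# whole bundle is compressed as a single stream.
tar_buf = io.BytesIO()
with tarfile.open(fileobj=tar_buf, mode="w:gz") as tf:
    for name, data in files.items():
        info = tarfile.TarInfo(name=name)
        info.size = len(data)
        tf.addfile(info, io.BytesIO(data))

zip_size = len(zip_buf.getvalue())
tar_gz_size = len(tar_buf.getvalue())

print(f"zip (per-file compression):  {zip_size} bytes")
print(f"tar.gz (single stream):      {tar_gz_size} bytes")
```

With many small, similar files, the tar.gz result should be noticeably smaller, because zip also stores a header and a central directory entry for every member in addition to compressing each one from scratch.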

For example, if a file contains the string "<content><element name=" and the compressor has already found that string in another file, it will replace it with a small pointer to the previous match. If the compressor does not use solid compression, the first occurrence of the string in the file will be recorded as a literal, which is larger.
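The pointer-versus-literal difference can be approximated with zlib's preset dictionary feature. This is only a simulation of solid compression under stated assumptions: `previous_file` and `current_file` are invented samples, and priming the compressor with `zdict` stands in for "the compressor has already seen another file".

```python
import zlib

# Two invented files that share the markup mentioned in the example above.
previous_file = b'<content><element name="alpha">1</element></content>'
current_file = b'<content><element name="beta">2</element></content>'

# Non-solid: the compressor starts with no history, so the first
# occurrence of the shared markup has to be stored as literals.
alone = zlib.compress(current_file)

# Simulated solid: the compressor is primed with the previous file, so the
# shared markup can be encoded as short back-references.
compressor = zlib.compressobj(zdict=previous_file)
primed = compressor.compress(current_file) + compressor.flush()

# Decompression needs the same dictionary, just as a solid archive needs
# the earlier files in order to decode the later ones.
decompressor = zlib.decompressobj(zdict=previous_file)
restored = decompressor.decompress(primed) + decompressor.flush()

print(f"compressed alone:        {len(alone)} bytes")
print(f"compressed with history: {len(primed)} bytes")
print(f"round trip OK:           {restored == current_file}")
```

The trade-off is visible in the last step: data compressed against earlier content cannot be decoded without that earlier content, which is why extracting one file from a solid archive can require processing the files before it.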

Have something to add to the explanation? Sound off in the comments. Want to read more answers from other tech-savvy Stack Exchange users? Check out the full discussion thread here.