With all the great hardware we have available these days, it seems we should be enjoying great quality viewing no matter what, but what if that is not the case? Today's SuperUser Q&A post seeks to clear things up for a confused reader.

Today’s Question & Answer session comes to us courtesy of SuperUser—a subdivision of Stack Exchange, a community-driven grouping of Q&A web sites.

Photo courtesy of lge (Flickr).

The Question

SuperUser reader alkamid wants to know why there is a noticeable difference in quality between HDMI-DVI and VGA:

I have a Dell U2312HM monitor connected to a Dell Latitude E7440 laptop. When I connect them via laptop -> HDMI cable -> HDMI-DVI adaptor -> monitor (the monitor does not have an HDMI socket), the image is much sharper than if I connect via laptop -> miniDisplayPort-VGA adaptor -> VGA cable -> monitor.

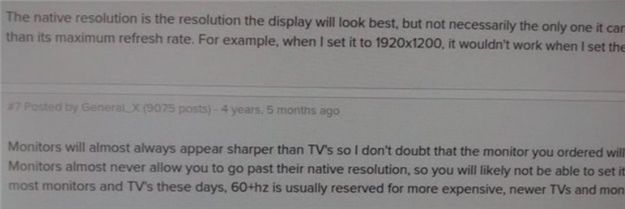

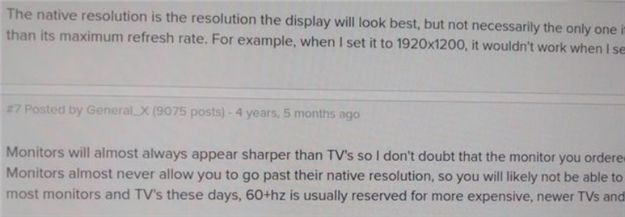

The difference is difficult to capture with a camera, but see my attempt at it below. I tried playing with the brightness, contrast, and sharpness settings, but I cannot get the same image quality. The resolution is 1920*1080 with Ubuntu 14.04 as my operating system.

VGA:

HDMI:

Why is the quality different? Is it intrinsic to these standards? Could I have a faulty VGA cable or mDP-VGA adaptor?

Why is there a difference in quality between the two?

The Answer

SuperUser contributors Mate Juhasz, youngwt, and Jarrod Christman have the answer for us. First up, Mate Juhasz:

VGA is the only analog signal among the ones mentioned above, which by itself explains the difference. Using the adapter can further degrade the quality.

Some further reading: HDMI vs. DisplayPort vs. DVI vs. VGA

Followed by the answer from youngwt:

Assuming that brightness, contrast, and sharpness are the same in both cases, there could be two other reasons why the text is sharper with HDMI-DVI.

The first has already been stated: VGA is analog, so the signal has to go through an analog-to-digital conversion inside the monitor. This will theoretically degrade image quality.

Second, assuming that you are using Windows, there is a technique called ClearType (developed by Microsoft) which improves the appearance of text by manipulating the subpixels of an LCD monitor. VGA was developed with CRT monitors in mind, where the notion of a subpixel is not the same. Because ClearType requires an LCD screen, and because the VGA standard does not tell the host the specifications of the display, ClearType would be disabled with a VGA connection.

I remember hearing about ClearType from one of its creators on a podcast for This().Developers().Life() IIRC, but this Wikipedia article also supports my theory. Also, HDMI is backward compatible with DVI, and DVI supports Extended Display Identification Data (EDID).
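For readers who want to see what a display actually reports to the host, here is a minimal Python sketch that decodes a few fields from a 128-byte EDID base block. It is an illustration, not part of the original answers. The function name `parse_edid` and the returned dictionary keys are made up for this example. The field positions used (the fixed 8-byte header, the manufacturer ID in bytes 8 and 9, the digital-input flag in bit 7 of byte 20, and the checksum over all 128 bytes) follow the published EDID layout.

```python
# Fixed 8-byte pattern that starts every EDID base block.
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])


def parse_edid(block: bytes) -> dict:
    """Decode a few fields from a 128-byte EDID base block.

    `parse_edid` and its result keys are hypothetical names for this sketch.
    """
    if len(block) < 128 or block[:8] != EDID_HEADER:
        raise ValueError("not an EDID base block")

    # Manufacturer ID: big-endian 16-bit value holding three 5-bit letters,
    # where 1 means 'A', 2 means 'B', and so on.
    raw = (block[8] << 8) | block[9]
    letters = [(raw >> shift) & 0x1F for shift in (10, 5, 0)]
    manufacturer = "".join(chr(ord("A") + n - 1) for n in letters)

    return {
        "manufacturer": manufacturer,
        # Bit 7 of byte 20 is set when the display describes a digital input.
        "digital_input": bool(block[20] & 0x80),
        # All 128 bytes of a valid block sum to 0 modulo 256.
        "checksum_ok": sum(block[:128]) % 256 == 0,
    }
```

On a Linux system such as the Ubuntu install in the question, the kernel usually exposes the raw block in files matching `/sys/class/drm/*/edid` (connector names vary by machine, and the file is empty when nothing is plugged in). Reading such a file in binary mode and passing the bytes to `parse_edid` shows whether a given connection delivers an EDID at all, and whether the monitor describes that input as digital or analog.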

With our final answer from Jarrod Christman:

The others make some good points, but the main reason is an obvious clock and phase mismatch. VGA is analog and subject to interference and mismatch of the analog sending and receiving sides. Normally one would use a pattern like this:

Then adjust the clock and phase of the monitor to get the best match and the sharpest picture. However, since it is analog, these adjustments may shift over time, and thus you should ideally just use a digital signal.
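To get a feel for why clock and phase matter, the following Python sketch simulates a monitor sampling one line of a test pattern made of alternating black and white pixels. It is a toy model built on assumptions, not a description of any real monitor: the analog signal is idealised as flat levels joined by linear ramps at each pixel boundary, and the names `analog_level`, `sample_line`, `contrast`, `rise`, `phase`, and `clock_ratio` are invented for this example.

```python
import math


def analog_level(pixels, t, rise=0.4):
    """Level of the idealised analog line at time t (in pixel periods).

    Each pixel is held for one period; neighbours are joined by a linear
    ramp of width `rise` centred on the pixel boundary (an assumption).
    """
    last = len(pixels) - 1
    i = min(max(math.floor(t), 0), last)
    frac = t - i  # position inside pixel i
    half = rise / 2
    if frac < half:  # on the ramp shared with the left neighbour
        w = 0.5 + frac / rise
        return w * pixels[i] + (1 - w) * pixels[max(i - 1, 0)]
    if frac > 1 - half:  # on the ramp shared with the right neighbour
        w = 0.5 + (1 - frac) / rise
        return w * pixels[i] + (1 - w) * pixels[min(i + 1, last)]
    return float(pixels[i])  # flat part of the pixel


def sample_line(pixels, phase=0.0, clock_ratio=1.0):
    """Sample once per pixel, as the monitor's converter would.

    phase: offset of the sampling instant from the pixel centre.
    clock_ratio: monitor sampling clock relative to the source pixel clock.
    """
    return [
        analog_level(pixels, (k + 0.5) * clock_ratio + phase)
        for k in range(len(pixels))
    ]


def contrast(samples):
    """Difference between the brightest and darkest sample."""
    return max(samples) - min(samples)


pattern = [0, 1] * 8  # alternating black/white test pattern
aligned = sample_line(pattern, phase=0.0)  # samples land on pixel centres
shifted = sample_line(pattern, phase=0.5)  # samples land on pixel boundaries
drifting = sample_line(pattern, clock_ratio=1.03)  # error grows along the line
```

With the phase aligned, every sample falls on the flat part of a pixel and the pattern is recovered exactly. With a half-pixel phase error, the samples fall in the middle of the ramps and the stripes collapse toward gray. A clock mismatch makes the sampling error grow along the line, which on a real screen shows up as vertical bands that alternate between sharp and blurry. A digital link avoids the problem because pixel values arrive as numbers rather than as a waveform that has to be resampled.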
