Your PSU is rated 80 Plus Bronze and 650 watts, but what exactly does that mean? Read on to see how wattage and power efficiency ratings translate to real-world use.

Today’s Question & Answer session comes to us courtesy of SuperUser, a subdivision of Stack Exchange, a community-driven grouping of Q&A web sites.

The Question

SuperUser reader TK Kocheran is curious about power supplies:

If I have a system running at ~500W of power draw, will there be any tangible difference in the outlet wattage draw between a 1200W power supply vs, say, an 800W power supply? Does the wattage only imply the max available wattage to the system?

What is the difference? And what, for that matter, do the 80 Plus designations mean on modern PSUs?

The Answer

Contributors Mixxiphoid and Hennes share some insight into the PSU labeling methods. Mixxiphoid writes:

The wattage of your power supply is what it could potentially supply. However, in practice the supply won't ever reach that. I always count 60% of the capacity as the true maximum capacity. Today, however, there are also Bronze, Silver, Gold, and Platinum power supplies which guarantee a certain amount (minimum of 80%) of efficiency. See this link for a summary of 80 PLUS labels.

Example: If your 1200W supply has an 80 PLUS label on it, it will probably supply 1200W but will consume 1500W. I think your 800W supply will be sufficient, but it won't guarantee you safety.

Hennes explains the value of a system-appropriate PSU:

The wattage implies the maximum available wattage to the system.

However note that the PSU draws AC power from the wall socket, converts it to some other DC voltages, and provides those to your system. There is some loss during this conversion. How much depends on the quality of your PSU and on how much power you draw from it.

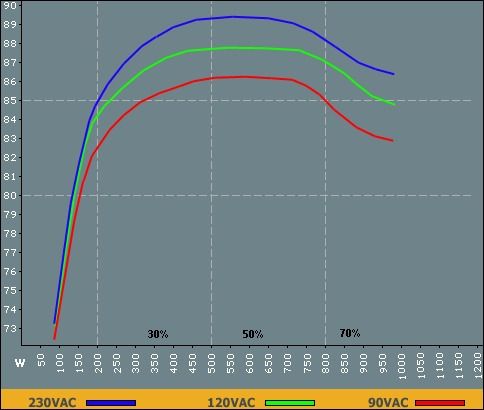

Almost any PSU is very inefficient when you draw less than 20% of max rated power from it. Almost any PSU has less than peak efficiency when you draw close to the max rated power from it. Almost any PSU has its optimum efficiency around 40% to 60% of maximum load.

Thus if you get a PSU which is 'just large enough' or 'way too big', it is likely to be less efficient.
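As a rough illustration of those rules of thumb, here is a minimal Python sketch that sorts a DC load into the bands described above. The function name `load_band` and the 90% cutoff for "close to the max rated power" are assumptions made for this example; the answer itself gives no number for that cutoff, and real efficiency curves vary from one PSU to the next.

```python
def load_band(load_watts: float, rated_watts: float, near_max: float = 0.90) -> str:
    """Classify a DC load using the rough bands from the answer.

    near_max is a hypothetical cutoff for "close to the max rated power";
    the original answer does not state one.
    """
    if rated_watts <= 0:
        raise ValueError("rated_watts must be positive")
    fraction = load_watts / rated_watts
    if fraction < 0.20:
        return "very inefficient (under 20% load)"
    if 0.40 <= fraction <= 0.60:
        return "near optimum (40% to 60% load)"
    if fraction >= near_max:
        return "below peak (close to max rating)"
    return "in between the bands"


# Example loads on a 650 W supply (illustrative values only)
for watts in (100, 325, 640):
    print(f"{watts} W on a 650 W PSU: {load_band(watts, 650)}")
```

Loads that fall between the named bands are reported as such, since the answer only describes the extremes and the sweet spot.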

[But note that your PC does not consume a fixed or constant level of power. At idle, when not much is happening, the DC power consumed will be low. Perform a lot of processing and I/O operations, and power demand goes up.]

A nice example of a real-world efficiency graph is this:

will there be any tangible difference in the outlet wattage draw between a 1200W power supply vs, say, an 800W power supply?

The 800 Watt PSU would run at 62.5% of max rating. That is a good value.

The 1200 Watt PSU would run at only about 42% of its maximum rating. That is still within the normally accepted range, but at the low end. If your system is not going to change, then the 800 Watt PSU is the better choice.
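The load percentages for the two supplies are simple ratios of system draw to rated capacity. A short Python sketch of that arithmetic, using the 500 W draw from the question (the helper name `load_percent` is just for illustration):

```python
def load_percent(system_watts: float, rated_watts: float) -> float:
    """Return the system's DC draw as a percentage of the PSU's rating."""
    return 100.0 * system_watts / rated_watts


# The ~500 W system from the question on each candidate PSU
for rated in (800, 1200):
    print(f"{rated} W PSU: {load_percent(500, rated):.1f}% of rating")
# prints:
# 800 W PSU: 62.5% of rating
# 1200 W PSU: 41.7% of rating
```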

Note that even with a good PSU (Bronze+ or Silver rated) you are still losing about 15% during conversion. At 85% efficiency, a computer that uses 500 Watt makes the PSU draw about 588 Watt from the wall socket (500 / 0.85).
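The wall-socket figure comes from dividing the DC load by the PSU's efficiency. A minimal Python sketch, assuming the efficiency is a single fixed number at the given load (in practice it changes with load, as noted earlier); the function name `wall_draw` is hypothetical:

```python
def wall_draw(dc_watts: float, efficiency: float) -> float:
    """AC power drawn from the wall for a given DC load.

    efficiency is a fraction between 0 and 1 (0.85 means 85%).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    return dc_watts / efficiency


# Hennes's example: 500 W load with about 15% conversion loss
draw = wall_draw(500, 0.85)
print(f"wall draw: {draw:.0f} W, lost as heat: {draw - 500:.0f} W")
# prints: wall draw: 588 W, lost as heat: 88 W

# Mixxiphoid's example: a full 1200 W load at 80% efficiency
print(f"wall draw: {wall_draw(1200, 0.80):.0f} W")
# prints: wall draw: 1500 W
```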

Clearly, you should aim to have your PSU sized appropriately for your system: putting a high-wattage PSU into a basic desktop machine doesn't increase your safety margin, and it decreases your efficiency, costing you more money in the long run.

Have a useful link or comment to add to the discussion? Sound off in the comments below. Want to read more answers from other tech-savvy Stack Exchange users? Check out the full discussion thread here.