Your browser sends its user agent to every website you connect to. We’ve written about changing your browser’s user agent before – but what exactly is a user agent, anyway?

A user agent is a “string” – that is, a line of text – identifying the browser and operating system to the web server. This sounds simple, but user agents have become a mess over time.

The Basics

When your browser connects to a website, it includes a User-Agent field in the headers of its HTTP request. The contents of this field vary from browser to browser, so each browser has its own distinctive user agent. Essentially, a user agent is a way for a browser to say “Hi, I’m Mozilla Firefox on Windows” or “Hi, I’m Safari on an iPhone” to a web server.
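To make the header concrete, here is a minimal sketch of the text a browser might send when it requests a page. The `build_request` helper and the `example.com` host are assumptions made for illustration, and a real browser sends many more headers than this.

```python
def build_request(host: str, path: str, user_agent: str) -> str:
    """Return the text of a bare-bones HTTP/1.1 GET request (hypothetical helper)."""
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",
        f"User-Agent: {user_agent}",  # the field this article is about
        "",  # an empty line marks the end of the headers
        "",
    ]
    return "\r\n".join(lines)


firefox_ua = "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:12.0) Gecko/20100101 Firefox/12.0"
request_text = build_request("example.com", "/", firefox_ua)
print(request_text)
```

The user agent is just one more line of text in the request, which is why it is so easy for a browser to change it.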

The web server can use this information to serve different web pages to different web browsers and different operating systems. For example, a website could send mobile pages to mobile browsers, modern pages to modern browsers, and a “please upgrade your browser” message to Internet Explorer 6.
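As a rough sketch of how a server might make that choice, the function below picks a page variant from the user agent. The function name, the variant names, and the specific keywords it looks for are all assumptions for illustration. Real sites use their own rules.

```python
def choose_page(user_agent: str) -> str:
    """Pick a page variant from the User-Agent header (illustrative rules only)."""
    if "MSIE 6." in user_agent:
        return "upgrade-notice"  # the "please upgrade your browser" page
    if "iPhone" in user_agent or "Mobile" in user_agent:
        return "mobile"
    return "modern"


# Example user agents, assumed here for illustration.
ie6_ua = "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1)"
iphone_ua = (
    "Mozilla/5.0 (iPhone; CPU iPhone OS 5_1 like Mac OS X) "
    "AppleWebKit/534.46 (KHTML, like Gecko) Version/5.1 Mobile/9B176 Safari/7534.48.3"
)
desktop_ua = "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:12.0) Gecko/20100101 Firefox/12.0"

for ua in (ie6_ua, iphone_ua, desktop_ua):
    print(choose_page(ua))
```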

Examining User Agents

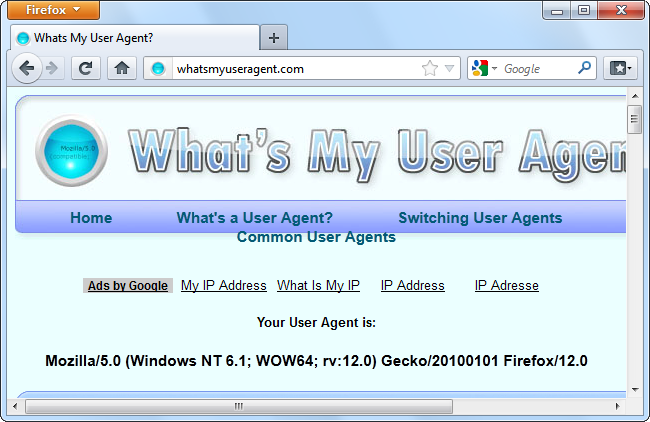

For example, here’s Firefox’s user agent on Windows 7:

Mozilla/5.0 (Windows NT 6.1; WOW64; rv:12.0) Gecko/20100101 Firefox/12.0

This user agent tells the web server quite a bit: The operating system is Windows 7 (which reports its internal version number, Windows NT 6.1), it’s a 64-bit version of Windows (WOW64 indicates a 32-bit browser running on 64-bit Windows), and the browser itself is Firefox 12.

Now let’s take a look at Internet Explorer 9’s user agent, which is:

Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0)

The user agent string identifies the browser as IE 9 with the Trident 5 rendering engine. However, you might spot something confusing – IE identifies itself as Mozilla.

We’ll come back to that in a minute. First, let’s examine Google Chrome’s user agent, too:

Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.52 Safari/536.5

The plot thickens: Chrome is pretending to be both Mozilla and Safari. To understand why, we’ll have to examine the history of user agents and browsers.
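One way to see what each string claims is to pull out its `name/version` pairs. The sketch below does this with a simple regular expression. The pattern and the `product_tokens` name are assumptions for illustration, not a complete user agent parser, and it deliberately ignores details inside the parentheses such as `MSIE 9.0`.

```python
import re

# Matches "Name/version" pairs such as "Chrome/19.0.1084.52".
PRODUCT = re.compile(r"([A-Za-z][\w.-]*)/([\w.]+)")


def product_tokens(user_agent: str) -> list:
    """Return the (name, version) pairs found in a user agent string."""
    return PRODUCT.findall(user_agent)


chrome_ua = (
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.5 "
    "(KHTML, like Gecko) Chrome/19.0.1084.52 Safari/536.5"
)
ie9_ua = "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0)"

print(product_tokens(chrome_ua))
# [('Mozilla', '5.0'), ('AppleWebKit', '536.5'), ('Chrome', '19.0.1084.52'), ('Safari', '536.5')]
print(product_tokens(ie9_ua))
# [('Mozilla', '5.0'), ('Trident', '5.0')]
```

Chrome’s string names four products, and only one of them is Chrome.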

The User Agent String Mess

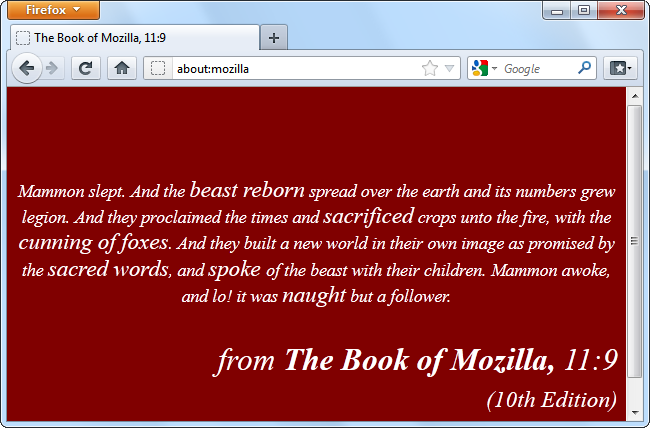

Mosaic was one of the first browsers. Its user agent string was NCSA_Mosaic/2.0. Then Mozilla came along (it was later renamed Netscape), and its user agent was Mozilla/1.0. Mozilla was a more advanced browser than Mosaic – in particular, it supported frames. Web servers checked to see that the user agent contained the word Mozilla and sent pages containing frames to Mozilla browsers. To other browsers, web servers sent the old pages without frames.

Eventually, Microsoft’s Internet Explorer came along and it supported frames, too. However, IE didn’t receive web pages with frames, because web servers just sent those to Mozilla browsers. To fix this problem, Microsoft added the word Mozilla to its user agent and threw in additional information (the word “compatible” and a reference to IE). Web servers were happy to see the word Mozilla and sent IE the modern web pages. Other browsers that came later did the same thing.

Eventually, some servers looked for the word Gecko – Firefox’s rendering engine – and served Gecko browsers different pages than older browsers. KHTML – originally developed for Konqueror on Linux’s KDE desktop – added the words “like Gecko” so it would get the modern pages designed for Gecko, too. WebKit was based on KHTML – when it was developed, its authors added the word WebKit and kept the original “KHTML, like Gecko” line for compatibility purposes. In this way, browser developers kept adding words to their user agents over time.

Web servers don’t really care what the exact user agent string is – they just check to see if it contains a specific word.
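The sketch below shows why this kind of keyword check led to the pile-up of names. A single Chrome user agent passes checks written for several other browsers, so any code that wants the real browser has to test for the more specific words first. Both helper functions and the keyword lists are assumptions for illustration.

```python
CHROME_UA = (
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.5 "
    "(KHTML, like Gecko) Chrome/19.0.1084.52 Safari/536.5"
)


def matched_keywords(user_agent, keywords=("Mozilla", "Gecko", "KHTML", "Chrome", "Safari")):
    """Return every keyword that a simple substring check would find."""
    return [word for word in keywords if word in user_agent]


def guess_browser(user_agent):
    """Guess the browser, checking the most specific tokens first."""
    checks = (
        ("Internet Explorer", "MSIE"),
        ("Chrome", "Chrome/"),   # must come before Safari: Chrome also says "Safari/"
        ("Safari", "Safari/"),
        ("Firefox", "Firefox/"),
    )
    for name, token in checks:
        if token in user_agent:
            return name
    return "unknown"


print(matched_keywords(CHROME_UA))  # ['Mozilla', 'Gecko', 'KHTML', 'Chrome', 'Safari']
print(guess_browser(CHROME_UA))     # Chrome
```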

Uses

Web servers use user agents for a variety of purposes, including:

- Serving different web pages to different web browsers. This can be used for good – for example, to serve simpler web pages to older browsers – or evil – for example, to display a “This web page must be viewed in Internet Explorer” message.

- Displaying different content to different operating systems – for example, by displaying a slimmed-down page on mobile devices.

- Gathering statistics showing the browsers and operating systems in use by their users. If you ever see browser market-share statistics, this is how they’re acquired.

Web-crawling bots use user agents, too. For example, Google’s web crawler identifies itself as:

Googlebot/2.1 (+http://www.google.com/bot.html)

Web servers can give bots special treatment – for example, by allowing them through mandatory registration screens. (Yes, this means that you can sometimes bypass registration screens by setting your user agent to Googlebot.)

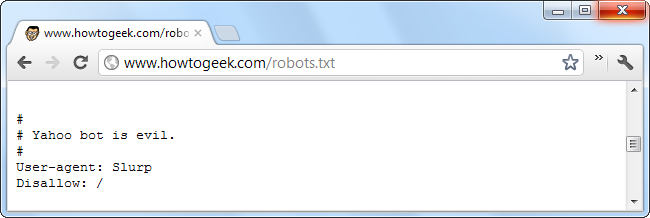

Web servers can also give orders to specific bots (or all bots) using the robots.txt file. For example, a web server could tell a specific bot to go away, or tell another bot to only index certain areas of the website. In the robots.txt file, the bots are identified by the names in their user agent strings.
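Here is a small sketch of how those rules work, using the `urllib.robotparser` module from Python’s standard library. The robots.txt contents, the `BadBot` and `OtherBot` names, the paths, and the `allowed` helper are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt: one bot is banned, one is kept out of /private/,
# and every other bot is kept out of /tmp/.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(bot: str, path: str) -> bool:
    """Check whether the named bot may fetch the given path on example.com."""
    return parser.can_fetch(bot, "http://example.com" + path)


print(allowed("BadBot", "/index.html"))       # False
print(allowed("Googlebot", "/private/data"))  # False
print(allowed("Googlebot", "/index.html"))    # True
print(allowed("OtherBot", "/tmp/cache"))      # False
```

Note that robots.txt is only a request. A well-behaved bot checks it before crawling, but nothing forces a bot to obey it.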

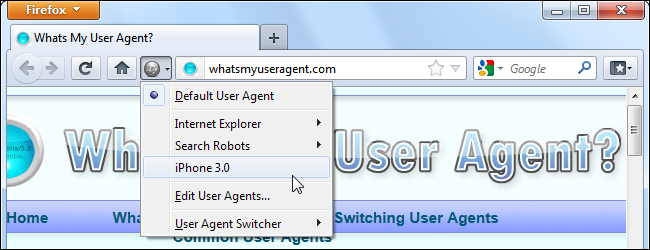

All major browsers contain ways to set custom user agents, so you can see what web servers send to different browsers. For example, set your desktop browser to a mobile browser’s user agent string and you’ll see the mobile versions of web pages on your desktop.
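The same trick works outside the browser. As a minimal sketch, the snippet below prepares a request with Python’s standard `urllib.request` module and a custom user agent. The iPhone string and the `example.com` address are examples chosen for illustration, and the request is only built here, not sent.

```python
from urllib.request import Request

# An example mobile user agent, assumed here for illustration.
MOBILE_UA = (
    "Mozilla/5.0 (iPhone; CPU iPhone OS 5_1 like Mac OS X) "
    "AppleWebKit/534.46 (KHTML, like Gecko) Version/5.1 Mobile/9B176 Safari/7534.48.3"
)

request = Request("http://example.com/", headers={"User-Agent": MOBILE_UA})

# urllib stores header names in capitalized form, so the key is "User-agent".
print(request.get_header("User-agent"))

# Passing `request` to urllib.request.urlopen() would send it over the network.
```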